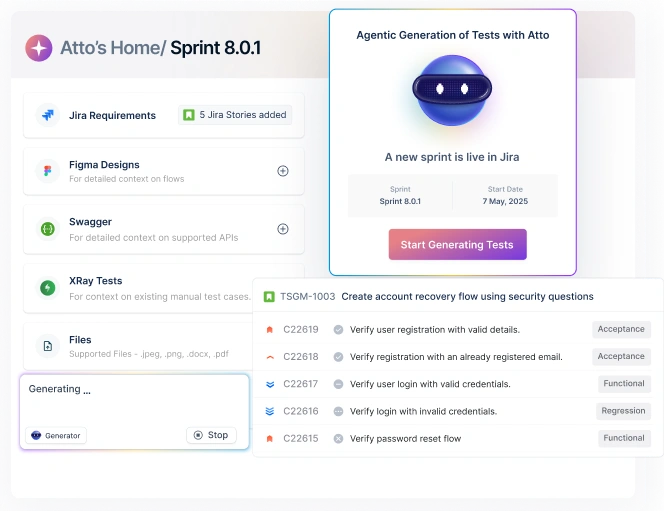

Prompt Templates for Pro-level test cases

Get prompt-engineered templates that turn requirements into structured test cases, edge cases, and negatives fast every time.

ETL Test Automation is critical as it enables us to ensure that data is correct and helps our organization to trust that data. It is a methodology to test the ETL process by validating and comparing source data to destination data. In simple words, we can say that ETL Test Automation helps us to ensure the quality and accuracy of extracted, transformed, and loaded data. But how important is ETL Test Automation? In this blog, let’s understand ETL test automation, types of ETL testing, the need for ETL test automation, and Challenges in ETL Testing.

“There are 5 exabytes of information created between the dawn of civilization through 203, but that much information is now created every two days.” – Eric Schmidt.

Table Of Contents

- 1 What is ETL?

- 2 Why do we use ETL?

- 3 Comparison with ELT

- 4 Need for ETL Test Automation

- 5 What are the Types of ETL Tests?

- 6 ETL Test Automation Processes

- 7 Challenges of ETL Testing

- 8 Test Automation in ETL and where we stand today

- 9 Best ETL Test Automation Testing Tools

- 10 Wrapping it up!

- 11 Frequently Asked Questions

What is ETL?

When we talk about data processing (a part of it though), we are generally not talking about a single process but a combination. One such pipeline of the process is called ETL. The term ETL stands for Extract, Transform, and Load. These three processes are concerned with data and are written as well as performed in the same sequence. A high-level and highly technical diagram illustrated on Wikipedia shows pre and post-steps of ETL as follows:

The middle part is the complete ETL process with its phases and pipeline. Magnifying the same area (as it is what we are most interested in), we get the following sequence:

It is also a common phenomenon these days to launch different applications for different devices into the market. For instance, if I consider Amazon Music, you might be able to encounter an android based application, a windows-based application, and a web application that can provide you with the same experience (except for the native features).

Not only this, you will get consistent information across all these applications. For instance, you can play a song on your mobile application and later find the same song in the recently played section of the web application. This is a classic example of cross-platform applications.

Such a phenomenon widens the sources that contribute to the data. Today, when 5 billion people are accessing the internet, they are expected to generate data that is impossible to perform operations on manually. So, ETL systems are made in a way that is capable of fetching the data from these sources and processing it further. When the system is complete and working, it reaps out benefits that we will discuss in the later section. Before that, let’s take a brief look at the individual components of the ETL system.

Extract

The first processing that we do on the data is extraction. This process is defined technically as “extracting data from homogeneous or heterogenous sources.” The term “homogenous or heterogenous” signifies that there can be a similar type of data, or it can differ according to the source from which it is being extracted. For example, if one source is an IoT device sensor, it will float data differently than what a web applicationwould send.

The design and robustness of the extract method play a vital role in the ETL process as it is the first stage that’s in contact with raw data (i.e., data directly saved by the device). The extract method is divided into three main types:

i. Partial extraction with update notification – The easiest and most performance-effective method of data extraction is partial extraction with an update notification. In this method, the system identifies and notifies the updated data to the system of the source location.

ii. Partial extraction without update notification – Some systems cannot provide an update notification as a trigger to start the process. However, they can point us to the updated records when explicitly asked. This is why we need to run the methods on pre-decided intervals from outside in such systems.

iii. Full extraction – When the above two types of systems are unavailable, we are left with a system that can neither update us on the changes nor point to changes when asked. In this case, we need to run full extraction, i.e., extracting the complete data. Then, we need to analyze the changes ourselves. For this, we need the last extracted file to compare the changes as we cannot forward the complete data which is mixed with duplicates and bring down the efficiency. This is the most inefficient system.

When we are done extracting the data in this process, it goes ahead to transformation.

Transform

Once our data is extracted, we arrive at the most critical phase of the ETL cycle – the transformation. The data we collect in the extract phase is generally raw data and cannot be used directly as extracted. It may have flaws, like duplicate data that can change the analytics side of the business.

The transformation of data includes applying certain techniques or rules and regulations to clean the data and make it meaningful or according to what our data warehouse would require. The steps may include but are not limited to validating the data, removing redundant data, formatting the data, etc.

The data is kept in the staging area until the transformation is complete.

Load

The final process to complete the ETL cycle is the loading. Once our transformations are done, the data is now compatible with the target database. It can be a data warehouse or a simple database. Although we work on big data, the target is the data warehouse, and the data is in bulk quantity. Therefore, completing the loading process may take a lot of time.

To optimize our system, we divide the loading process into three categories:

- Initial loading – This is the first time loading and requires more time.

- Incremental loading – Only loading the data that is unique and required to be loaded into the database. This is done to save time and is an efficient load method.

- Refresh – The refresh method loads complete data and replaces the previous data in the warehouse. This takes time and since a lot of data might be duplicated, it should only be done when highly required.

This completes our ETL process, and by the end of it, all our data is fed into the data warehouse.

Why Do We Use ETL?

At this point in this post, I am confident that you must have figured out the benefits of using ETL in the process. Definitely, I won’t bore you by writing long bulleted lists of infinite merits. But, we can point out a few major ones to be on the same page.

Data is flown in terabytes and petabytes, even in small-sized businesses. The amount is unimaginable for large businesses such as YouTube and Facebook. In such scenarios, manual inspection is not just hard but also impossible to perform.

ETL systems are faster and automated in nature. Their automation helps us apply logic and rules that automatically filters out the data, and most of the time, only focus on what’s not duplicated.

Today, due to ETL systems, we save a lot of time, work parallelly on multiple data sources and generate higher revenue. These things make this an essential component of data warehousing or data mining.

Comparison with ELT

Another similar concept of ELT exists in the market regarding data extraction, cleaning, and processing. The letters are abbreviated for similar processes here, i.e., extraction, loading, and transformation. However, the last functions are switched. In ELT, we perform loading first and then apply the transformation cycle.

In the big data world, ELT and ETL are the two processes that are often compared to each other as their methods and principles are the same; just the processes have switched. So, in this post, we cannot stamp out one of them as the guaranteed best method but rather leave it to you because it depends on the type of your organization.

So, what changes are observed in ELT as compared to ETL?

ELT loads the data first and transforms it later. Transformation is the most time taking process because of the methods involved. So, if you require data to work on the loaded system for analysis without worrying about the transformed data, loading first does make sense rather than just waiting for the transformation to complete.

Since ELT uses loading first before transforming and loading happens in the data warehouse, it makes sense to perform the transformation directly in the warehouse. This is not the case with ETL; the transformation is on a different server. A direct effect of this is that ELT becomes a faster pipeline than ETL.

ETL is an old practice. It became popular 50 years ago in the 1970s; therefore, there has been a lot of exploration and advancement in this. ELT, on the other hand, is extremely new and has very little documentation and exploration into depth. An organization might struggle to find experienced data management employees for ELT. This would not be the case with ETL.

Apart from these three main differences, there are many that you would find once you start using these pipelines. However, those would be the direct or indirect effects of the differences mentioned above.

Need for ETL Test Automation

Due to data size and the multiple processes involved, the amount of work demanded in data processing makes us look nowhere else but towards automation. The strength of automation in executing tasks faster is precisely what we need to complete a massive amount of work in a short time. Hence, ETL automation in development is not a rare sight in an organization.

A similar need arises in the testing department for ETL. Since so much of the responsibility is riding on top of ETL tools, ensuring they are always in the best possible shape is extremely important. Even if the ETL tools start ignoring a single fault such as not recognizing the duplicate data, it could cost millions or 100 times more than just doing it manually.

On the other hand, we may also observe a lot of inconsistencies in various copies of data. For example, how can we ensure that the data we get after the transformation is the same data used in the production? Such things can be answered by ETL test automation.

What Are the Types of ETL Tests?

Since the data is a secret to a lot of things and optimizations to it can move things faster, one generalized type of testing cannot cover all the scenarios. Therefore, in ETL test automation, we generally divide the testing paradigms and focus on each, with most of the time different testers assigned to them.

DATA Integration Testing

This part of ETL test automation ensures that the data is well integrated into the data warehouse from all the sources. If the data integration goes wrong, we may lose a large chunk of essential data.

DATA Quality Testing

Harvard Business Review reports that only 3% of the companies meet the data quality standards. This stat is extremely low considering the amount of information we fetch from the data in our analytical systems. If data quality is poor, our analytical results might not be as accurate as they seem.

Data quality testing covers (but is not limited to) the following essential parts:

- Testing if the final loaded data is error-free.

- Testing if the final data is not duplicated from any of the sources.

- Testing if the data loaded into the warehouse is consolidated.

- Testing if the data we are getting is accurate. A lot of the time, users may enter data just to fill it out as it is mandatory, or they expect a reward in return. This needs to be taken care of while testing for the quality of data.

You may also check data quality dimensions to check the focus areas of data quality.

Applying tests to each dimension can reap fruitful results automatically.

Performance Testing

Similar to the general performance testing of an application, we need to test the ETL part separately for performance. Here, by performance, we mean the progress of the complete pipeline and whether its metrics are satisfactory. This may include the servers and their time thresholds in delivering the results. The testers may also include scalability here, considering the future. However, this should not be the prime focus of performance testing in ETL test automation.

DATA Transformation Testing

As the name suggests, data transformation testing verifies the “transformation” part of the ETL. In this type of ETL automation testing, we verify whether the rules that we applied are transforming data correctly or not and generating the output as expected, i.e., according to the business rules.

Report Testing

Once the ETL process is run entirely, it generates a report for the employees to analyze the data or parameters the company has decided to put on. This report is an essential aspect of ETL as any fault may lead to a false understanding of the data and its quality. Testing it thoroughly is, therefore, an important task.

In the report testing part of ETL test automation, we analyze the content of the report, the analysis fields, the various logics applied (such as formulas and calculations), and whether they generate the visual representation according to the results.

We can also check if the values defined in the report match the actual values in the data. This way we can ensure that whatever we see when ETL runs is the real analysis without faults.

Apart from these main testing, you may find other testing types in ETL test automation such as counting the columns of source and testing data in each phase, etc.

ETL Test Automation Processes

The ETL test automation process is not as simple as when we do it without the involvement of data. In that scenario, we see the functionality and start writing test cases to check them. Here, functionality is not involved, but we need to ensure that the data is consistent and we are processing exactly what should be processed. Since we cannot see the data all the time in each phase, the process of ETL test automation becomes a bit long and organized. These processes are defined below.

Exploring and Gathering the Requirements

It is always expected from a good tester that they understand what they are testing and why that specific thing is built in the first place. These questions can be tracked back to business requirements and what the initial idea for the project was.

In the ETL process, we cannot directly define what we are working on because it is not visually represented like a website. Therefore, if a tester does not understand the requirements and the design of ETL, they are bound to make faulty test cases that can compromise the data quality.

Exploring the DATA Sources

Once you are all set with the business requirements, you need to understand the data sources and their working. An ETL process may have many data sources that transfer data in various formats. Testers need to be clear on which data source works and how test cases can be formed to verify whether each format is converted to the expected one for the transformation logic.

Work on the test cases

At this point, you have briefed yourselves on all the critical things required in the test cases. Hence, you can start creating test cases and designing them for optimum results.

Report the Bugs Encountered

In the previous step, you will encounter various bugs in different departments. Analyze those bugs and create a detailed report. Since this is not something like a website that we can show, the report must be as detailed as possible. The bug report should also contain reproducibility steps and various cases (or examples) where the bug is encountered.

Test Transformation Logic

The next phase tests the logic that we have applied in the transformation phase of ETL. Here, we need to check if our data is in the same schema as we expect. We should also tend to corner cases and how the ETL pipeline responds when such a case is encountered. The transformation phase is crucial in ETL; therefore, each logic should be checked thoroughly.

Test Loading Logic

The next phase in ETL test automation is to test the loading logic, i.e., the final stage of ETL. Here, we need to test whether the loading has happened according to the expected rules or not. These may include verifying whether the complete data that was required to be loaded has loaded or not. We can also check if there are any discrepancies in the loaded data and if there are default data or not.

Prepare a Final Report – Close Tests

Everything you have verified or discovered has to be reported in the final test closure report. Reports are a great way to analyze your work within minutes, and since it is the only document that will represent the results, they cannot afford any bugs or faults in it. Therefore, prepare this document carefully.

Put down all the requirements that you analyzed in the first step so that management can know whether you missed something or not. Missing one requirement means you have missed all the tests related to it. You can also put down the data that was fed into each phase and the data that came out of each. Take your time, design a report carefully, and work according to your organization’s report requirements.

Challenges of ETL Testing

ETL testing ensures the accuracy and quality of data processed through extraction, transformation, and loading. Challenges in ETL testing include large data volumes, complex data sources, data quality issues, and complex data transformations. A few more challenges of ETL Testing are as follows:

- Generating representative test data

- Maintaining and updating tests

- Setting up a separate test environment

- Integrating with testing tools

- Debugging and troubleshooting automated tests

- Developing reliable and comprehensive test scripts

- Executing tests

Test Automation in ETL and Where We Stand Today

ETL is a complex process, and designing tools for it has been a tough job to do. In development scenarios, ETL asks for automated data extraction, applying logic and loading it onto the target data warehouse. However, since this is a linear method, it is achievable using automation. On the other side, with ETL test automation, the story has been a bit different.

In the previous two sections, we got a gist of ETL test automation and the tasks we need to consider to achieve that. Let’s pick data quality testing from the ETL test types to understand it a bit more. Here, we consider many things, out of which two are: verifying error-free data and figuring out irrelevant data.

For these two, it is easier to ascertain how hard automation can be. For instance, how can we possibly automate the testing for verifying irrelevant data when the user has passed all the validation checks?

Working with data is extremely tough and unpredictable. Unlike mobile or web applications, the execution is in multiple directions. For example, for an input field that takes a name as the input, it would probably not matter whether the user puts the correct name or just random letters.

What matters is whether our web or mobile application is working with input validations or not. But when data quality comes into the picture, we add one more dimension where we need to be sure that even if this is a name field, it has to be a real name (this is just an example).

If we take the same example without automation, a human can easily analyze the string and tell whether it is a real name or random letters. If a tester has understood the requirements and logic behind the transformations, analyzing the data is easier manually but time-consuming.

Therefore, as of now, ETL test automation has had a slow start and growth. It has undoubtedly not covered each aspect but can be applied to reduce the overall time effect on the testing phase. Also, we see some tools coming up in the market to play their part in ETL test automation which gives us a positive note for the future.

Best ETL Test Automation Testing Tools

Here is a list of top ETL Test Automation Testing Tools for you:

Rightdata

RDt is a self-service ETL testing tool designed to help teams with the automation of testing data quality, assurance, and data QC processes.

Icedq

iCEDQ is a platform for Data Testing and Monitoring. It helps organizations automate ETL Testing, Cloud Data Migration Testing, and Data Monitoring.

Integrate.io

It is a data integration, ETL, and ELT tool. Integrate.io is a cloud-based platform that will simplify data processing by providing an intuitive graphic interface to implement an ETL, ELT, or replication solution.

Dataq

It is an AI-based ETL/ELT Testing tool and the first to support hybrid/multi-cloud. It can scale up and handle both structured and semi-structured data.

Big EVAL

BiG EVAL is a complete suite of software tools to leverage business data’s value by constantly validating and monitoring quality. It ensures automating the testing tasks during ETL and provides quality stats in production.

Wrapping it up!

Working with data is a mysterious and unpredictable journey for developers and testers. The huge possibilities with even a mediocre amount of data make us switch towards something structured in nature. This brings out the birth of ETL, i.e., extraction, transformation, and loading of the data.

ETL pipeline defines three stages by which data should pass through to make things easier at the end. With extraction, we carefully measure what to take and where to take it. In transformation, we apply some logic or rules to the extracted data and transform it into a format that our data warehouse and backend logic understands.

In loading, we load our transformed data to the target server. By the end of it, we will have structured and meaningful data that is not repetitive and can be used to generate revenue or other data mining stuff.

Throughout this post, we tried to brief you about ETL and its need in today’s world. While ETL automation in development is not a rare spectacle, it surely is when it comes to automated testing. Since the pipeline is complex and covering all the scenarios is extremely hard, ETL test automation is taking time to develop, which is quite evident from the current progress graph.

I hope this post enlightens you in the right direction and has helped you understand the complex nature of data and what we are doing to deal with it. For any queries, comments or suggestions, do let us know in the comment section and we will respond to you back. Thank you for giving this post your valuable time.

Frequently Asked Questions

1. What is ETL Test Automation?

Ans: ETL Test Automation is a methodology to test the ETL process by validating and comparing source data to destination data. This ensures that data is accurate and helps our organization trust that data.

2. Which Automation Tool is Best for ETL Testing?

Ans: There are a lot of tools available for ETL automation testing. A few of them are as follows:

- RightData

- iCEDQ

- DataQ

- Integrate.io

- BiG EVAL

3. Can We Use Selenium for ETL Testing?

Ans: You can use Selenium to Automate ETL testing, but there are more efficient ways to do ETL testing.