
Table Of Contents

- 1 Overview

- 2 What is Configuration Testing?

- 3 Key Objectives of Configuration Testing

- 4 Why Configuration Testing Matters Today?

- 5 A Short Checklist of Common Configuration Components to Test

- 6 Types of Configuration Testing (With Real Examples)

- 7 Configuration Testing vs Compatibility Testing vs Cross-Browser Testing

- 8 Step-by-Step Framework to Run Configuration Testing

- 9 Configuration Testing Challenges & How to Overcome Them

- 10 Tools to Effectively Run Configuration Testing

- 11 Scale Your Configuration Testing with Testsigma

- 12 FAQs

Overview

What is configuration testing?

Testing software across different environment setups to ensure it works consistently for all users regardless of their device, browser, OS, or network conditions.

Why configuration testing matters today?

- Reduces environment-related support tickets

- Protects revenue from broken checkout flows

- Prevents user abandonment from compatibility issues

- Validates responsive design on real devices

- Ensures accessibility compliance

- Avoids reputation damage from platform-specific bugs

Types of configuration testing

- Single Configuration Testing

- Multi-Configuration Testing

- Compatibility-Focused Configuration Testing

- Network Configuration Testing

- Security Configuration Testing

When you ship code, you’re shipping it to thousands of different environments you’ll never see. Your users aren’t running the same setup you developed with. Some are stuck on Internet Explorer because of corporate policies while others use Firefox on Linux or Safari on five-year-old iPads. Each combination processes your code differently, and any one can break in ways your local environment won’t show. Configuration testing is how you catch those breaks before they reach production. Let’s break down what it actually means, how it works in practice, and how to get started without overwhelming your testing pipeline.

What is Configuration Testing?

Configuration testing verifies that your software functions correctly across different hardware, software, operating systems, browsers, devices, and network environments. It identifies compatibility issues that only appear under specific setups.

Your users access products from dozens of browsers, operating systems, and devices simultaneously. What works perfectly on Chrome 120 might break on Firefox 115 or Safari 17. A feature that renders beautifully on your 1920×1080 monitor could break on a 768px tablet or a 4K display.

Network conditions also vary. Your local fiber connection doesn’t reflect what users on corporate VPNs or mobile networks experience. So, features that load instantly for you might time out or fail for them.

Without testing across these variations, you’re pushing compatibility bugs straight to users.

Here’s when you actually need it:

- Multi-platform applications that run on web, mobile, and desktop require validation across each platform’s unique behaviors.

- Browser-based products need testing across Chrome, Firefox, Safari, and Edge, including their different version releases.

- Mobile apps must work across iOS and Android devices with varying screen sizes, OS versions, and manufacturer customizations.

- Enterprise software often supports legacy systems where customers run older browsers or operating systems that handle code differently.

- Global applications serve users across regions with different network speeds, latencies, and bandwidth limitations.

- Compliance-driven products must meet accessibility standards that require functionality across specific configurations and assistive technologies.

Key Objectives of Configuration Testing

Beyond just checking if your app runs on different environments, configuration testing helps you to:

- Ensure cross-platform compatibility: Verify that features work consistently across Windows, macOS, Linux, iOS, and Android devices.

- Detect dependency failures: Identify when specific library versions, runtime environments, or system configurations cause unexpected breaks that don’t appear locally.

- Identify performance bottlenecks: Spot environment-specific slowdowns caused by hardware limitations, network conditions, or resource constraints that affect user experience.

- Verify real-world coverage: Test against actual user environments rather than idealized lab conditions to catch problems before your audience does.

Why Configuration Testing Matters Today?

Users expect your product to work flawlessly regardless of how they access it. One broken experience on their preferred browser or device and they’re gone, often without bothering to report the issue.

Here’s why configuration testing is important for your software:

- Reduces support costs by preventing environment-related tickets that eat up engineering time

- Protects revenue by ensuring checkout flows, payments, and critical features work across all user configurations

- Improves user retention since customers don’t abandon your product due to compatibility issues they encounter

- Validates responsive design across actual devices and screen sizes, not just browser resize tools

- Supports accessibility compliance by testing with assistive technologies and configurations required by regulations

A Short Checklist of Common Configuration Components to Test

Pick components that actually impact how your software behaves in production. Here’s a quick checklist that will help:

Hardware Configurations

- Processors to determine computational speed and performance under load

- GPUs for graphics rendering and video playback capabilities

- RAM variations to check memory-intensive operations and multitasking behavior

- Storage types (SSD vs HDD) to validate load times and data access patterns

- Device capabilities like GPS, cameras, or touch screens for feature availability

Software Configurations

- OS versions to catch platform-specific code execution differences

- Drivers to ensure peripheral devices function correctly

- Libraries and dependencies to verify version compatibility

- Runtime environments (Node.js, Python versions) to prevent breaking changes

- Security patches that might alter API behaviors

Browser & Device Configurations

- Browser engines (Blink, Gecko, WebKit) for rendering consistency

- Browser versions to validate newer API support

- Mobile devices across screen sizes and resolutions

- Touch interactions versus mouse events for input handling

- Device orientation changes for responsive layout behavior

Network & Infrastructure Configurations

- Bandwidth levels to test load times and streaming quality

- Latency conditions for real-time feature performance

- Firewalls that block specific ports or protocols

- Proxies that intercept and modify requests

- VPNs for geographic routing and IP address variations

- Cloud regions to verify infrastructure consistency

Types of Configuration Testing (with Real Examples)

Configuration testing comes in several types, each targeting different setups and components. Here are the main ones:

1. Single Configuration Testing

Tests your application in one specific environment to establish baseline functionality before expanding coverage.

Example: Your web app targets Chrome users on Windows 10 primarily. You validate all features on Chrome 120, Windows 10 Pro, with 8GB RAM and standard network conditions. This baseline confirms everything works before testing edge cases.

2. Multi-Configuration Testing

Tests your application across multiple device, OS, browser, and network combinations at the same time using a test matrix.

Example: An e-commerce checkout must work across Chrome, Firefox, Safari, and Edge on Windows and macOS. So, you build a matrix covering Chrome on Windows 10, Chrome on macOS, Firefox on Windows 11, Safari on macOS, and Edge on Windows 10. Each combination runs through the complete checkout flow.
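A test matrix like this one can be generated rather than typed by hand. The sketch below is a minimal illustration, not a prescribed method: the names `BROWSERS`, `OPERATING_SYSTEMS`, `UNSUPPORTED`, and `build_matrix` are made up for this example, and the exclusion list assumes Safari is only tested on macOS.

```python
from itertools import product

BROWSERS = ["Chrome", "Firefox", "Safari", "Edge"]
OPERATING_SYSTEMS = ["Windows", "macOS"]

# Pairs to leave out of the matrix (assumption for this sketch:
# Safari is only tested on Apple platforms).
UNSUPPORTED = {("Safari", "Windows")}


def build_matrix(browsers, operating_systems, unsupported):
    """Return every browser/OS pair except the ones marked unsupported."""
    return [
        (browser, os_name)
        for browser, os_name in product(browsers, operating_systems)
        if (browser, os_name) not in unsupported
    ]


matrix = build_matrix(BROWSERS, OPERATING_SYSTEMS, UNSUPPORTED)
for browser, os_name in matrix:
    # Each pair would run the complete checkout flow.
    print(f"checkout flow -> {browser} on {os_name}")
```

Generating the full cross product first and then trimming it makes the exclusions explicit, so nobody has to wonder whether a missing combination was skipped on purpose.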

3. Compatibility-Focused Configuration Testing

Verifies that all officially supported environments deliver consistent behavior without breaking core functionality.

Example: Your mobile app supports iOS 15 through iOS 18 and Android 11 through Android 14. You check login, navigation, transactions, and biometric authentication on every supported OS version.

4. Network Configuration Testing

Validates application performance under different network infrastructure setups including proxies, firewalls, bandwidth limits, and connection types.

Example: Your video conferencing app needs to work behind corporate firewalls. You test with restrictive firewall rules blocking ports, proxy servers routing traffic, VPN connections adding latency, and throttled bandwidth simulating poor connections. This shows whether calls drop or video degrades gracefully.

5. Security Configuration Testing

Checks how your application handles different security settings including SSL versions, cipher suites, security headers, and certificate configurations.

Example: Your payment system must enforce TLS 1.2 or higher. Test connections using deprecated SSL 3.0 and TLS 1.0 to verify proper rejection. Plus, validate approved cipher suites work while weak ones fail, and confirm security headers like HSTS and CSP appear correctly across all environments.
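The header part of that check is easy to automate once you have response headers from each environment. Here is a minimal sketch that works on plain dictionaries; how you collect the headers (HTTP client, proxy capture, test framework) is up to you, and the names `REQUIRED_HEADERS`, `missing_security_headers`, and `audit_environments` are hypothetical.

```python
# Header names from the example above: HSTS and CSP.
REQUIRED_HEADERS = ("Strict-Transport-Security", "Content-Security-Policy")


def missing_security_headers(response_headers, required=REQUIRED_HEADERS):
    """Return the required headers absent from one response.

    HTTP header names are case-insensitive, so compare in lowercase.
    """
    present = {name.lower() for name in response_headers}
    return [name for name in required if name.lower() not in present]


def audit_environments(headers_by_environment):
    """Map each environment that has gaps to its list of missing headers."""
    report = {}
    for environment, headers in headers_by_environment.items():
        missing = missing_security_headers(headers)
        if missing:
            report[environment] = missing
    return report


# Illustrative data only; real values would come from live responses.
captured = {
    "staging": {
        "strict-transport-security": "max-age=31536000",
        "content-security-policy": "default-src 'self'",
    },
    "eu-region": {"Strict-Transport-Security": "max-age=31536000"},
}
print(audit_environments(captured))
```

This only confirms the headers are present. Checking that their values are strict enough, and that deprecated protocol versions are rejected, needs separate tests against a live endpoint.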

Configuration Testing Vs Compatibility Testing Vs Cross-Browser Testing

| Aspect | Configuration Testing | Compatibility Testing | Cross-Browser Testing |
| --- | --- | --- | --- |
| Primary Focus | Software behavior across hardware, OS, network, and environment setups | Software integration with different systems, devices, and third-party tools | Consistent behavior across browsers and versions |
| Scope | Infrastructure, hardware, network, application configs | Backward/forward compatibility, hardware/software integration | Browser engines and versions only |
| What It Tests | RAM, OS versions, network conditions, feature flags, database configs | Database types, external APIs, file formats, device integrations | Rendering engines, JavaScript, CSS, browser APIs |
| Example | Web app on Windows 10, 8GB RAM, behind corporate firewall | App working with MySQL and PostgreSQL, or Salesforce integration | Login form on Chrome, Firefox, Safari, Edge |
| When to Use | Before deploying to diverse production environments | Supporting multiple platforms or external integrations | Any browser-accessed web application |

Step-by-step Framework to Run Configuration Testing

Here’s how to implement configuration testing without drowning in endless test combinations.

Step 1: Identify User Environment Matrix

Start by understanding what configurations your actual users run:

- Check analytics tools for browser types, OS versions, and device categories accessing your product

- Review user agent strings in server logs to see exact browser and OS combinations

- Analyze customer segment data to identify patterns like enterprise users on legacy browsers

- Examine screen resolution data to understand device types and display sizes

- Monitor network type distribution (mobile, broadband, corporate) from connection logs

- Track error patterns tied to specific configurations in your error monitoring tools

- Survey high-value customers directly about their preferred environments
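If your analytics tool doesn’t break traffic down the way you need, server logs can fill the gap. The sketch below tallies browser/OS pairs from raw user agent strings. Treat it as a rough illustration: the regular expressions are deliberately simplistic, the names `BROWSER_PATTERNS`, `OS_PATTERNS`, `classify`, and `count_configurations` are made up for this example, and production-grade parsing is usually handed to a dedicated user agent library.

```python
import re
from collections import Counter

# Order matters: Edge and Chrome user agents both contain "Chrome/",
# and Chrome user agents also contain "Safari/".
BROWSER_PATTERNS = [
    ("Edge", re.compile(r"Edg/")),
    ("Chrome", re.compile(r"Chrome/")),
    ("Firefox", re.compile(r"Firefox/")),
    ("Safari", re.compile(r"Safari/")),
]
# Android strings contain "Linux" and iOS strings contain "Mac OS X",
# so the more specific patterns come first.
OS_PATTERNS = [
    ("Windows", re.compile(r"Windows NT")),
    ("Android", re.compile(r"Android")),
    ("iOS", re.compile(r"iPhone|iPad")),
    ("macOS", re.compile(r"Mac OS X")),
    ("Linux", re.compile(r"Linux")),
]


def classify(user_agent, patterns):
    """Return the label of the first matching pattern, or "Other"."""
    for label, pattern in patterns:
        if pattern.search(user_agent):
            return label
    return "Other"


def count_configurations(user_agents):
    """Count how often each (browser, OS) pair appears."""
    counts = Counter()
    for ua in user_agents:
        key = (classify(ua, BROWSER_PATTERNS), classify(ua, OS_PATTERNS))
        counts[key] += 1
    return counts


sample_log = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36",
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/120.0.0.0 Safari/537.36",
    "Mozilla/5.0 (X11; Linux x86_64; rv:115.0) Gecko/20100101 Firefox/115.0",
    "Mozilla/5.0 (iPad; CPU OS 15_0 like Mac OS X) AppleWebKit/605.1.15 (KHTML, like Gecko) Version/15.0 Mobile/15E148 Safari/604.1",
]
for config, hits in count_configurations(sample_log).most_common():
    print(config, hits)
```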

Step 2: Select Critical Configurations & Create a Coverage Matrix

You can’t test everything. Use these four factors to decide what configurations to test:

- Usage percentage: Prioritize configurations representing the majority of your traffic. If 70% of users access your app via Chrome on Windows, that configuration demands thorough testing.

- Business impact: Some user segments generate more revenue than others. Test configurations used by enterprise customers and premium subscribers before free-tier environments.

- Failure history: Review past bug reports and support tickets to identify configurations that consistently surface issues. These environments need regular testing to prevent repeat failures.

- Device/browser fragmentation: When users spread across many variations, select representative samples from each major category. Think of key browser engines and popular device models instead of testing every single version.
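One way to turn these factors into a ranking is a simple weighted score. The sketch below covers the first three factors; fragmentation is handled beforehand by scoring one representative per browser engine or device family. The weights, the failure cap, and every name here (`ConfigStats`, `WEIGHTS`, `priority_score`, `rank`) are assumptions for illustration, so tune them to your own data.

```python
from dataclasses import dataclass


@dataclass
class ConfigStats:
    name: str
    usage_share: float    # fraction of traffic, 0.0 to 1.0
    revenue_share: float  # fraction of revenue, 0.0 to 1.0
    past_failures: int    # configuration-specific bugs on record


# Hypothetical weights; they only need to reflect your priorities.
WEIGHTS = {"usage": 0.5, "revenue": 0.3, "failures": 0.2}
FAILURE_CAP = 10  # failures beyond this add no extra weight


def priority_score(stats):
    """Combine usage, business impact, and failure history into one number."""
    failure_factor = min(stats.past_failures, FAILURE_CAP) / FAILURE_CAP
    return (
        WEIGHTS["usage"] * stats.usage_share
        + WEIGHTS["revenue"] * stats.revenue_share
        + WEIGHTS["failures"] * failure_factor
    )


def rank(configs):
    """Highest-priority configurations first."""
    return sorted(configs, key=priority_score, reverse=True)


candidates = [
    ConfigStats("Firefox / Linux", 0.05, 0.02, 0),
    ConfigStats("Chrome / Windows", 0.70, 0.50, 1),
    ConfigStats("Safari / macOS", 0.10, 0.30, 4),
]
for stats in rank(candidates):
    print(f"{stats.name}: {priority_score(stats):.3f}")
```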

Step 3: Create High-Value Test Scenarios

Identify test scenarios that cover authentication flows, core transactions, and data-heavy features. These represent critical user journeys where configuration differences cause the most impact.

Create a configuration matrix template documenting which scenarios run on which configurations. It should include columns for test case name, priority level, target configurations, expected behavior, and actual results.
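As a starting point, the template can be a plain CSV file that opens in any spreadsheet tool. This is a minimal sketch using the five columns named above; the column identifiers, the `render_matrix` helper, and the sample rows are all illustrative.

```python
import csv
import io

COLUMNS = [
    "test_case",
    "priority",
    "target_configurations",
    "expected_behavior",
    "actual_result",
]


def render_matrix(rows):
    """Serialize scenario rows to CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Sample rows; actual_result stays empty until the test has run.
scenarios = [
    {
        "test_case": "Login with valid credentials",
        "priority": "P1",
        "target_configurations": "Chrome/Windows 10; Safari/macOS",
        "expected_behavior": "User lands on dashboard",
        "actual_result": "",
    },
    {
        "test_case": "Checkout with saved card",
        "priority": "P1",
        "target_configurations": "Chrome/Windows 10; Firefox/Windows 11",
        "expected_behavior": "Order confirmation is shown",
        "actual_result": "",
    },
]
print(render_matrix(scenarios))
```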

Step 4: Execute Tests (Manual + Automated)

A manual testing approach works well for exploratory scenarios and visual validation. Testers physically use different devices to catch layout issues, touch interaction problems, and rendering inconsistencies that screenshots miss.

Automation handles repetitive configuration checks efficiently. You can run tests simultaneously across multiple browser and device combinations easily with tools like Testsigma without any coding knowledge.

The best approach is to combine both strategically. Automate high-frequency regression tests that verify core functionality across configurations while using manual testing for new features, visual design validation, and user experience edge cases.

Step 5: Log, Analyze & Prioritize Failures

Document every configuration-specific failure with complete environment details. Note down the exact browser version, operating system, device model, screen resolution, and network conditions when the issue occurred.

Some of the common configuration failures to look for:

CSS Rendering Differences

- Flexbox layouts breaking on older Safari versions

- Z-index stacking inconsistencies across browsers

- Custom fonts failing on specific Android devices

JavaScript Compatibility Issues

- ES6 features crashing without polyfills

- Chrome API methods missing in Firefox

- Touch events differing from mouse events

Network-Dependent Failures

- Request timeouts on slow connections

- File upload failures behind firewalls

- WebSocket drops with proxy interception

Resolution-Specific Problems

- Buttons disappearing on small screens

- Modal overflow on tablets

- Blurry images on high DPI displays

Step 6: Integrate into CI/CD

Add configuration tests to your deployment pipeline so that they run on every pull request or before each release and catch compatibility issues early.

Next, set up parallel execution to get fast feedback and define pass/fail thresholds based on priority. Your critical configurations require zero failures whereas lower-priority setups can allow known issues that don’t block deployment.
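A priority-based gate could look something like the sketch below. It reads the rule as follows: any failure on a critical configuration blocks the release, while lower-priority configurations block only on failures that are not already tracked as known issues. That reading, the result format, and the `release_blockers` name are assumptions for this example, not the behavior of any specific CI product.

```python
def release_blockers(results, known_issues):
    """Return (config, failing tests) pairs that should stop a release.

    results: list of dicts with "config", "priority", and "failed_tests".
    known_issues: set of test names accepted on non-critical configs.
    """
    blockers = []
    for result in results:
        failed = set(result["failed_tests"])
        if result["priority"] != "critical":
            # Tolerate failures that are already tracked as known issues.
            failed -= known_issues
        if failed:
            blockers.append((result["config"], sorted(failed)))
    return blockers


# Illustrative pipeline output.
run_results = [
    {"config": "Chrome / Windows 10", "priority": "critical", "failed_tests": []},
    {"config": "Firefox / Linux", "priority": "low", "failed_tests": ["tooltip_alignment"]},
    {"config": "Safari / macOS", "priority": "critical", "failed_tests": ["checkout_submit"]},
]
tracked = {"tooltip_alignment"}

blockers = release_blockers(run_results, tracked)
for config, tests in blockers:
    print(f"BLOCKED by {config}: {', '.join(tests)}")
print("exit code:", 1 if blockers else 0)
```

In a real pipeline, the final line would become the process exit status so the CI job fails when the list is not empty.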

Finally, monitor results over time to identify problem environments. Use this data to refine your test matrix and catch issues earlier.

Configuration Testing Challenges & How to Overcome Them

Testing across different configurations can create chaos pretty quickly. Here are the biggest problems you’ll face and how to handle them.

1. Exponential Growth of Test Combinations

When you start testing every possible configuration, you end up with thousands of test scenarios. The number of combinations grows faster than any team can realistically manage.

Solution: Use pairwise testing to cover every interaction between two parameters at least once. Depending on how many parameters and values you have, this approach can reduce your test cases by 70-90% while still catching most configuration-related bugs.
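To make the idea concrete, here is a small greedy pairwise generator. It is a sketch for small parameter spaces only: it enumerates every full combination and repeatedly picks the one that covers the most still-uncovered value pairs, so it does not guarantee the smallest possible suite and will not scale to large spaces. It expects at least two parameters. Dedicated pairwise tools are more efficient; the names `pairs_of` and `pairwise_suite` and the sample parameters are made up for this example.

```python
from itertools import combinations, product


def pairs_of(row, names):
    """All two-parameter value pairs that one full configuration covers."""
    return {((a, row[a]), (b, row[b])) for a, b in combinations(names, 2)}


def pairwise_suite(parameters):
    """Greedily choose configurations until every value pair is covered."""
    names = list(parameters)
    candidates = [
        dict(zip(names, values)) for values in product(*parameters.values())
    ]
    uncovered = set().union(*(pairs_of(c, names) for c in candidates))
    suite = []
    while uncovered:
        # Pick the candidate that covers the most uncovered pairs.
        best = max(candidates, key=lambda c: len(pairs_of(c, names) & uncovered))
        suite.append(best)
        uncovered -= pairs_of(best, names)
    return suite


parameters = {
    "browser": ["Chrome", "Firefox", "Safari", "Edge"],
    "os": ["Windows", "macOS", "Linux"],
    "network": ["fiber", "4G", "corporate VPN"],
    "screen": ["desktop", "tablet"],
}
suite = pairwise_suite(parameters)
full_count = len(list(product(*parameters.values())))
print(f"{len(suite)} pairwise configurations instead of {full_count}")
```

The exhaustive matrix for these four parameters has 72 combinations. The pairwise suite needs at least 12, since every browser must meet every OS, and the greedy pass typically lands a little above that floor.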

2. Maintaining Test Environments

Keeping multiple environments synchronized takes constant work, and they drift out of sync over time. When this happens, your tests start failing for reasons unrelated to actual bugs.

Solution: Use containerization or virtualization to create environments that stay consistent and reproducible. Automate your environment provisioning process to eliminate manual setup errors.

3. Flaky Tests across Configurations

Tests that pass on one setup suddenly fail on another without any clear explanation. Tracking down the root cause eats up debugging time you don’t have.

Solution: Isolate environment-specific variables in your test data and keep them separate from test logic. Log complete configuration details with every test run so you can spot patterns in the failures.
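A minimal sketch of that idea: the target configuration lives in a data structure rather than in the test logic, and every run emits one structured record that includes both the target and the machine the test ran on. The record layout and the names `environment_snapshot`, `run_record`, and `TARGET` are assumptions for illustration; the `platform` calls are from the Python standard library.

```python
import json
import platform
from datetime import datetime, timezone


def environment_snapshot():
    """Describe the machine that is executing the tests."""
    return {
        "os": platform.system(),
        "os_release": platform.release(),
        "machine": platform.machine(),
        "python": platform.python_version(),
    }


def run_record(test_name, passed, target_config):
    """Build one JSON log line for a single test run."""
    return json.dumps(
        {
            "test": test_name,
            "passed": passed,
            "target_config": target_config,
            "runner": environment_snapshot(),
            "timestamp": datetime.now(timezone.utc).isoformat(),
        },
        sort_keys=True,
    )


# Environment-specific values are data, kept apart from the test logic.
TARGET = {
    "browser": "Firefox 115",
    "os": "Windows 11",
    "viewport": "768x1024",
    "network": "throttled 3G",
}
print(run_record("login_flow", False, TARGET))
```

With one JSON line per run, grouping failures by browser, OS, or network becomes a simple query rather than a manual hunt through console output.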

4. Resource Constraints

Running tests across dozens of configurations on cloud platforms or physical devices can drain your budget quickly.

Solution: Prioritize configurations based on actual user analytics from your production environment. Thoroughly test the configurations that together account for the top 80% of your user traffic and run spot checks on the rest.
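Finding that cut-off is a cumulative sum over your traffic data. The sketch below uses made-up session counts and a hypothetical `split_by_coverage` helper; it sorts configurations by traffic and keeps adding them to the thorough-testing list until the target share is reached.

```python
def split_by_coverage(session_counts, target_percent=80):
    """Split configurations into (thorough, spot_check) lists.

    Configurations are taken in descending traffic order until they
    account for at least target_percent of all sessions.
    """
    total = sum(session_counts.values())
    ranked = sorted(session_counts.items(), key=lambda item: item[1], reverse=True)
    thorough, spot_check, covered = [], [], 0
    for name, count in ranked:
        # Integer comparison avoids floating-point rounding at the threshold.
        if covered * 100 < target_percent * total:
            thorough.append(name)
            covered += count
        else:
            spot_check.append(name)
    return thorough, spot_check


# Illustrative numbers only.
sessions = {
    "Chrome / Windows": 5200,
    "Safari / iOS": 2100,
    "Chrome / Android": 1400,
    "Firefox / Windows": 700,
    "Edge / Windows": 400,
    "Safari / macOS": 200,
}
thorough, spot_check = split_by_coverage(sessions)
print("Test thoroughly:", thorough)
print("Spot check:", spot_check)
```

With these sample numbers, three configurations cover 87% of sessions, so the remaining three fall into the spot-check group.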

Tools to Effectively Run Configuration Testing

| Tool | Best For | Configuration Coverage | Key Strength | Limitation |
| --- | --- | --- | --- | --- |
| Testsigma | Teams wanting codeless automation across web, mobile, and API | 3000+ browser/device/OS combinations on real devices | Plain English test creation with AI-powered self-healing reduces maintenance | Newer platform with smaller community |
| BrowserStack | Live and automated testing on real browsers and devices | 3000+ browser/device combinations with real mobile devices | Instant access to real devices without infrastructure setup | Costs scale up quickly with parallel test execution |
| Selenium Grid | Teams with in-house infrastructure and coding expertise | Custom browser/OS grids you configure yourself | Free and fully customizable for specific configuration needs | Requires manual setup, maintenance, and infrastructure management |
| Playwright + Cloud Farms | Modern web testing with multi-browser automation | Chromium, Firefox, WebKit plus mobile via cloud integrations | Fast execution with built-in wait mechanisms and parallel testing | Requires coding skills and separate cloud service for mobile testing |
| AWS Device Farm | Mobile-first testing integrated with AWS infrastructure | Real Android and iOS devices in AWS cloud | Pay-per-use pricing and seamless AWS ecosystem integration | Limited to mobile testing, less browser configuration support |

Scale Your Configuration Testing with Testsigma

Testing configurations manually doesn’t work when you need to support dozens of browsers and devices. You can’t realistically test every browser version, device type, and operating system combination your users rely on without automation backing you up.

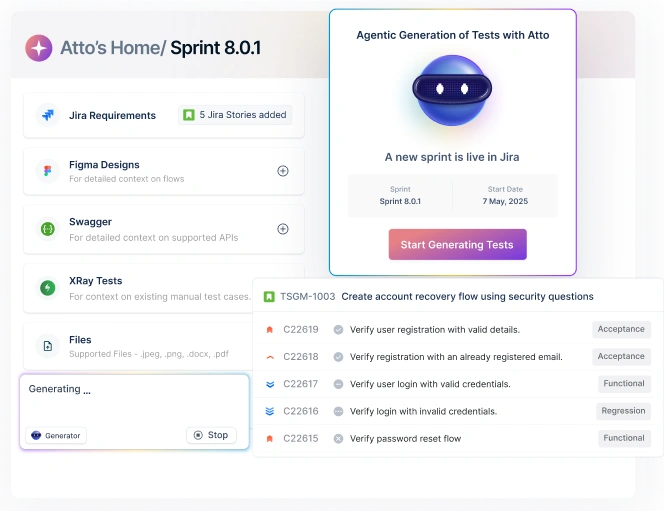

Testsigma’s low-code automation platform makes this manageable by letting you create tests in plain English and run them across 3000+ real browsers and devices.

You don’t need to manage complex infrastructure to get comprehensive coverage. The platform’s AI-powered self-healing adapts when configurations change, so you spend less time fixing broken tests and more time catching actual bugs.

Whether you’re testing web apps, mobile applications, or APIs, you get consistent results across every environment that matters to your users.

FAQs

Teams should prioritize configurations used by the largest user base and those prone to defects or compatibility problems.

Configuration testing typically finds issues related to UI rendering, dependency mismatches, permission handling, and environment-specific failures.

In cloud environments, it focuses on varying infrastructure settings like browsers, regions, permissions, and scalability conditions rather than fixed hardware.