Prompt Templates for Pro-level test cases

Get prompt-engineered templates that turn requirements into structured test cases, edge cases, and negatives fast every time.

Table Of Contents

- 1 Overview

- 2 What is Chatbot Testing?

- 3 Benefits of Chatbot Testing

- 4 8 Types of Chatbot Testing

- 5 Step-by-Step Process to Get Started With Chatbot Testing

- 5.1 Step 1: Define Conversational Goals & User Personas

- 5.2 Step 2: Map Intents & Story Flow

- 5.3 Step 3: Create Test Data Variants (Misspellings, Slang, Edge Cases)

- 5.4 Step 4: Manually Test Core Flows

- 5.5 Step 5: Automate Repetitive Tests

- 5.6 Step 6: Regression Testing After Model Updates

- 5.7 Step 7: Continuous Monitoring in Production

- 6 Common Challenges in Chatbot Testing and How to Handle Them?

- 7 Chatbot Testing for LLM-based Chatbots

- 8 Automation for Chatbot Testing

- 9 Compare Chatbot Testing Tools

- 10 Future of Chatbot Testing (AI Agents, Multi-Modal, Autonomous Testing)

- 11 Build High-Quality Chatbots Faster With Automated Testing

Overview

What is Chatbot testing?

Chatbot testing finds bugs in how bots understand messages and generate responses.

What are the types of chatbot testing?

- Functional testing

- Conversational flow testing

- NLP/NLU testing

- AI model quality testing

- User experience testing

- Performance and load testing

- Security and privacy testing

- Accessibility testing

How do you automate chatbot testing?

Automate intent validation, entity extraction, multilingual testing, regression checks after deployments, and API integrations to handle repetitive validation work efficiently.

Your chatbot just told a frustrated customer looking for account help to “contact support” for the third time in the same conversation. And that interaction gets screenshotted, shared on social media, and your automation investment becomes a PR problem.

Chatbot testing catches these failures before they become a major problem. It validates conversation flows, intent recognition, and response accuracy across everything from basic NLP to complex multi-turn dialogues.

Let’s see how chatbot testing actually works, including its different types, step-by-step process, and how to automate the whole process.

What is Chatbot Testing?

Chatbot testing checks whether your conversational AI understands user inputs correctly, responds accurately, and handles conversations without breaking. It validates intent recognition, dialogue flow, integration points, and edge cases that could harm user interactions.

Think of it like testing a customer service rep before they go get into work. You ask questions in different ways, throw curveballs, and see if they can answer helpfully or stay confused.

For example, if a user asks “I want to return my order,” your chatbot should recognize the return intent, ask for order details, and guide them through the process. Chatbot QA process helps verify that it does exactly that instead of responding with “I don’t understand” or sending them in circles.

Benefits of Chatbot Testing

Chatbots handle millions of customer interactions daily, from answering simple FAQs to processing transactions worth thousands of dollars.

Your users expect instant, accurate responses regardless of how they phrase their questions. If you fail to provide that, you lose not just users but also your revenue.

Here’s why proper testing matters in chatbot QA:

1. Protects Revenue and Conversions

Poor chatbot experiences drive customers away at critical decision points. When a bot fails during checkout or can’t answer product questions, users abandon their purchase and move to competitors who get it right.

2. Reduces Support Escalations

Broken conversation flows push users to contact human agents for issues bots should handle. This defeats the automation purpose and increases support costs instead of reducing them.

3. Maintains Brand Reputation

Users judge your entire brand based on bot interactions. A chatbot that misunderstands requests or provides wrong information damages trust that takes months to rebuild.

4. Handles Real-World Complexity

People don’t speak in neat, predictable patterns. They use slang, make typos, switch topics mid-conversation, and ask questions in unexpected ways. Testing takes these scenarios into account to create a bot that shares the correct response irrespective of the query.

5. Ensures Cross-Platform Consistency

Your chatbot might work perfectly on your website but fail on mobile apps or messaging platforms. Testing across channels catches platform-specific bugs that affect different user segments.

6. Meets Compliance Requirements

Chatbots collect sensitive user data during conversations, from payment details to health information. With testing, you can ensure your bot handles this data securely and meets regulations like GDPR, PCI-DSS, and SOC2 before auditors find violations.

8 Types of Chatbot Testing

A chatbot has different aspects that require different testing approaches. You can’t catch intent recognition failures with the same methods you use for load testing. Each testing type targets specific failure points that could break user experiences in different ways.

1. Functional Testing

Validates whether your chatbot performs its core functions correctly. This includes checking if it recognizes user intents, retrieves accurate information from databases, and executes actions like booking appointments or processing refunds. It’s your baseline check that the bot does what it’s built to do.

2. Conversational Flow Testing

Examines multi-turn dialogues where context matters across multiple exchanges. It ensures your bot remembers what users said earlier, handles topic switches smoothly, and maintains reasonable conversations. A bot that forgets the user mentioned their order number three messages ago creates frustrating loops.

3. NLP/NLU Testing

Tests how well your chatbot understands natural language variations. Users ask the same question dozens of different ways, and your bot needs to recognize them all. This testing verifies that “I need a refund,” “Can I get my money back?” and “How do I return this?” all trigger the correct intent.

4. AI Model Quality Testing (Llm-specific)

Evaluates the accuracy and reliability of large language models that powers your chatbot. It tests for hallucinations where the bot invents information, checking response relevance, and validating that model outputs align with your brand voice and policies.

5. User Experience (UX) Testing

Evaluates whether real users can accomplish tasks easily through your chatbot. UX testing focuses on conversation clarity, response helpfulness, and overall user satisfaction rather than pure technical functionality.

6. Performance and Load Testing

Measures how your chatbot handles high traffic volumes without slowing down or crashing. During product launches or promotional campaigns, conversation volumes spike dramatically. Performance testing ensures your bot stays responsive when hundreds of users message simultaneously.

7. Security and Privacy Testing

Identifies vulnerabilities in how your chatbot handles sensitive data and prevents malicious attacks. It tests for SQL injection attempts, unauthorized data access, and proper encryption of user information during conversations.

8. Accessibility Testing (wcag/voice Bots)

Ensures your chatbot works for users with disabilities across different interaction modes. This includes screen reader compatibility, keyboard navigation support, and proper handling of voice commands for voice-enabled bots.

Testing against WCAG standards helps you reach broader audiences while meeting legal requirements.

Step-by-step Process to Get Started with Chatbot Testing

Here’s a step-by-step process for thoroughly testing your chatbot, covering both planned scenarios and the unpredictable ways real users interact.

Step 1: Define Conversational Goals & User Personas

Start by identifying what your chatbot needs to accomplish and who will use it. A customer support bot has different requirements than a sales qualification bot or an HR assistant.

So, write down specific goals, such as “reduce support ticket volume by 40%” or “qualify leads before routing to sales.” Then create user personas that represent your actual audience. A tech-savvy millennial interacts differently from a baby boomer unfamiliar with chatbots.

Step 2: Map Intents & Story Flow

List every intent your chatbot should recognize and map how conversations should progress. An e-commerce bot might handle intents like order tracking, returns, product questions, and checkout assistance.

For each intent, list down the expected conversation flow:

- What information does the bot need to collect?

- In what order should it ask questions?

- What happens if users provide partial information?

- Where can users switch topics or go back?

- How does the conversation end successfully?

Step 3: Create Test DATA Variants (misspellings, Slang, Edge Cases)

Real users don’t write perfect requests. They make typos, use abbreviations, mix languages, and phrase things in unexpected ways. Your test data needs to reflect this reality.

For each intent, create variations that include:

- Common misspellings (“refund” vs “refumd” vs “refind”)

- Slang and informal language (“wanna return this” vs “I would like to initiate a return”)

- Different sentence structures (questions, commands, statements)

- Extreme inputs (very long messages, single words, special characters)

- Ambiguous requests that could map to multiple intents

Step 4: Manually Test Core Flows

Before automating anything, walk through your most critical conversation paths manually. This hands-on testing catches obvious issues and helps you understand how the bot actually behaves.

Test happy paths where everything goes right, then deliberately break things. Enter wrong information, skip required fields, switch topics mid-conversation, and see what happens. Remember to note any confusing responses, infinite loops, or crashes.

Pay special attention to error handling. Does the bot recover gracefully when it doesn’t understand something, or does it get stuck repeating “I didn’t get that?”

Step 5: Automate Repetitive Tests

Once your core flows work manually, automate tests that need frequent execution. This includes regression checks, intent recognition validation, and conversation flow verification across different channels.

Automated chatbot testing handles the repetitive work that would take hours manually. You can test hundreds of conversation variations overnight and review failures the next morning.

Use automation for stable workflows that rarely change and high-risk scenarios that could break with updates.

Step 6: Regression Testing after Model Updates

Every time you retrain your NLP model, add new intents, or update conversation logic, run regression tests to verify nothing broke.

Set up regression suites that run after each deployment. Compare results against baseline metrics to catch performance drops immediately. A 10% decrease in intent recognition accuracy might not seem significant until it affects thousands of daily conversations.

Step 7: Continuous Monitoring in Production

Testing doesn’t stop at deployment. Monitor live conversations to catch issues automated tests miss. Track metrics like containment rate, conversation completion rate, and user satisfaction scores.

Check unrecognized intents regularly to spot gaps in your training data. Look at conversations where users asked for human agents to understand what your bot couldn’t handle. Use these insights to improve both your test coverage and bot performance.

Common Challenges in Chatbot Testing and How to Handle Them?

Chatbot testing comes with many difficulties, such as conversations branching unpredictably, responses vary each time, and measuring success involves subjective judgment.

Here’s how to tackle the main obstacles:

1. Defining Expected Behavior for Open-Ended Responses

Challenge: LLM-powered chatbots generate unique responses each time. You can’t write tests expecting exact text matches when outputs vary with every interaction.

Solution: Test against quality criteria instead of exact text. Validate that responses contain required information and maintain appropriate tone. Use semantic similarity scoring to check if responses align with acceptable patterns.

2. Managing Test DATA Quality, Bias, and Toxicity

Challenge: Biased training data can cause bots to generate harmful or discriminatory responses if not tested properly.

Solution: Check your test datasets for demographic representation and language diversity. Run dedicated bias tests that probe for problematic outputs around sensitive topics. Use automated tools to flag harmful language patterns before deployment.

3. Scaling Test Coverage Efficiently

Challenge: Testing every possible conversation variation manually is impossible because the combinations grow too fast. Every new intent or language you add creates more gaps in your test coverage.

Solution: Use LLMs to automatically generate scenario variations from base test cases. These tools create hundreds of realistic conversation branches and phrasing alternatives quickly, letting you focus manual effort on critical flows.

4. Reproducing and Tracking Issues

Challenge: Users report issues without providing conversation history, making bugs hard to reproduce. Plus, frequent model updates makes tracking what changed between versions messy.

Solution: Implement logging that captures full conversation context with unique IDs. Treat conversation flows like code with version control that documents changes to intents and training data. This lets you replay exact sessions and track regression risks.

Chatbot Testing for LLM-Based Chatbots

LLM-powered chatbots behave differently than rule-based bots. They generate responses dynamically, creating new testing challenges around safety, consistency, and preventing misuse. As such, they require different kind of testing approach:

1. Prompt Testing

Validates whether your prompts consistently produce desired outputs across different user inputs. Even minor prompt tweaks can completely change how your bot responds, so test multiple variations before going live.

2. Guardrail Testing

Check that safety boundaries stop your bot from discussing banned topics or giving off-brand responses. Ask forbidden questions deliberately to confirm the bot refuses properly.

3. Safety Layer Validation

Verify content filters catch harmful outputs before users see them. Test edge cases where problematic content might slip through, and confirm filters work across languages without blocking normal conversations.

4. Jailbreak Attempts

Test if users can trick your bot into ignoring instructions or revealing sensitive data. Run adversarial tests using prompt injection or role-playing scenarios to find exploitable weaknesses.

Automation for Chatbot Testing

Manual chatbot testing works fine for initial validation, but it quickly becomes unsustainable. Running thousands of conversation variations across multiple channels takes weeks, and regression testing after every model update consumes a lot of resources.

Automation easily solves this problem by handling repetitive validation tasks continuously. It catches regressions immediately after deployments and frees your team to focus on exploratory testing and complex scenarios that actually need human judgment.

What to Automate in Chatbot Testing

- Intent Validation: Verify your bot recognizes user intents correctly across different phrasing patterns and input variations.

- Entity Extraction: Test whether your bot accurately extracts key information like dates, names, order numbers, and locations from user messages.

- Multilingual Testing: Execute identical test scenarios across all supported languages to ensure consistent translation quality and intent recognition.

- Regression Testing: Run full test suites after every deployment to verify existing functionality still works without breaking.

- API Integration Checks: Validate that backend connections to CRMs, payment systems, and databases respond correctly.

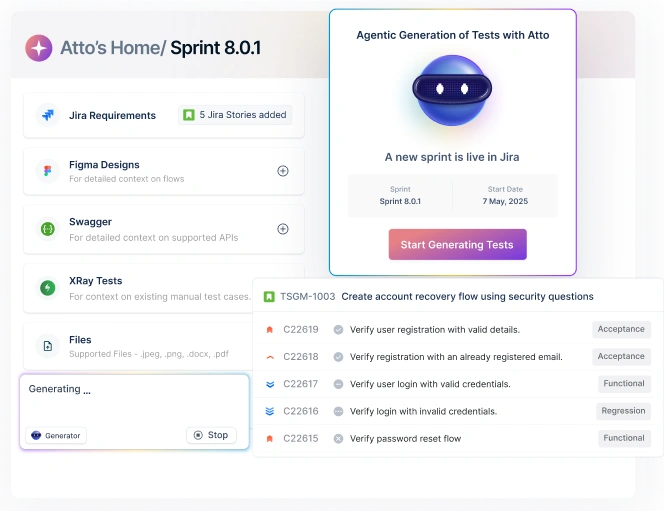

How Testsigma Automates Chatbot Testing

Testsigma no-code automation platform eliminates the need of coding, allowing QA teams to build comprehensive tests without depending on developers for script maintenance. It comes with the following benefits:

- NLP Test Inputs: Generate natural language variations automatically to test different phrasing patterns.

- Data-Driven Tests: Run identical flows with different datasets to test edge cases across hundreds of combinations.

- Reusable Test Steps: Build conversation components once and reuse them across test cases with automatic updates.

- Cloud Execution: Run tests in parallel across environments without maintaining infrastructure.

- Detailed Reports: Get clear visibility into failures with reports highlighting intent accuracy and response times.

Compare Chatbot Testing Tools

Choosing the right tool depends on your specific testing needs, team expertise, and chatbot architecture. Here’s how leading tools compare across key capabilities.

| Tool | Best for | NLP Support | LLM Testing | CI/CD Integration | Voice Testing | Error Logging |

| Testsigma | End-to-end automation with codeless approach | ✅ | ✅ | ✅ | ✅ | Detailed |

| BrowserStack | Cross-browser and device testing | Limited | ❌ | ✅ | ✅ | Basic |

| Functionize | AI-driven test maintenance | ✅ | ✅ | ✅ | Limited | Advanced |

| Botium | Chatbot-specific testing frameworks | ✅ | Limited | ✅ | ✅ | Basic |

| TestMyBot | Unit testing for chatbot logic | ✅ | ❌ | ✅ | ❌ | Basic |

Future of Chatbot Testing (ai Agents, Multi-Modal, Autonomous Testing)

Chatbots now book appointments, process refunds, and analyze images while maintaining conversations. Testing a Q&A bot is straightforward, but validating a bot that interprets a photo of a broken product and automatically processes a warranty claim requires entirely different strategies.

- AI Agent Testing: Bots now execute multi-step workflows autonomously. You need to test whether the bot correctly books a flight, updates your calendar, and sends confirmation emails – not just whether it understood “book me a flight to Boston.”

- Multi-Modal Testing: Users send voice messages with background noise, upload blurry product photos, or type while talking. Tests must verify the bot handles messy real-world inputs across formats, not just clean text.

- Autonomous Testing: Production conversations generate thousands of edge cases daily. Your testing systems need to automatically create tests from these patterns, catching failures your manual test cases never anticipated.

- Context-Aware Validation: Bots must recognize when users are frustrated and adjust tone accordingly. Testing needs to verify the emotional intelligence of the bot and not just whether it retrieved the right data.

Build High-Quality Chatbots Faster with Automated Testing

When users phrase the same question 100 different ways, your bot needs to understand them all. Testing that manually means writing thousands of test cases for every intent, then repeating the process after each model update. That’s quite a lot.

Automation can handle this repetitive heavy lifting. It can assess intent accuracy across thousands of input variations, run regression tests after deployments, and track conversation quality in production.With Testsigma, automate with minimal effort. It’s plain English testing lets you build tests easily, covering NLP validation, API integrations, and multi-channel conversations. AI-powered agents handle execution automatically, helping you test efficiently and ship reliable chatbots faster.