Table Of Contents

- 1 Overview

- 2 What is a Test Case?

- 3 Importance of Test Case

- 4 Types of Test Cases

- 5 Structure of a Test Case: Parameters and Template

- 6 How to Write Test Cases?

- 7 Example of a Test Case

- 8 Common Mistakes to Avoid

- 9 Manual vs Automated Test Cases

- 10 Best Tools for Creating and Managing Test Cases

- 11 Best Practices for Effective Test Cases

- 12 How to Write and Manage Test Cases Using Test Management by Testsigma?

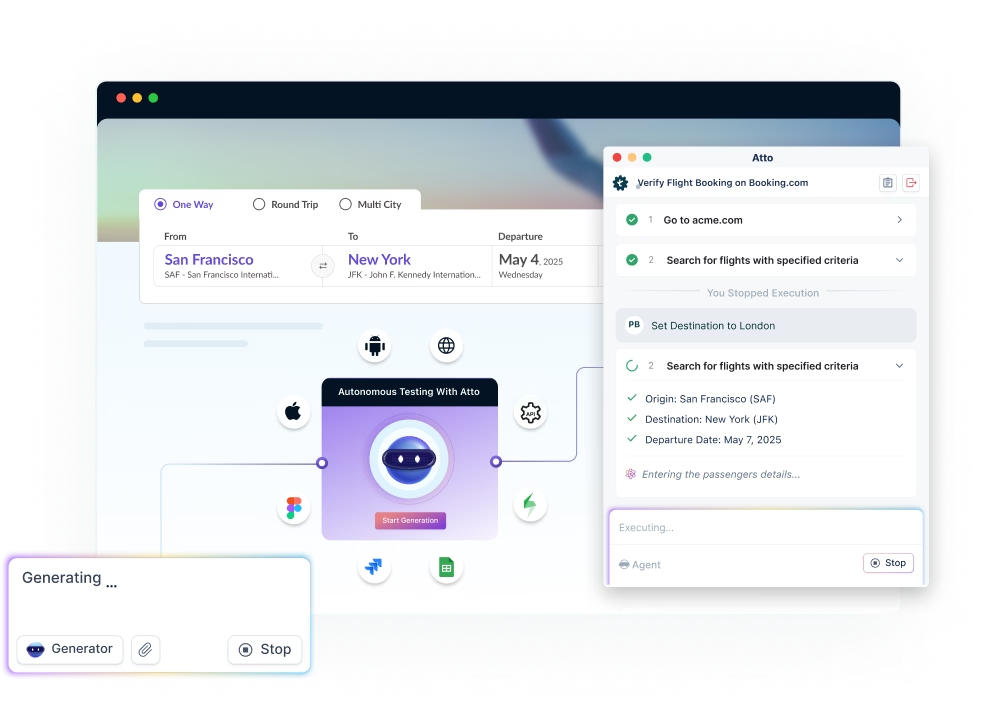

- 12.1 Steps to Create & Execute Test Cases with Atto & the Agents:

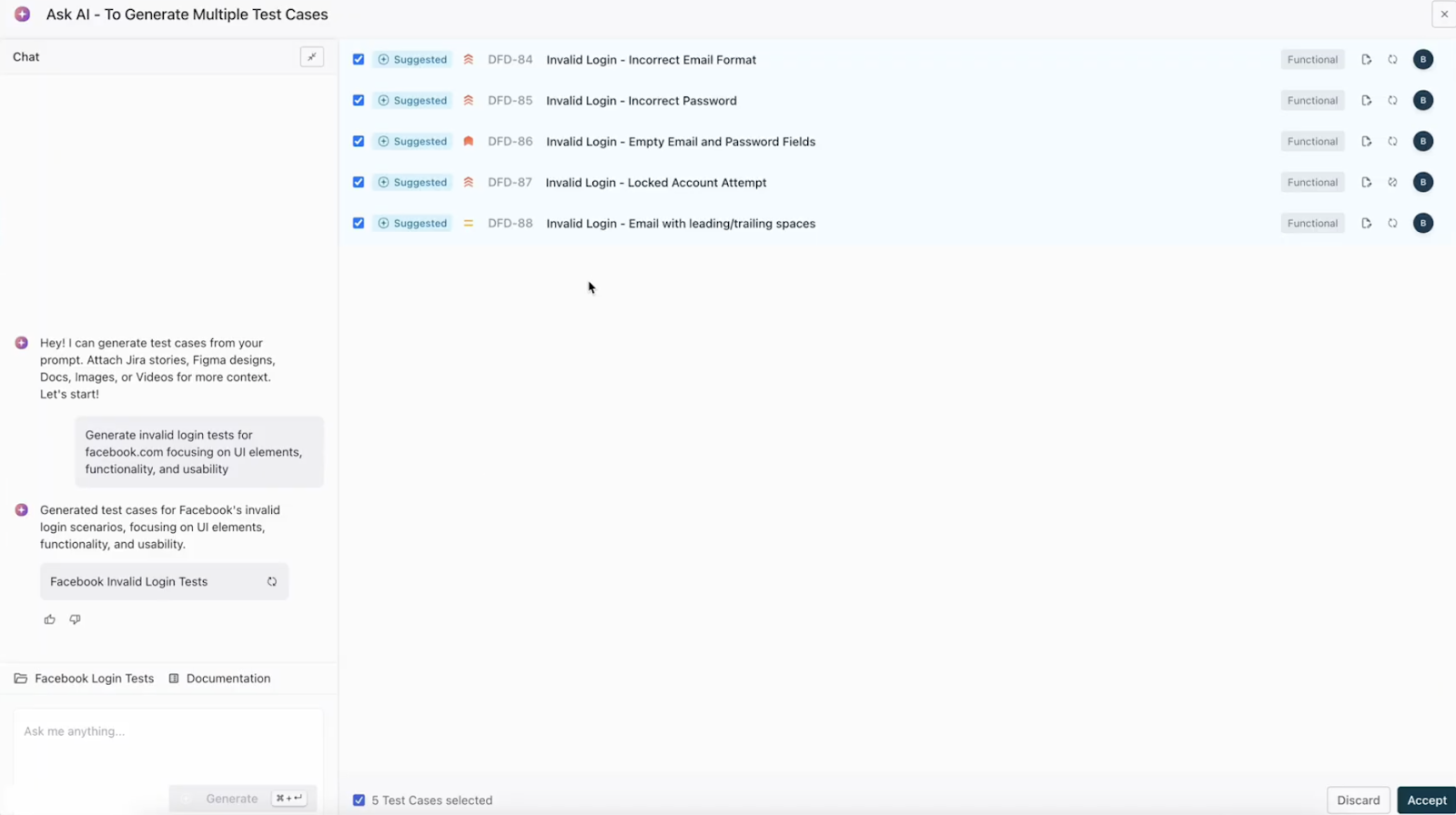

- 12.2 1. Generate Test Cases with AI

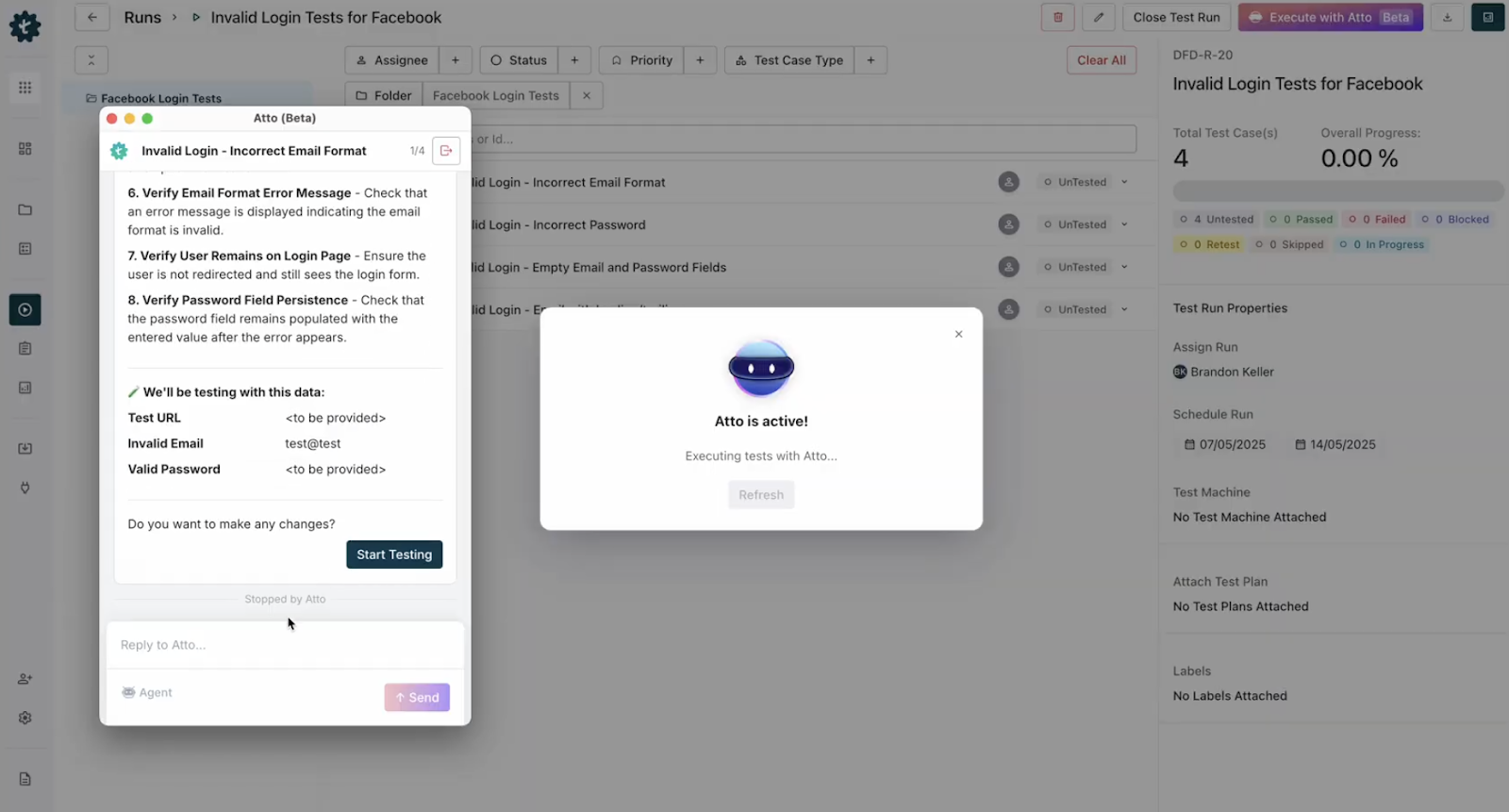

- 12.3 2. Create a Test Run

- 12.4 3. Execute with Agentic AI

- 12.5 4. Review the Execution Plan

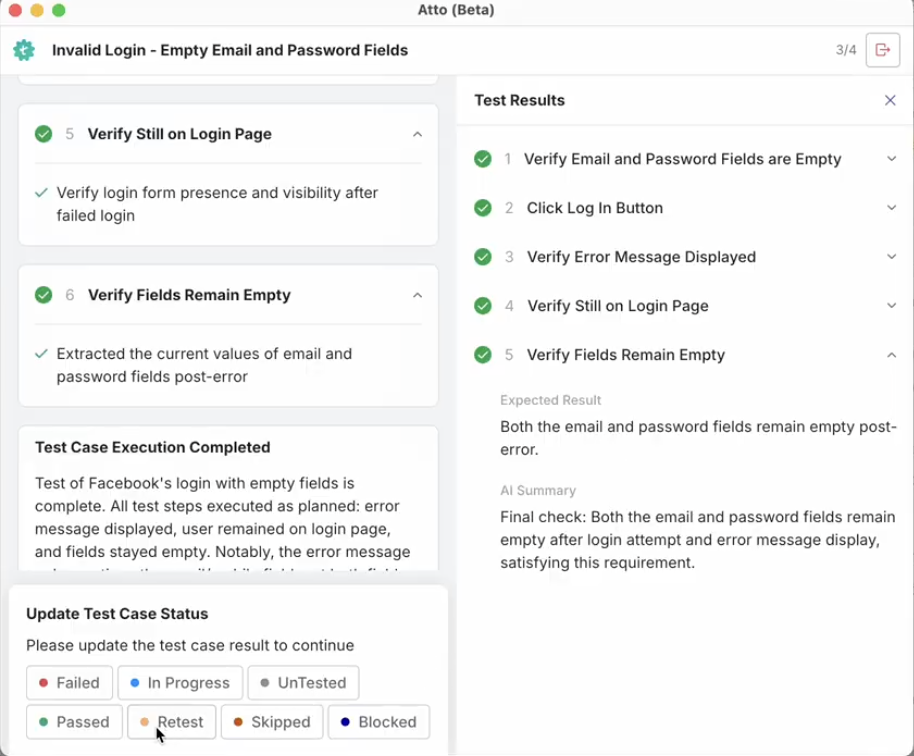

- 12.6 5. Review and Update Status

- 12.7 6. Continue Through the Test Run

- 12.8 7. View Detailed Results and Report Bugs

- 12.9 8. Complete the Test Run

- 13 Conclusion

- 14 FAQ

Overview

Definition of Test Case:

A test case is a set of clear steps, inputs, and expected results used to verify that a specific feature or behavior in software works correctly.

What Are the Different Types of Test Cases?

- Functionality test case: checks whether a feature works as expected.

- UI test case: validates layout, design, and visual elements.

- Performance test case: measures speed, stability, and responsiveness.

- Integration test case: checks how different modules work together.

- Usability test case: evaluates how easy and intuitive the system is for users.

- Database test case: verifies data storage, retrieval, and consistency.

- Security test case: checks vulnerabilities, access control, and data protection.

- User acceptance test case: ensures the software meets business expectations.

How to Write a Test Case?

Keep the test case simple, clear, and focused on one objective. Describe the steps, test data, and expected results in a way that any tester can follow. Add prerequisites, priorities, and environment details to make execution accurate and repeatable.

A test case is one of the simplest yet most effective tools for maintaining software quality. This blog explains what a test case is, why test cases matter in software testing, and covers their types, structure, examples, common mistakes, tools, and how to write better test cases.

What is a Test Case?

A test case is a clear set of steps, inputs, and expected results used to verify that a software feature works as intended. Test cases in software testing ensure each requirement is validated in a repeatable and trackable way. They help testers check functionality, confirm user flows, and prevent defects from reaching users.

Importance of Test Case

- Clear Validation of Requirements

A test case helps confirm that the software meets documented requirements and behaves as expected.

- Improved Test Coverage

A structured set of test cases helps ensure that every feature and scenario is covered by at least one test.

- Faster Defect Detection

Test cases guide testers to spot issues early by defining exact expected results.

- Better Collaboration

Any team member can understand the steps, test data, and outcomes, which improves communication.

- Ease of Reuse

Good test cases can be reused across sprints, releases, and regression cycles.

- Consistency in Software Testing

Test cases reduce errors caused by guesswork and maintain uniform testing across teams.

Types of Test Cases

1) Functionality Test Case

Functional test cases validate features against expected behavior. These test cases in software testing check what the system should do under specific conditions, using defined inputs and expected outputs.

Example: Test a login page by entering a correct username and password to confirm the user is redirected to the dashboard.

2) User Interface Test Case

UI test cases check visual consistency, layout, colors, spacing, alignment, and responsiveness. They ensure the interface looks clean and behaves predictably.

Example: Verify that the “Add to Cart” button on a product page is visible, clickable, and aligned with text on desktop and mobile.

3) Performance Test Case

Performance test cases measure speed, responsiveness, and stability under load. They check how the system performs with different user volumes or data sizes.

Example: Check if the homepage loads within 3 seconds when 1000 users access it at the same time.
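The performance example above can be sketched as a small script. This is only an illustration: `load_homepage` is a hypothetical stand-in that sleeps instead of calling a real site, and the thread pool caps how many requests actually run at once, so a real load test would normally use a dedicated load testing tool.

```python
import time
from concurrent.futures import ThreadPoolExecutor

MAX_LOAD_SECONDS = 3.0   # threshold taken from the example above
CONCURRENT_USERS = 1000  # user volume taken from the example above


def load_homepage() -> float:
    """Hypothetical stand-in for one user's request; returns elapsed seconds."""
    start = time.perf_counter()
    time.sleep(0.01)  # placeholder for the real HTTP call
    return time.perf_counter() - start


def run_load_check(users: int = CONCURRENT_USERS, workers: int = 50) -> list[float]:
    """Issue `users` requests through a thread pool and collect each load time."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        futures = [pool.submit(load_homepage) for _ in range(users)]
        return [future.result() for future in futures]


def slowest_load(timings: list[float]) -> float:
    """Return the worst load time, which is compared against the threshold."""
    return max(timings)
```

The test case passes when `slowest_load(run_load_check()) < MAX_LOAD_SECONDS`. Comparing the slowest request, instead of the average, keeps a few very slow page loads from being hidden by many fast ones.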

4) Integration Test Case

Integration test cases validate data flow and interactions between modules, APIs, or third-party services. They ensure the system works as a unified whole.

Example: Add an item to a shopping cart and verify that the cart module updates correctly and syncs with the checkout module.

5) Usability Test Case

Usability test cases focus on ease of use, clarity, navigation flow, and overall user experience.

Example: Verify that a first-time user can create an account within 2 minutes without guidance.

6) Database Test Case

These test cases ensure correct data storage, retrieval, modification, and deletion. They also validate constraints and data integrity.

Example: Check that a new user record is stored in the database after sign-up and confirm the correct values in each column.
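A minimal sketch of this database check, using Python's built-in `sqlite3` module with an in-memory database. The `users` table, its columns, and the `sign_up` function are assumptions made for illustration; a real application would have its own schema and sign-up code.

```python
import sqlite3


def make_test_db() -> sqlite3.Connection:
    """Create an in-memory database with a hypothetical users table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE users ("
        "id INTEGER PRIMARY KEY, "
        "username TEXT NOT NULL UNIQUE, "
        "email TEXT NOT NULL)"
    )
    return conn


def sign_up(conn: sqlite3.Connection, username: str, email: str) -> int:
    """Hypothetical sign-up routine: inserts one user and returns its row id."""
    cur = conn.execute(
        "INSERT INTO users (username, email) VALUES (?, ?)", (username, email)
    )
    conn.commit()
    return cur.lastrowid


def check_user_stored() -> tuple:
    """Sign up a test user, then read the stored column values back."""
    conn = make_test_db()
    user_id = sign_up(conn, "test_user", "test_user@example.com")
    row = conn.execute(
        "SELECT username, email FROM users WHERE id = ?", (user_id,)
    ).fetchone()
    conn.close()
    return row
```

The expected result is that `check_user_stored()` returns the same username and email that were submitted. The `UNIQUE` constraint also allows a negative check: signing up the same username twice should raise `sqlite3.IntegrityError`.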

7) Security Test Case

Security test cases detect vulnerabilities and ensure proper access control, authentication, and data protection.

Example: Attempt to access an admin page without login and verify that the system blocks access.

8) User Acceptance Test Case

UAT test cases validate that the software meets business needs and works for real user workflows.

Example: A customer browses a product, adds it to the cart, applies a coupon, pays, and receives confirmation. The entire flow should work smoothly.

Structure of a Test Case: Parameters and Template

A standard test case format generally includes:

- Test Case ID

- Test Scenario

- Test Steps

- Prerequisites

- Test Data

- Expected or Intended Results

- Actual Results

- Test Status (Pass or Fail)

While writing test cases, include:

- A simple description of the requirement

- A clear explanation of the test process

- Setup details like software version, data points, operating system, hardware, time, prerequisites

- Any supporting documents

- Alternative conditions, if applicable

Test case prioritization is important because running every test case in a large suite can be time-consuming. As features grow, it becomes harder to test everything during every release. Prioritizing test cases helps teams focus on the most critical areas first.
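The template fields and the prioritization idea can be sketched as a small data structure. The names `CaseRecord`, `Priority`, and `select_for_run` are illustrative assumptions, not part of any particular tool.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class Priority(IntEnum):
    HIGH = 1
    MEDIUM = 2
    LOW = 3


@dataclass
class CaseRecord:
    """One test case, mirroring the standard fields listed above."""
    case_id: str
    scenario: str
    steps: list[str]
    expected_result: str
    prerequisites: list[str] = field(default_factory=list)
    test_data: dict[str, str] = field(default_factory=dict)
    actual_result: str = ""
    status: str = "Not Run"  # set to "Pass" or "Fail" after execution
    priority: Priority = Priority.MEDIUM


def select_for_run(cases: list[CaseRecord], limit: int) -> list[CaseRecord]:
    """Return up to `limit` cases, highest priority first."""
    return sorted(cases, key=lambda case: case.priority)[:limit]
```

When a release leaves time for only part of the suite, `select_for_run(all_cases, limit=50)` would pick the high-priority cases first. Python's sort is stable, so cases with equal priority keep their original order.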

How to Write Test Cases?

- Understand the requirement: Read the user story, requirement document, or design specification until you can explain the feature in one sentence. A test case must map to a requirement.

- Identify test scenarios: Break the requirement into testable scenarios. Each scenario becomes one or more test cases. Example scenario: valid login, invalid login, password reset.

- Choose a clear test case ID and title: Use a consistent naming scheme, for example TC001_Login_Valid or AUTH-TC-001. The title should summarize the test case in plain language.

- Write the objective or test scenario: One short line that explains what the test case validates. Example: Verify that a registered user can log in successfully.

- List prerequisites: Note any setup needed before running the test case, such as user accounts, configuration, test data, or environment versions.

- Define test data: Specify exact data to use: username, password, product IDs, boundary values. Keep data reusable and documented.

- Write precise test steps: Number each step and use simple action verbs. Each step should be repeatable by anyone.

Example:

1. Open the login page.

2. Enter username.

3. Enter password.

4. Click Login.

- State the expected result for each step or for the test: Be explicit about what success looks like. Example: After clicking Login, user is redirected to the dashboard and sees the welcome message.

- Include postconditions or cleanup steps: If the test changes data, tell the tester how to reset the environment. Example: Delete the test user or log out.

- Assign priority and estimate: Mark test case as High, Medium, or Low priority and estimate execution time. Prioritization helps planning and regression selection.

- Add traceability information: Link the test case to requirement IDs, user stories, or tickets so you can track coverage.

- Review and peer check: Have another tester or product owner review the test case for clarity, completeness, and accuracy.

- Tag automation potential: Note whether the test case is suitable for automation and any special automation notes like stable locators or environment access.

- Store in the test management tool: Save the test case in your chosen tool or repository with version control and access permissions.

- Maintain and update: Update test cases when requirements or UI change. Mark obsolete cases and add new ones for new features.
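The traceability step in the list above can be checked with a few lines of code. This is a sketch under assumptions: the requirement IDs and test case IDs are made up for illustration, and a test management tool would usually provide this report itself.

```python
def uncovered_requirements(
    requirements: set[str], trace: dict[str, set[str]]
) -> list[str]:
    """Return requirement IDs that no test case is linked to, sorted for stable output.

    `trace` maps each test case ID to the requirement IDs it validates.
    """
    covered = set().union(*trace.values())
    return sorted(requirements - covered)


# Hypothetical requirement and test case IDs.
REQUIREMENTS = {"REQ-101", "REQ-102", "REQ-103"}
TRACE = {
    "AUTH-TC-001": {"REQ-101"},
    "AUTH-TC-002": {"REQ-101", "REQ-102"},
}
```

With this data, `uncovered_requirements(REQUIREMENTS, TRACE)` reports `REQ-103` as the only requirement without a linked test case, which is the gap the traceability step is meant to expose.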

Example of a Test Case

Test Case for Login Page

| Field | Details |
| --- | --- |
| Test Case ID | TC001 |
| Test Scenario | Validate successful login for a registered user |
| Test Steps | 1. Open the login page. 2. Enter a valid username. 3. Enter a valid password. 4. Click Login. |
| Prerequisites | User must have a registered account |
| Test Data | Valid username and password |
| Expected Results | User should be redirected to the dashboard |
| Actual Results | As expected |
| Status | Pass |
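If TC001 is marked as suitable for automation, it might look like the sketch below, written with Python's built-in `unittest` module. The `login` function, the registered account, and the returned page paths are hypothetical stand-ins for the real application under test.

```python
import unittest

# Hypothetical registered account, used as the test data for TC001.
REGISTERED_USERS = {"test_user": "S3cret!pass"}


def login(username: str, password: str) -> str:
    """Hypothetical system under test: returns the page the user lands on."""
    if REGISTERED_USERS.get(username) == password:
        return "/dashboard"
    return "/login?error=invalid_credentials"


class LoginTC001(unittest.TestCase):
    """TC001: validate successful login for a registered user."""

    def test_valid_login_redirects_to_dashboard(self):
        # Steps 1-4: submit a valid username and password.
        landing_page = login("test_user", "S3cret!pass")
        # Expected result: the user is redirected to the dashboard.
        self.assertEqual(landing_page, "/dashboard")
```

Saved in a file, this can be run with `python -m unittest`. The assertion plays the role of the Expected Results row, and the test runner records the Pass or Fail status.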

Common Mistakes to Avoid

- Writing test cases without expected results.

- Using vague or incomplete steps.

- Skipping prerequisites.

- Not updating test cases after product changes.

- Ignoring negative or edge case scenarios.
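To make the last point concrete, here is a sketch of negative and boundary cases for a password field. The length limits of 8 and 64 characters, the `is_valid_password` rule, and the test case IDs are assumptions chosen for illustration.

```python
MIN_LEN, MAX_LEN = 8, 64  # hypothetical password length limits


def is_valid_password(password: str) -> bool:
    """Hypothetical rule under test: length must be within the limits."""
    return MIN_LEN <= len(password) <= MAX_LEN


# Each entry: (test case ID, input, expected result).
BOUNDARY_CASES = [
    ("TC010_empty", "", False),
    ("TC011_below_min", "a" * 7, False),
    ("TC012_at_min", "a" * 8, True),
    ("TC013_at_max", "a" * 64, True),
    ("TC014_above_max", "a" * 65, False),
]


def run_boundary_cases() -> list[str]:
    """Return the IDs of cases whose actual result differs from the expected one."""
    return [
        case_id
        for case_id, value, expected in BOUNDARY_CASES
        if is_valid_password(value) != expected
    ]
```

An empty list from `run_boundary_cases()` means every case passed. Testing just below, at, and just above each limit catches off-by-one errors that a single valid input would miss.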

Manual vs Automated Test Cases

| Factor | Manual Test Cases | Automated Test Cases |
| --- | --- | --- |
| Execution Speed | Slow | Fast |
| Best For | Exploratory and usability tests | Regression, repetitive flows, data-driven tests |
| Accuracy | Can vary | Very high |
| Maintenance | Low effort | Needs updates as UI changes |
| Cost | Lower initial cost | Higher initial setup but lower long-term cost |
| Use Cases | One-time checks | Continuous testing and CI/CD pipelines |

Best Tools for Creating and Managing Test Cases

| Feature | Testsigma | Management | Xray | Zephyr |
| --- | --- | --- | --- | --- |
| Integrations | 2-way sync with Jira and wide integrations with CI/CD, automation frameworks, Slack, Git tools | Integrates with Jira, CI/CD pipelines, APIs, automation frameworks | Deep integration with Jira and CI tools, supports automation frameworks | Jira native integrations and supports integration with automation tools |
| Automation Support | Strong support with no-code automation, AI suggestions, auto-healing, test generation | Supports automation execution, CI/CD based automated runs | Supports automated tests like Selenium, JUnit, BDD, CI integrations | Supports automation with Selenium, JUnit, and CI tools |
| Reporting and Analytics | Advanced dashboards, coverage insights, trends, AI-powered insights | Clear dashboards, coverage metrics, trend analysis, real-time reporting | Strong coverage and traceability reports, customizable charts | Execution dashboards, progress reports inside Jira |
| User Experience | Simple UI, natural language editor, AI assistance, intuitive navigation | Clean web-based UI, modern layout, fast navigation | Jira-native UI, familiar to Jira users | Jira-native UI, traditional but functional |
| Traceability | End-to-end traceability across requirements, test cases, defects, and runs | Strong traceability between requirements, test cases, and defects | Very strong traceability within Jira across issues, requirements, defects | Traceability through Jira issue linking |
| Collaboration | Real-time collaboration, comments, shared workspaces, review workflows | Team dashboards, comments, notifications | Collaboration through Jira workflow, comments, assignments | Collaboration through Jira boards and issue workflows |
| Customization and Flexibility | Custom fields, templates, roles, flexible test case structures | Custom fields, reusable templates, flexible planning | Highly customizable via Jira workflows and fields | Uses Jira’s customization and field options |
| Scalability | Scales across large teams, automation-heavy projects, parallel runs | Designed for large-scale teams, supports heavy test loads | Scales based on Jira setup, suitable for mid to large teams | Scales with Jira instance, suited for growing teams |
| Centralized Asset Management | Unified repository for manual, exploratory, automated tests and data | Central test repository with shared assets | Centralized test assets as Jira issues | Centralized inside Jira test project assets |
| Role-Based Access Control | Custom roles, fine-grained permissions, project-based access | Role-based permissions for manager, editor, viewer | Controlled via Jira permission schemes | Uses Jira permission and role model |
| Agentic AI Support | Strong agentic AI with agents for test creation, sprint planning, execution, and bug reporting | AI for test case generation, test selection, cleanup | Limited AI, mostly none for agentic actions | No native agentic AI support |

Best Practices for Effective Test Cases

- Write simple steps that anyone can follow.

- Use real user scenarios whenever possible.

- Add clear expected results.

- Keep test data documented and reusable.

- Review and update test cases regularly.

How to Write and Manage Test Cases Using Test Management by Testsigma?

Test Management by Testsigma is a modern, agentic AI-driven test management platform that helps teams plan, track, and manage all their testing in one place. It unifies manual, automated, and exploratory testing and simplifies daily QA work with AI agents, including an AI coworker called Atto that assists with test creation, execution, and reporting. With clear dashboards, intuitive workflows, and two-way Jira integration, teams can see requirements, test runs, and defects in one view without jumping between tools. It is designed for fast-moving agile teams that want a smarter and more efficient way to handle testing.

Steps to Create & Execute Test Cases with Atto & the Agents:

1. Generate Test Cases with AI

- From the Testsigma Test Management dashboard, open the Test Cases section.

- Create a new folder to keep your work organized.

- Click Ask AI to Generate Tests and enter a simple prompt.

For example, ask the AI to generate test cases for Facebook login.

The system instantly produces detailed test cases based on your prompt.

You can also use generative AI to create test cases from Jira requirements, Figma designs, screenshots, images, or even video recordings. This lets your team start from whatever input it already has.

2. Create a Test Run

- Go to Test Runs and create a new run.

- Give it a clear name.

- Add the AI-generated test cases to the run so they are ready for execution.

3. Execute with Agentic AI

- Open the test run and start the Agentic AI execution by clicking Execute with Atto.

- Atto reads the test run and loads all test cases automatically.

- Click Start on any test you want to execute.

4. Review the Execution Plan

- Atto shows an execution plan for the selected test and lists any required test data.

- Add missing data if needed and click Send so it is added to the test steps.

- Click Start Testing to begin the automated execution.

Atto launches the application, identifies elements, and performs each test step.

You can watch the execution in real time as actions are completed.

5. Review and Update Status

After the test finishes, Atto displays a quick summary. You can update the test status immediately and move to the next test.

6. Continue through the Test Run

- Click Start next to another test and let Atto execute it.

- Repeat this until all test cases in the run are completed.

7. View Detailed Results and Report Bugs

- Click View Results to see full step-by-step evidence.

- Expand any step to check screenshots or logs.

- If you find a problem, report it directly to Jira from the results screen.

8. Complete the Test Run

- Exit when all tests are done.

Your run is now organized, executed, and documented with less effort.

Conclusion

Test cases are essential for delivering reliable, predictable, and user-ready software. Understanding what a test case is, applying strong test case design, and avoiding common mistakes help teams build high-quality products. If you want to create, organize, and automate test cases faster, try Test Management by Testsigma and streamline your testing in 2025.

FAQ

Test cases are reviewed through peer review workflows, QA lead checks, and version tracking in test management tools.

Test cases are stored in test management systems where teams can access, edit, and track them easily.

AI helps generate test cases automatically, suggest missing scenarios, auto-heal broken steps, and improve test coverage with less effort.