
Table Of Contents

- 1 Overview

- 2 What is Test Estimation?

- 3 The Role of Test Estimation in Cost, Quality, and Timelines

- 4 Understanding Test Effort Estimation Through 5 Key Factors

- 5 7 Most Effective Test Estimation Techniques Used by QA Teams

- 6 How to Choose the Right Estimation Technique

- 7 Avoiding the Most Common Test Estimation Pitfalls

- 8 Test Estimation in Agile and Continuous Delivery

- 9 Best Practices for Accurate Software Test Estimation

- 10 Test Estimation Template

- 11 How Testsigma Can Streamline Your Test Estimation

- 12 Improving Test Estimation for Better Software Outcomes

- 13 Frequently Asked Questions

Overview

Software test estimation is the process of predicting the time, effort, and resources required to complete testing activities. Accurate estimates help QA teams plan realistically, manage risk, and maintain delivery confidence.

The seven key test estimation techniques are:

- Work Breakdown Structure (WBS)

- Three-Point Estimation (PERT)

- Function Point Analysis

- Wideband Delphi Method

- Use Case–Based Estimation

- Historical / Analogous Estimation

- Ad-Hoc and Experience-Based Estimation

Weak test estimation is a common reason deadlines slip, budgets overshoot, testers burn out, and defects leak into production.

In fast-moving Agile and DevOps environments, estimation is no longer a one-time planning activity. It’s a continuous decision-making process that directly impacts delivery confidence.

This guide breaks down software test estimation techniques in a decision-driven way, covering what estimation really means, why it matters, and how QA teams can estimate effort with clarity rather than guesswork.

What is Test Estimation?

Test estimation is the process of predicting the effort, time, resources, and cost required to complete testing activities for a software project. It forms a critical part of estimation in software engineering, influencing planning, staffing, release timelines, and risk management.

Unlike development estimation, which often focuses on features and code, test estimation accounts for variability: unclear requirements, environment dependencies, regression scope, and defect rework.

In Agile environments, estimation is iterative and adaptive. In traditional SDLC models, it is more upfront. However, in both cases, inaccurate estimates create real business consequences.

Underestimation leads to rushed testing and compromises in quality. Overestimation inflates budgets and erodes stakeholder trust. That’s why estimation techniques in software engineering must be context-aware, not formula-driven.

The Role of Test Estimation in Cost, Quality, and Timelines

Accurate estimation is not about predicting the future perfectly; it’s about reducing uncertainty to an acceptable level.

When done well, test estimation directly impacts:

- Budget forecasting: Prevents unplanned QA cost escalations

- Resource allocation: Ensures the right skill mix at the right time

- Delivery timelines: Aligns testing windows with release goals

- Product quality: Reduces defect leakage and production incidents

Commonly cited industry figures suggest that fixing a defect in production can cost 5-10x more than fixing it during testing. Poor estimates often compress testing cycles, which increases the chance that defects reach production.

In short, effort estimation techniques act as a quality safeguard.

Understanding Test Effort Estimation Through 5 Key Factors

No estimation model works in isolation. Effective software estimation techniques consider multiple variables:

- Project Scope and Complexity: Larger applications, complex integrations, third-party dependencies, and legacy systems significantly expand the testing scope and increase regression depth. They also introduce hidden risks and interdependencies that inflate overall testing effort and planning buffers.

- Types of Testing Required: Effort varies across manual, automated, functional, non-functional, performance, security, and accessibility testing. A manual testing estimation model can perform well for UI-heavy or exploratory work.

- Team Skill Levels and Structure: Experienced testers and automation engineers execute faster with fewer defects. Distributed or cross-functional teams introduce coordination overhead that must be reflected in effort estimates.

- Historical Data and Tooling: Reliable historical metrics, defect trends, and mature testing tools improve estimation accuracy, while missing data or unstable environments increase uncertainty and buffer requirements.

- Requirement Stability: Frequent requirement changes, evolving acceptance criteria, or late clarifications require contingency buffers. Estimation without change tolerance quickly becomes unrealistic and brittle.

7 Most Effective Test Estimation Techniques Used by QA Teams

Strong core test estimation techniques help QA teams move from assumptions to defensible, explainable effort projections.

A quick comparison table:

| Estimation Technique | Ideal Use Case | Complexity | Accuracy | Collaboration | Tooling |
| --- | --- | --- | --- | --- | --- |
| Work Breakdown Structure (WBS) | Well-defined projects with clear testing tasks | Medium | High | Low-medium | Basic planning tools, spreadsheets |
| Three-Point Estimation (PERT) | Projects with uncertainty or risk variability | Medium | Medium-high | Medium | Planning tools or calculators |
| Function Point Analysis | Large, complex enterprise applications | High | High | Low | Specialized estimation tools |
| Wideband Delphi | New domains or limited historical data | Medium | High | High | Facilitation tools, collaboration platforms |
| Use Case-Based Estimation | Agile projects with defined user journeys | Medium | Medium | Medium | Backlog or test management tools |
| Historical/Analogous Estimation | Repeat projects or mature products | Low | Medium-high | Low | Historical data repositories, dashboards |
| Ad-Hoc/Experience-Based | Small, low-risk tasks | Low | Low | Low | None |

1. Work Breakdown Structure (WBS)

WBS breaks testing into granular, estimable tasks: test design, environment setup, execution, defect retesting, regression, and reporting.

How it works:

Decompose testing activities into small units

↓

Estimate the effort for each task

↓

Aggregate totals with contingency buffers

Advantages:

- High transparency

- Easy stakeholder communication

Pitfalls:

- Time-consuming upfront

- Can miss unknown risks when the breakdown covers only known tasks in detail

WBS is often combined with other software development estimation techniques for accuracy.
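
The three WBS steps above can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed model: the task names mirror the activities listed earlier, while every hour figure and the 15% contingency are assumptions chosen for the example.

```python
# Hypothetical WBS for one release. Task names follow the activities named
# in the text; all hour values are illustrative assumptions.
wbs_hours = {
    "test design": 24.0,
    "environment setup": 8.0,
    "execution": 40.0,
    "defect retesting": 12.0,
    "regression": 16.0,
    "reporting": 4.0,
}


def wbs_total(tasks: dict[str, float], contingency: float = 0.15) -> float:
    """Sum task-level estimates and add a contingency buffer (assumed 15%)."""
    if contingency < 0:
        raise ValueError("contingency must be non-negative")
    base = sum(tasks.values())
    return base * (1 + contingency)


base_hours = sum(wbs_hours.values())
total_hours = wbs_total(wbs_hours)
print(f"Base: {base_hours:.1f} h, with buffer: {total_hours:.1f} h")
```

Keeping the buffer as an explicit parameter makes it easy to show stakeholders how much of the total is contingency rather than planned work.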

2. Three-Point Estimation (PERT)

This technique reduces optimism bias by estimating three values:

- Optimistic (O)

- Most likely (M)

- Pessimistic (P)

Formula:

(O + 4M + P) / 6

Example:

If test execution may take 6 days (O), usually takes 8 days (M), and could stretch to 14 days (P):

(6 + 4×8 + 14) / 6 = 8.7 days

PERT is widely used in effort estimation techniques where uncertainty is high.
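
The formula can be wrapped in a small helper, sketched below. The function name is hypothetical. The standard deviation `(P - O) / 6` is not part of the formula above; it is the conventional PERT companion measure, included here as an assumption about how a team might express the spread around the estimate.

```python
def pert_estimate(optimistic: float, most_likely: float, pessimistic: float):
    """Return (expected, std_dev) for a three-point estimate.

    expected uses the weighted average (O + 4M + P) / 6 from the text;
    std_dev uses the conventional PERT approximation (P - O) / 6.
    """
    if not optimistic <= most_likely <= pessimistic:
        raise ValueError("expected optimistic <= most_likely <= pessimistic")
    expected = (optimistic + 4 * most_likely + pessimistic) / 6
    std_dev = (pessimistic - optimistic) / 6
    return expected, std_dev


# Values from the worked example: O = 6, M = 8, P = 14 days.
expected, std_dev = pert_estimate(6, 8, 14)
print(f"Expected: {expected:.1f} days, spread: +/- {std_dev:.1f} days")
```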

3. Function Point Analysis

Best suited for large, complex systems, this method estimates based on system functionality rather than tasks.

Steps include:

- Identifying inputs, outputs, inquiries, files, and interfaces

- Assigning complexity weights

- Mapping function points to test effort

It aligns well with enterprise-scale estimation techniques in software engineering, though it requires trained estimators.

4. Wideband Delphi Method

A collaborative, expert-driven approach where multiple estimators independently estimate effort, then converge through discussion.

When to use it:

- New domains

- Limited historical data

- High business risk projects

Facilitation tips:

- Keep estimations anonymous initially

- Focus discussions on assumptions, not numbers

Wideband Delphi improves accuracy through collective intelligence, not consensus pressure.
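
One way a facilitator might track convergence between rounds is sketched below. The convergence rule (spread of estimates relative to the median, with a 20% tolerance) and the sample numbers are assumptions made for illustration; Wideband Delphi itself does not prescribe a specific threshold.

```python
from statistics import median


def round_summary(estimates: list[float]) -> dict[str, float]:
    """Summarize one anonymous round: median and relative spread."""
    mid = median(estimates)
    spread = (max(estimates) - min(estimates)) / mid
    return {"median": mid, "spread": spread}


def has_converged(estimates: list[float], tolerance: float = 0.2) -> bool:
    """Assumed stopping rule: spread within 20% of the median."""
    return round_summary(estimates)["spread"] <= tolerance


# Hypothetical estimates (in days) from four estimators over three rounds.
rounds = [
    [10, 18, 30, 14],
    [14, 18, 22, 16],
    [16, 18, 19, 17],
]

for number, estimates in enumerate(rounds, start=1):
    summary = round_summary(estimates)
    status = "converged" if has_converged(estimates) else "discuss assumptions"
    print(f"Round {number}: median {summary['median']}, "
          f"spread {summary['spread']:.0%} -> {status}")
```

Reporting the median rather than the mean keeps a single extreme estimate from pulling the group figure, which fits the goal of avoiding consensus pressure.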

5. Use Case-Based Estimation

This technique maps use cases or user journeys directly to the test effort.

Each use case is evaluated based on:

- Complexity

- Data variations

- Integration depth

It works well for Agile teams that need sprint-level estimates tied to test coverage goals, without over-engineering the process.
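
A simple scoring sketch for the three evaluation factors follows. The 1-to-3 scale, the equal weighting of factors, and the `BASE_HOURS_PER_POINT` calibration are all hypothetical; teams would tune them against their own sprint history.

```python
from dataclasses import dataclass

# Assumed calibration: hours of test effort per scoring point.
BASE_HOURS_PER_POINT = 2.0


@dataclass
class UseCase:
    name: str
    complexity: int         # 1 (low) to 3 (high)
    data_variations: int    # 1 (few) to 3 (many)
    integration_depth: int  # 1 (standalone) to 3 (deeply integrated)


def use_case_hours(use_case: UseCase) -> float:
    """Score a use case on the three factors and convert to hours."""
    points = (use_case.complexity
              + use_case.data_variations
              + use_case.integration_depth)
    return points * BASE_HOURS_PER_POINT


# Hypothetical sprint scope.
sprint = [
    UseCase("login", 1, 2, 1),
    UseCase("checkout", 3, 3, 3),
    UseCase("search", 2, 2, 1),
]

sprint_hours = sum(use_case_hours(uc) for uc in sprint)
print(f"Sprint test effort: {sprint_hours:.1f} h")
```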

6. Historical / Analogous Estimation

This technique estimates test effort by comparing the current project with similar past projects using validated historical data. Teams apply structured effort estimation templates and adjust for differences in scope, complexity, and risk.

It is one of the fastest software estimation techniques, but its accuracy depends on the relevance, consistency, and quality of historical data used.
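
The adjustment step can be illustrated with a small function. The multiplicative model and the factor names below are assumptions for this sketch, as are the sample figures; the point is only that each difference from the reference project is made explicit rather than folded into a single guess.

```python
def analogous_estimate(past_actual_hours: float,
                       scope_ratio: float,
                       complexity_factor: float = 1.0,
                       risk_factor: float = 1.0) -> float:
    """Scale a past project's actual test effort to the current project.

    scope_ratio: current scope / past scope (for example, 1.25 = 25% larger).
    complexity_factor, risk_factor: judgment-based multipliers, where 1.0
    means "same as the reference project".
    """
    for value in (past_actual_hours, scope_ratio, complexity_factor, risk_factor):
        if value <= 0:
            raise ValueError("all inputs must be positive")
    return past_actual_hours * scope_ratio * complexity_factor * risk_factor


# Hypothetical reference project: 400 actual test hours.
estimate = analogous_estimate(400, scope_ratio=1.25, complexity_factor=1.1)
print(f"Adjusted estimate: {estimate:.0f} h")
```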

7. Ad-Hoc and Experience-Based Estimation

This method estimates testing effort based on personal judgment and past experience rather than documented data, defined metrics, or formal estimation models.

While it may work for small, low-risk tasks, it lacks transparency and repeatability, and should not be used as a primary estimation method.

How to Choose the Right Estimation Technique

There’s no universal “best” method.

Use this quick checklist to decide which estimation approach fits your project best:

Early-stage or unclear requirements?

Use expert-based or Wideband Delphi methods to account for uncertainty.

Well-defined scope and stable requirements?

Choose Work Breakdown Structure or historical estimation for higher accuracy.

Limited historical data available?

Apply Three-Point Estimation (PERT) to balance optimism and risk.

Mature team with past project metrics?

Leverage historical or analogous estimation using standardized templates.

Agile or iterative delivery model?

Use relative estimation methods like story points or use case–based estimation.

High stakeholder visibility or reporting needs?

Prefer structured, explainable techniques that provide traceability.

Most mature teams blend multiple software development estimation techniques rather than relying on one.
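
The checklist can also be expressed as a lookup, which is handy if you want to embed it in a planning script. The flag names are hypothetical labels for the conditions above. The last checklist item (stakeholder visibility) is left out because it names a property of techniques rather than a specific technique.

```python
# Checklist conditions mapped to the techniques suggested in the text.
# Flag names are hypothetical labels introduced for this sketch.
CHECKLIST = [
    ("unclear_requirements", ["Expert-based estimation", "Wideband Delphi"]),
    ("stable_scope", ["Work Breakdown Structure", "Historical estimation"]),
    ("limited_history", ["Three-Point Estimation (PERT)"]),
    ("past_metrics", ["Historical estimation"]),
    ("agile_delivery", ["Story points", "Use case-based estimation"]),
]


def suggest_techniques(**conditions: bool) -> list[str]:
    """Return suggested techniques for the conditions set to True."""
    unknown = set(conditions) - {flag for flag, _ in CHECKLIST}
    if unknown:
        raise ValueError(f"unknown conditions: {sorted(unknown)}")
    suggestions: list[str] = []
    for flag, techniques in CHECKLIST:
        if conditions.get(flag, False):
            for technique in techniques:
                if technique not in suggestions:  # keep order, drop repeats
                    suggestions.append(technique)
    return suggestions


picks = suggest_techniques(stable_scope=True, past_metrics=True,
                           agile_delivery=True)
print(picks)
```

Because several conditions can hold at once, the function returns a list, which matches the observation that mature teams blend techniques.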

Avoiding the Most Common Test Estimation Pitfalls

Even well-structured estimation techniques can fail if common planning blind spots are ignored. Here are the most common ones:

- Ignoring regression testing and re-testing effort: Teams often estimate only initial test execution, overlooking regression cycles and defect re-testing, which grow significantly with each release and must be explicitly planned.

- Excluding non-functional testing: Performance, security, usability, and accessibility testing are frequently underestimated or skipped in estimates, leading to late-stage delays and unexpected quality risks.

- Failing to add buffers for change: Requirement changes, environment issues, and defect rework are inevitable. Estimates without contingency buffers quickly become unrealistic and pressure teams into cutting testing scope.

- Treating estimates as fixed commitments: When estimates are treated as promises rather than forecasts, teams lose flexibility. Estimates should evolve as the scope, risk, and requirements change.

Avoid these by revisiting estimates regularly and tracking estimates vs actuals.

Test Estimation in Agile and Continuous Delivery

Agile teams typically rely on lightweight, relative estimation approaches such as:

| Technique | What It Measures | Best Used When | Key Benefit |
| --- | --- | --- | --- |
| Story Points | Relative effort and complexity | Work is iterative, and team velocity is known | Encourages consistency over time-based guesses |
| T-Shirt Sizing | Rough size categories (XS-XL) | Early planning or high-level backlog grooming | Fast, low-friction estimation for large backlogs |
| Spikes | Time-boxed research for unknowns | Requirements, tools, or integrations are unclear | Reduces risk by replacing assumptions with learning |
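
To show how relative sizes turn into a forecast, here is a minimal sketch that converts T-shirt sizes to story points and divides by recent velocity. The size-to-points mapping, the backlog, and the velocity figures are all assumptions; each team calibrates its own scale.

```python
import math
from statistics import mean

# Hypothetical mapping from T-shirt sizes to story points.
SIZE_TO_POINTS = {"XS": 1, "S": 2, "M": 3, "L": 5, "XL": 8}


def backlog_points(sizes: list[str]) -> int:
    """Convert T-shirt sized testing items into total story points."""
    return sum(SIZE_TO_POINTS[size] for size in sizes)


def sprints_needed(points: int, recent_velocities: list[int]) -> int:
    """Forecast sprints from average velocity, rounded up to whole sprints."""
    velocity = mean(recent_velocities)
    if velocity <= 0:
        raise ValueError("velocity must be positive")
    return math.ceil(points / velocity)


# Hypothetical testing backlog and last three sprint velocities.
backlog = ["S", "M", "M", "L", "XL", "XS"]
total_points = backlog_points(backlog)
sprints = sprints_needed(total_points, recent_velocities=[10, 12, 11])
print(f"{total_points} points -> about {sprints} sprint(s)")
```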

Best Practices for Accurate Software Test Estimation

Combined with early tester involvement and automation, the following practices help teams move from optimistic guesses to defensible, data-driven software test estimates.

- Break testing work into small, clearly defined tasks to improve estimation accuracy and traceability.

- Include regression, re-testing, and environment setup explicitly in every estimate.

- Factor in team skill levels, onboarding time, and knowledge transfer when planning effort.

- Add risk-based buffers for requirement volatility, integrations, and third-party dependencies.

- Track estimates versus actuals consistently to refine future estimates using real data.

- Revalidate estimates after major scope, tooling, or release plan changes.

Test Estimation Template

A simple test estimation template helps teams plan effort clearly and improve accuracy over time. Each testing activity is logged with a few essential fields:

- Test type (functional, regression, automation, performance)

- Complexity level (low, medium, high)

- Estimated hours planned before execution

- Actual hours spent after completion
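
The four fields above map naturally onto a small record type. The sketch below is one possible shape for such a template, not the downloadable template itself: the class name, the sample rows, and the variance calculation are assumptions added to show how estimate-versus-actual tracking could work.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class EstimateRow:
    test_type: str          # functional, regression, automation, performance
    complexity: str         # low, medium, high
    estimated_hours: float  # planned before execution
    actual_hours: float     # recorded after completion


def variance_pct(row: EstimateRow) -> float:
    """Percentage by which actual effort exceeded (+) or undercut (-) the estimate."""
    return (row.actual_hours - row.estimated_hours) / row.estimated_hours * 100


# Hypothetical entries for one release.
rows = [
    EstimateRow("functional", "medium", 40.0, 46.0),
    EstimateRow("regression", "high", 24.0, 30.0),
    EstimateRow("automation", "medium", 32.0, 28.0),
    EstimateRow("performance", "low", 12.0, 12.0),
]

# Export the template as CSV so it can be opened in a spreadsheet.
buffer = io.StringIO()
writer = csv.DictWriter(buffer, fieldnames=[f.name for f in fields(EstimateRow)])
writer.writeheader()
writer.writerows(asdict(row) for row in rows)
csv_text = buffer.getvalue()

for row in rows:
    print(f"{row.test_type}: {variance_pct(row):+.1f}%")
```

Reviewing the per-type variance after each release shows where estimates drift most, which is the input for adjusting future buffers.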

Make estimation repeatable and defensible. Use our detailed test estimation template to bring structure, visibility, and learning into your QA planning process.

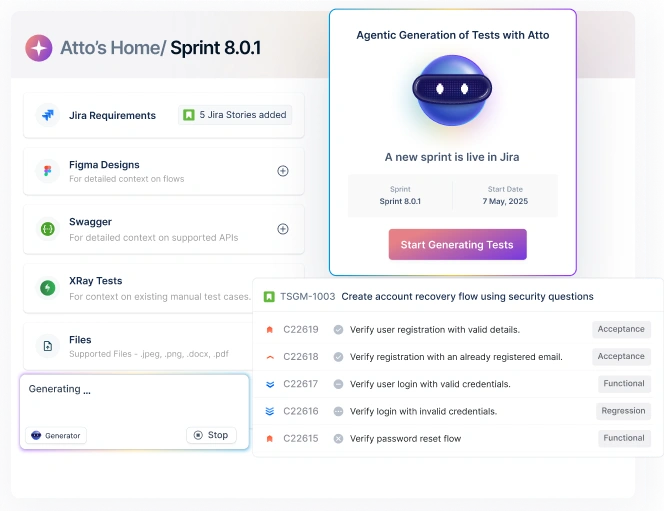

How Testsigma Can Streamline Your Test Estimation

Modern testing platforms now apply AI-driven analytics to make estimation more accurate and adaptive. By learning from execution history, environment stability, and defect trends, these tools help teams move beyond manual guesswork.

With Testsigma, QA teams can:

- Analyze historical test execution data to create more reliable effort baselines

- Compare estimated versus actual effort across releases to continuously refine predictions

- Identify regression-heavy areas and factor recurring effort into future estimates

- Adjust estimates dynamically based on environment stability and failure patterns

- Integrate estimation insights directly with test planning and execution workflows

By combining historical intelligence with planning tools, platforms like Testsigma help teams improve forecasting accuracy, reduce surprises, and plan testing effort with greater confidence.

Improving Test Estimation for Better Software Outcomes

Test estimation is about making informed decisions under uncertainty, not predicting exact outcomes.

Teams that treat estimation as a continuous, data-backed process can plan resources more effectively, manage change proactively, and reduce last-minute quality risks.

Choosing the right test estimation techniques strengthens delivery confidence and improves outcomes across the entire software lifecycle.

When estimation improves, everything downstream does too.

Frequently Asked Questions

Which test estimation technique is the most accurate?

No single model fits every scenario. Hybrid approaches that combine data-driven, task-based, and expert techniques usually deliver the most reliable estimates.

How often should test estimates be reviewed?

Estimates should be reviewed at every major scope change, sprint planning cycle, or release milestone to reflect evolving requirements and risks.

Does test automation always reduce testing effort and cost?

Not always. Automation becomes cost-effective only when script maintenance, environment stability, and long-term reuse are included in effort calculations.

Which metrics help most in refining future estimates?

Historical execution time, defect density, regression effort, and estimate-versus-actual trends provide the strongest signals for refining future estimates.

Should non-functional testing be estimated separately?

Yes. Performance, security, and usability testing have different effort drivers and should be estimated independently to avoid underestimating the overall test scope.