“Acceptance testing is not just about finding bugs. It is about ensuring the software is ready for users.” – Michael Bolton, Software tester.

Table Of Contents

- 1 Introduction

- 2 What is Acceptance Testing?

- 3 Why Acceptance Testing Is Critical

- 4 When to Perform Acceptance Testing

- 5 Types of Acceptance Testing With Practical Examples

- 6 The Acceptance Testing Process: Clear Step-by-Step Roadmap

- 7 How to Write Effective Acceptance Tests

- 8 How To Perform Acceptance Testing Using Testsigma

- 9 Entry and Exit Criteria for Acceptance Testing

- 10 Manual vs Automated Acceptance Testing: When and Why

- 11 Acceptance Testing in Agile, DevOps, and Modern SDLC

- 12 Limitations of Acceptance Testing

- 13 Best Practices for Streamlining Acceptance Testing

- 14 Common Challenges of Acceptance Testing

- 15 Top 5 Acceptance Testing Tools & Frameworks

- 16 Acceptance Testing vs Other Testing Types

- 17 Metrics and KPIs for Acceptance Testing Success

- 18 FAQs

Introduction

In 2025, release cycles are shorter, user expectations are brutal, and one bad rollout can undo months of trust. If you’re in QA, acceptance testing isn’t optional. It’s your last, best chance to prove the product works for real people in real situations.

What is Acceptance Testing?

Acceptance testing is the process of validating whether a software application meets the predefined acceptance criteria and is ready for release. It focuses on verifying that the product delivers the intended business value, functions correctly in real-world conditions, and satisfies stakeholder expectations.

Most people think acceptance testing is a box to tick at the end of a project. But it’s actually the moment of truth, the point where you stop asking “does it work in theory?” and start asking “does it work for the people who matter?”

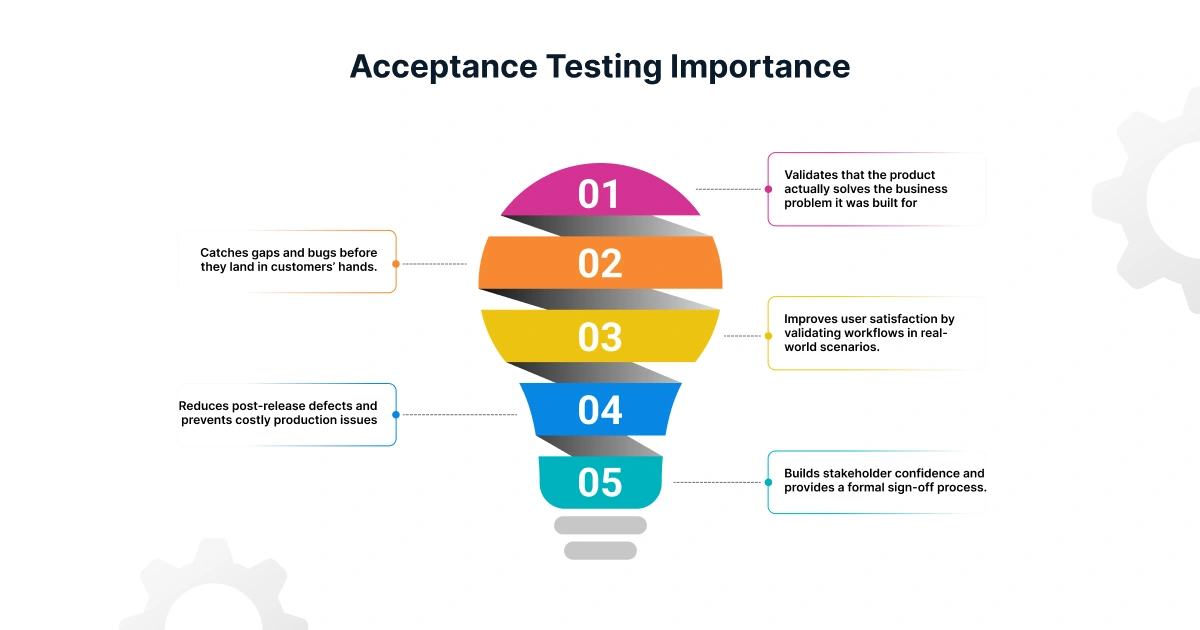

Why Acceptance Testing is Critical

Skipping acceptance testing invites trouble. Here’s what it does for you:

- Validates that the product actually solves the business problem it was built for.

- Catches gaps and bugs before they land in customers’ hands.

- Improves user satisfaction by validating workflows in real-world scenarios.

- Reduces post-release defects and prevents costly production issues.

- Builds stakeholder confidence and provides a formal sign-off process.

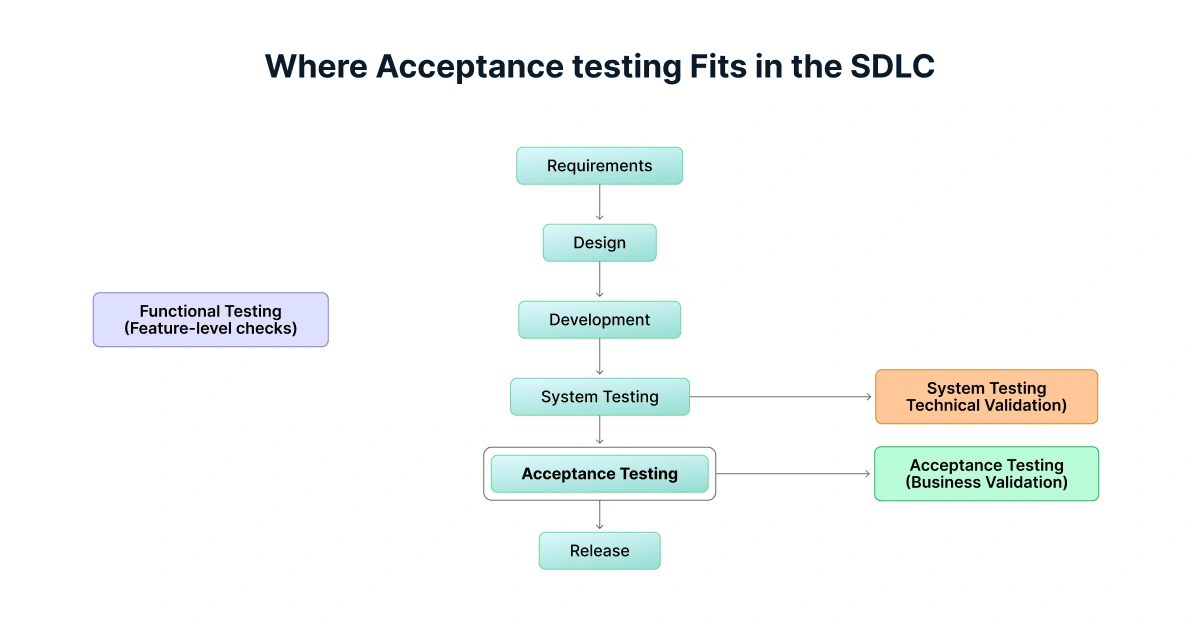

When to Perform Acceptance Testing

Acceptance testing is typically performed:

- At the end of the development cycle, before production release.

- After system and integration testing have confirmed technical stability.

- Before user onboarding or customer rollout.

Types of Acceptance Testing with Practical Examples

From validating business workflows with end users to ensuring systems meet regulatory standards, each acceptance testing type has its own purpose and real-world use cases.

User Acceptance Testing (UAT)

Conducted by end-users to verify the product works as intended in business workflows.

Example: A sales team tests a CRM’s lead assignment feature with real data before launch.

Alpha and Beta Testing

Alpha Testing: Internal testing by the development or QA team.

Beta Testing: Testing by a select group of real users.

Example: A beta group uses a new mobile app to find bugs missed in internal QA.

Operational/Production Acceptance Testing

Checks system operability, backup, recovery, and monitoring readiness.

Example: Testing failover in a cloud-hosted application.

Contract and Regulatory Acceptance Testing

Verifies compliance with contractual obligations or regulatory requirements.

Example: Financial software validated against updated tax compliance laws.

Compliance, Performance, and Security Acceptance Testing

Ensures non-functional requirements are met before go-live.

Example: Ensuring a retail site can handle 20,000 concurrent users without slowing down.

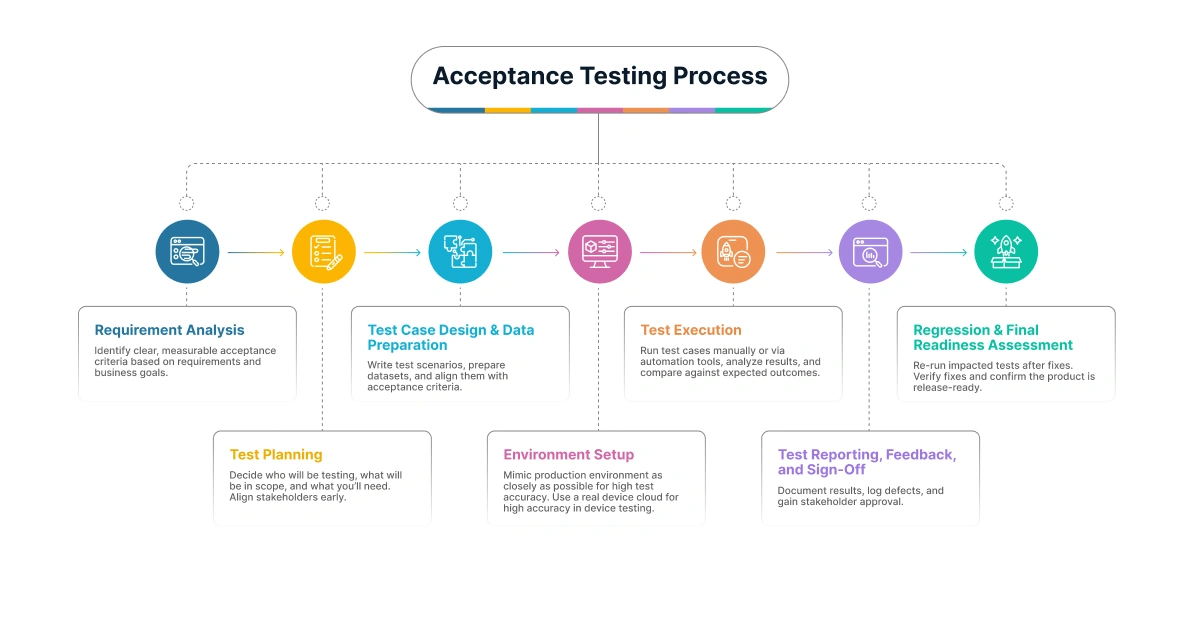

The Acceptance Testing Process: Clear Step-by-step Roadmap

Here is a step-by-step process to ensure nothing is missed and every release meets real user expectations.

1. Requirement Analysis: Identify clear, measurable acceptance criteria based on requirements and business goals.

2. Test Planning: Decide who will be testing, what will be in scope, and what you’ll need. Align stakeholders early.

3. Test Case Design & Data Preparation: Write test scenarios, prepare datasets, and align them with acceptance criteria.

4. Environment Setup: Mirror the production environment as closely as possible so results reflect real behavior. For device testing, use a real device cloud rather than emulators alone.

5. Test Execution: Run test cases manually or via automation tools, analyze results, and compare against expected outcomes.

6. Test Reporting, Feedback, and Sign-Off: Document results, log defects, and gain stakeholder approval.

7. Regression & Final Readiness Assessment: Re-run impacted tests after fixes. Verify fixes and confirm the product is release-ready.

How to Write Effective Acceptance Tests

Write clear, measurable statements defining the conditions under which a feature is accepted. Here’s how you can do it:

User Story: As a user, I want to reset my password so that I can regain access to my account.

Acceptance Criteria:

- A Forgot Password link is available on the login page

- User receives a password reset email after entering a registered email address

- Reset link expires after 30 minutes

- User can set a new password and successfully log in with it
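One way to see how these criteria translate into executable checks is the minimal Python sketch below. It is an illustration under stated assumptions, not a reference implementation: `FakeAuthService` is a hypothetical in-memory stand-in for the application, and the email address and passwords are made up. The first criterion (the Forgot Password link on the login page) is a UI check and is not covered here.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta
import secrets

RESET_LINK_TTL = timedelta(minutes=30)  # taken from the acceptance criteria


@dataclass
class FakeAuthService:
    """Hypothetical in-memory stand-in for the system under test."""
    passwords: dict = field(default_factory=dict)  # email -> password
    outbox: list = field(default_factory=list)     # (email, token) pairs "sent"
    tokens: dict = field(default_factory=dict)     # token -> (email, issued_at)

    def request_reset(self, email, now):
        # Only registered addresses receive a reset email.
        if email not in self.passwords:
            return
        token = secrets.token_urlsafe(16)
        self.tokens[token] = (email, now)
        self.outbox.append((email, token))

    def reset_password(self, token, new_password, now):
        email, issued_at = self.tokens.get(token, (None, None))
        # Reject unknown tokens and links older than the allowed lifetime.
        if email is None or now - issued_at > RESET_LINK_TTL:
            return False
        self.passwords[email] = new_password
        del self.tokens[token]  # each link works only once
        return True

    def login(self, email, password):
        return self.passwords.get(email) == password


START = datetime(2025, 1, 1, 9, 0)  # arbitrary fixed clock for repeatable tests


def _service_with_reset_requested():
    svc = FakeAuthService(passwords={"ana@example.com": "old-pass"})
    svc.request_reset("ana@example.com", now=START)
    return svc


def test_registered_user_receives_reset_email():
    svc = _service_with_reset_requested()
    assert [email for email, _ in svc.outbox] == ["ana@example.com"]


def test_reset_link_expires_after_30_minutes():
    svc = _service_with_reset_requested()
    _, token = svc.outbox[0]
    assert not svc.reset_password(token, "new-pass", now=START + timedelta(minutes=31))
    assert svc.login("ana@example.com", "old-pass")  # old password still valid


def test_user_can_log_in_with_new_password():
    svc = _service_with_reset_requested()
    _, token = svc.outbox[0]
    assert svc.reset_password(token, "new-pass", now=START + timedelta(minutes=5))
    assert svc.login("ana@example.com", "new-pass")
    assert not svc.login("ana@example.com", "old-pass")
```

Each test maps to exactly one criterion, so a failure points straight at the unmet condition. Passing the clock in as `now` keeps the expiry check deterministic instead of depending on real elapsed time.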

How Testsigma can help: You can write these criteria as plain-language test steps, run them on real devices in the cloud, and integrate them directly with CI/CD for continuous testing.

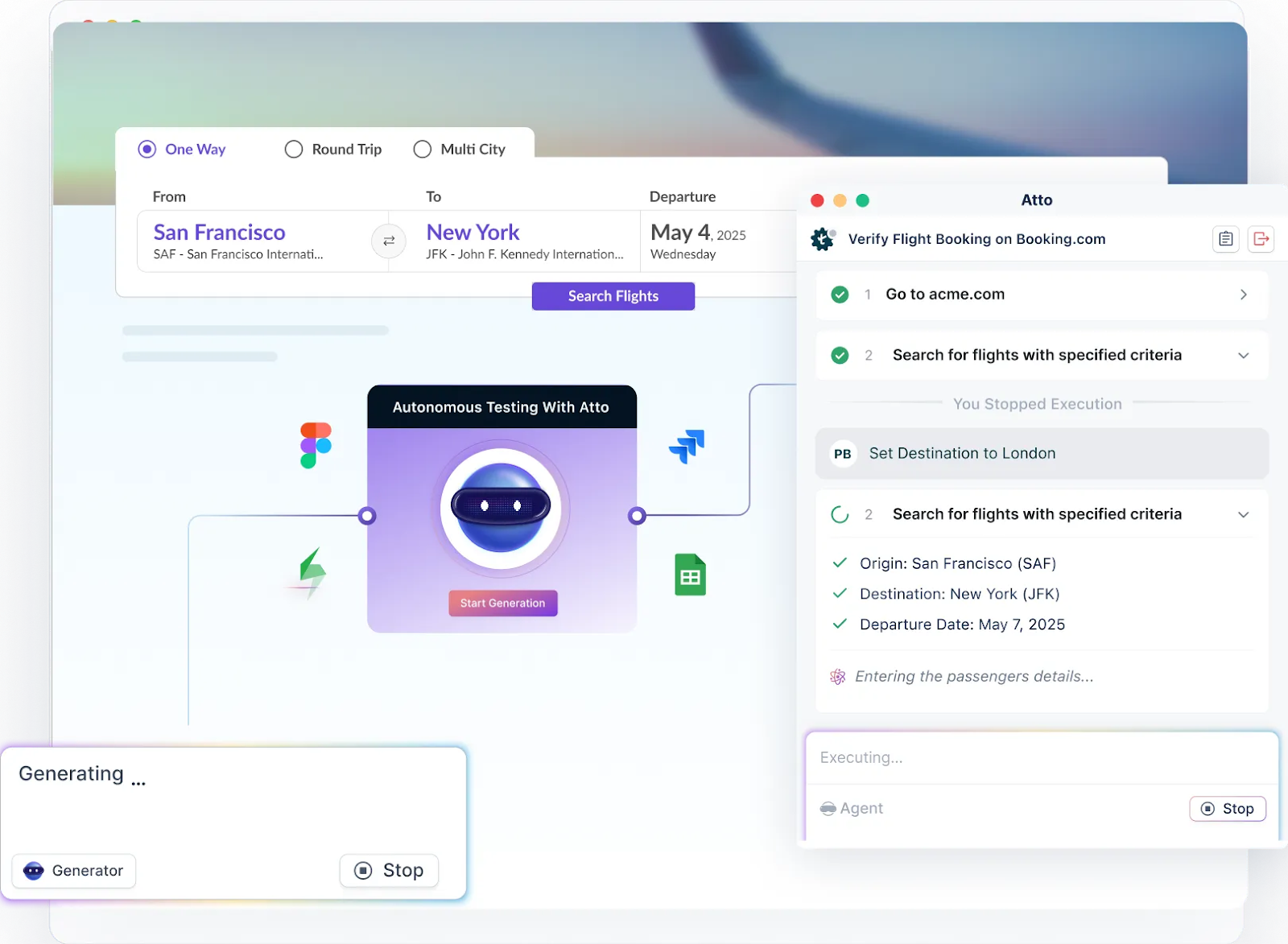

How to Perform Acceptance Testing Using Testsigma

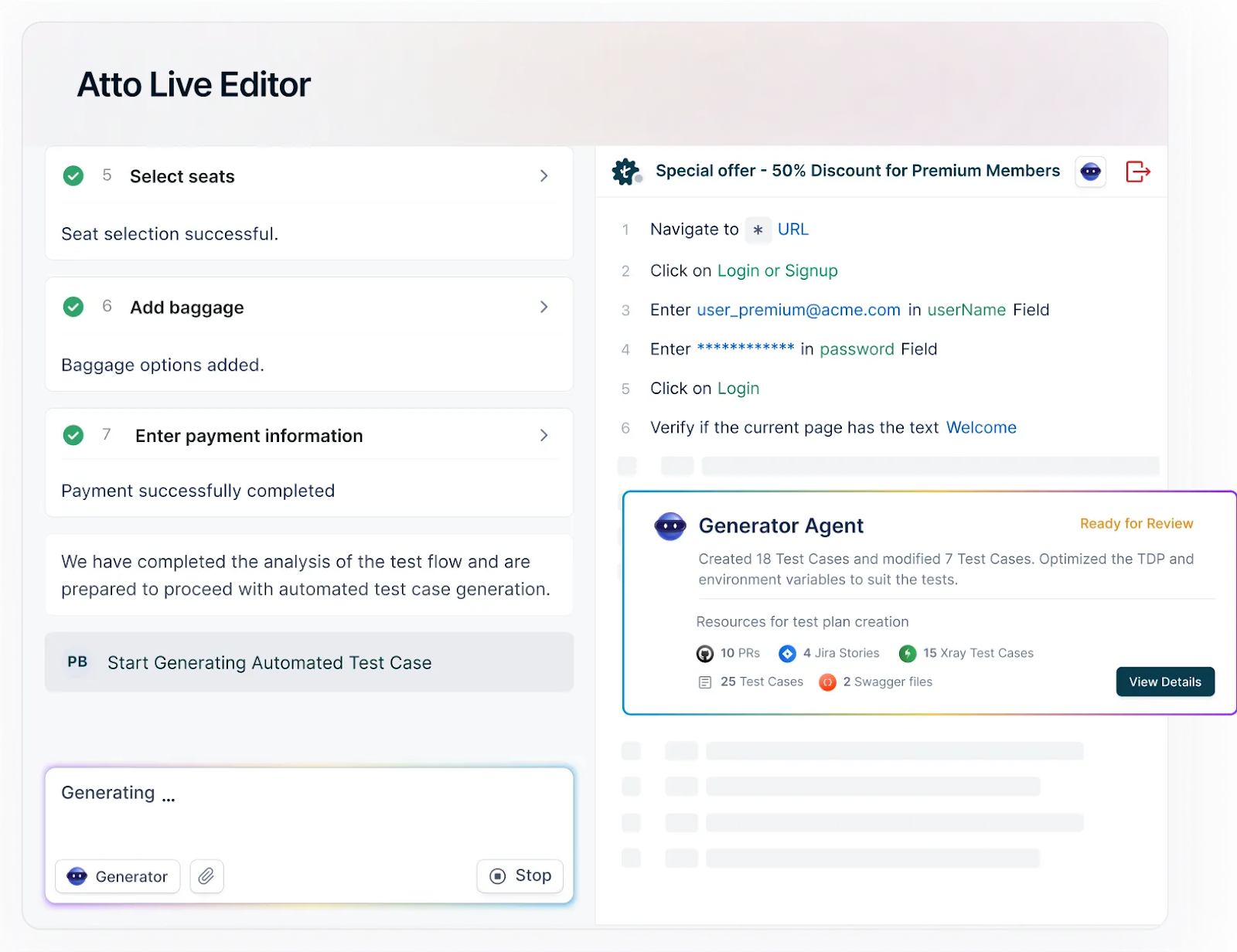

Using Testsigma, you can create acceptance test cases using plain English without coding knowledge. Using Atto and Copilot, you can generate test cases in seconds from prompts, JIRA user stories, Figma designs, screenshots, docs, PDFs, videos, images, etc.

Step 1: Create a free account to access the cloud platform.

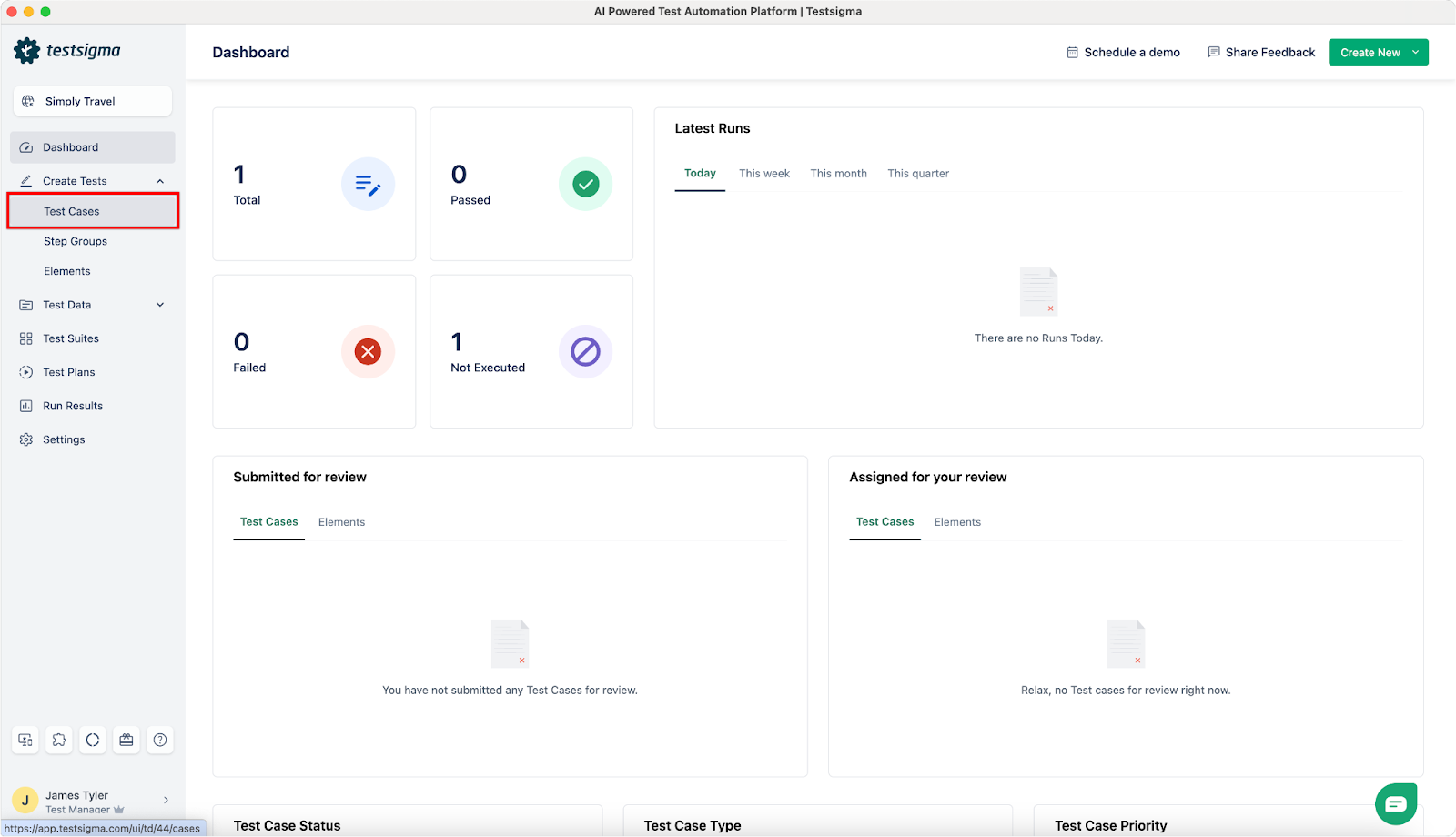

Step 2: Once you sign in, you will see a dashboard like the one below. Click on Create tests -> Test Case.

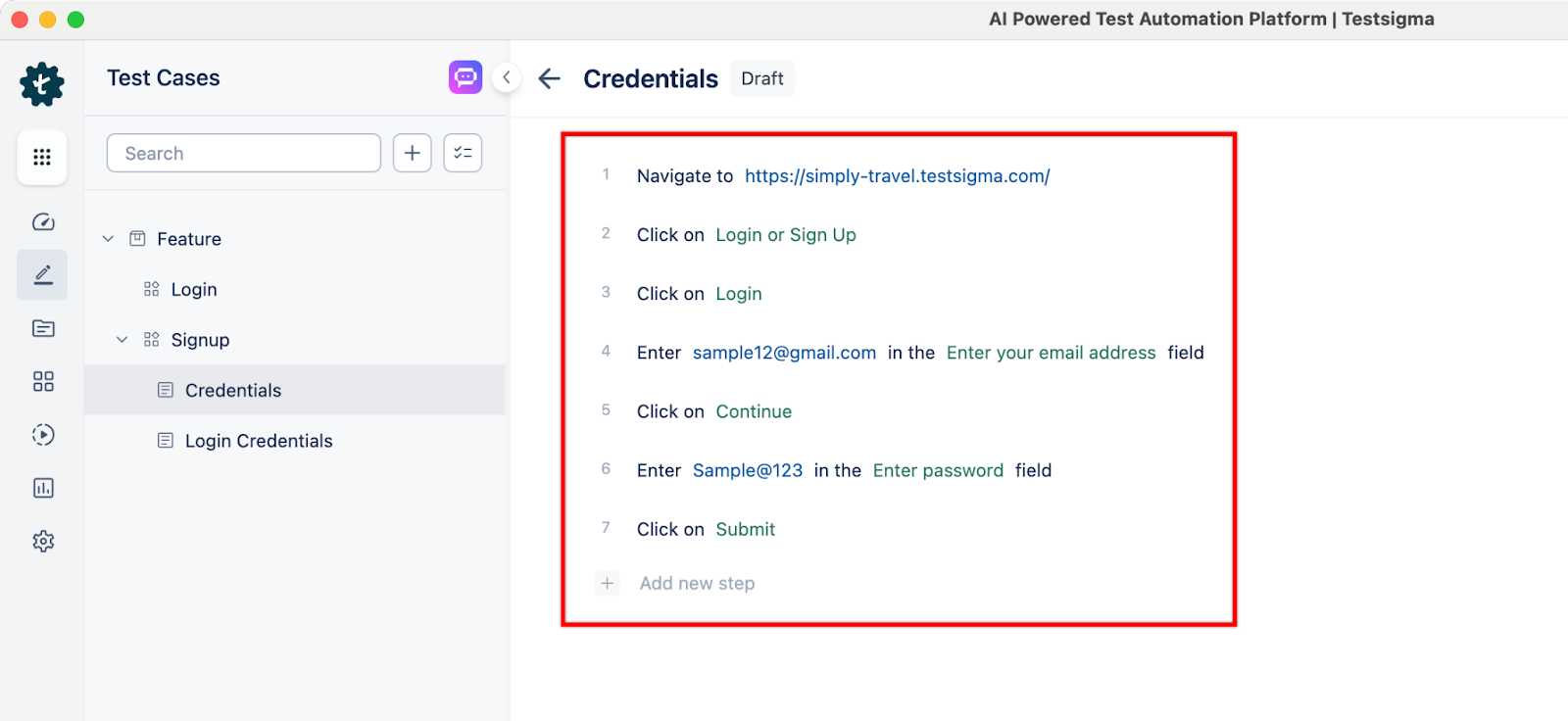

Step 3: Use NLPs to create test steps as per your test case scenario.

Step 4: Once test cases are created, click on Run to choose which test environment to execute tests on. Click on Run to execute tests.

Step 5: Once test cases are executed, you will get detailed automated test reports with logs, videos, screenshots, and detailed test results.

Entry and Exit Criteria for Acceptance Testing

Here is what QAs should know about entry and exit criteria for acceptance testing:

Entry Criteria:

- All requirements have been documented and analyzed.

- A test plan has been created and approved.

- The test environment is set up and ready to use.

- All test cases have been designed and reviewed.

- The necessary test data has been prepared.

- System and integration tests have passed.

- All acceptance criteria have been documented and approved.

- No critical defects are open.

Exit Criteria:

- All acceptance test cases have been executed.

- All defects have been identified and reported.

- All critical defects have been fixed.

- The software meets all of the acceptance criteria.

- The software is ready for stakeholder sign-off and deployment.
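The exit criteria above can be checked mechanically once a test cycle has been summarized. The sketch below is a hedged Python illustration: `AcceptanceRun` and its field names are hypothetical, and a real team would populate them from its own test management or defect tracking tool. It treats any failed case as an unmet acceptance criterion, which is a simplifying assumption.

```python
from dataclasses import dataclass


@dataclass
class AcceptanceRun:
    """Hypothetical summary of one acceptance test cycle."""
    total_cases: int
    executed_cases: int
    failed_cases: int
    open_critical_defects: int


def unmet_exit_criteria(run: AcceptanceRun) -> list[str]:
    """Return the exit criteria that are not yet satisfied (empty list = ready)."""
    unmet = []
    if run.executed_cases < run.total_cases:
        unmet.append("not all acceptance test cases have been executed")
    if run.open_critical_defects > 0:
        unmet.append("critical defects are still open")
    if run.failed_cases > 0:
        # Assumption: every failed case corresponds to an unmet criterion.
        unmet.append("some acceptance criteria are not met")
    return unmet


# Illustrative numbers only.
run = AcceptanceRun(total_cases=40, executed_cases=38,
                    failed_cases=0, open_critical_defects=1)
for reason in unmet_exit_criteria(run):
    print("Blocked:", reason)
```

Returning the list of unmet criteria, rather than a single boolean, gives stakeholders the reason a sign-off is blocked.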

Manual Vs Automated Acceptance Testing: When and Why

Most QA teams face this dilemma: do we run acceptance tests manually, or should we automate them? Here is a breakdown of what works best and when:

When to Choose Manual Acceptance Testing

Manual testing works best when the goal is to validate user experience or subjective business logic.

- Exploratory testing: Manual testers can easily spot usability gaps that automation cannot.

- One-off tests: Validating rare workflows that aren’t worth the automation effort.

When to Automate Acceptance Testing

Automation becomes crucial when speed and scalability come into play.

- Regression testing: Running the same acceptance suite every sprint or release.

- Complex systems: Ensuring multiple integrations and APIs behave consistently.

- CI/CD pipelines: Integrating acceptance tests so every build gets real-time validation.

- High test coverage: Reducing human error and ensuring no critical test scenario is missed.

Why Modern QA Teams Are Shifting to Automation

Most QA teams don’t choose between manual and automated testing; they blend both. Still, automation has a clear edge when:

- Releases are frequent.

- Teams are distributed, and collaboration depends on consistent, shareable test cases.

- Non-coders need to contribute. Platforms like Testsigma make test automation accessible using codeless and AI-based test generation techniques.

Acceptance Testing in Agile, DevOps, and Modern SDLC

Modern software delivery requires acceptance testing to move earlier in the SDLC and become continuous for faster releases.

- In Agile, acceptance testing isn’t just a QA task. It’s shared across developers, testers, product owners, and business stakeholders.

- In a DevOps pipeline, acceptance tests are automated and run as part of CI/CD workflows. Every new build or deployment automatically runs acceptance tests, giving immediate feedback on whether business-critical flows are still intact. This prevents bugs from slipping into production and reduces the risk of potential issues.

- In the modern SDLC, acceptance testing is no longer a one-time activity at the end of development. It’s part of a shift-left testing strategy that starts earlier in the lifecycle.

No-code testing platforms like Testsigma make it easier to integrate acceptance testing into CI/CD pipelines for continuous testing without requiring coding knowledge.
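For teams that script their own pipeline step, the idea of an acceptance gate can be sketched in Python with the standard `unittest` module. This is an assumption-laden illustration: `checkout_total` is a hypothetical business function standing in for the deployed application, and the prices and discount are made up. The point is only that the step reports pass or fail through an exit code the pipeline can act on.

```python
import unittest


def checkout_total(prices, discount_rate=0.0):
    """Hypothetical business function standing in for the application under test."""
    subtotal = sum(prices)
    return round(subtotal * (1 - discount_rate), 2)


class CheckoutAcceptanceTest(unittest.TestCase):
    """Business-critical flows that every build must keep intact."""

    def test_customer_sees_discounted_total(self):
        self.assertEqual(checkout_total([20.0, 30.0], discount_rate=0.1), 45.0)

    def test_empty_cart_totals_zero(self):
        self.assertEqual(checkout_total([]), 0.0)


def run_acceptance_gate() -> int:
    """Run the suite and return a process exit code (0 = pass, 1 = fail)."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(CheckoutAcceptanceTest)
    result = unittest.TextTestRunner(verbosity=1).run(suite)
    return 0 if result.wasSuccessful() else 1


# A CI step would typically end with:
#     raise SystemExit(run_acceptance_gate())
```

Most CI systems treat a non-zero exit code as a failed step, so a broken business flow stops the build before deployment.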

Limitations of Acceptance Testing

Some of the disadvantages of acceptance testing are:

- User knowledge requirements: Users must have basic knowledge about the software to participate effectively in this testing.

- Low user participation: Some users may be reluctant to participate in acceptance testing because they lack the time or the interest.

- Slow feedback: It can take time to collect and analyze feedback from a large group of users, especially if their opinions differ.

- Development team involvement: The development team is typically not involved in acceptance testing, which can lead to communication gaps and misunderstandings.

Best Practices for Streamlining Acceptance Testing

Acceptance testing can easily become a bottleneck if it’s treated as an afterthought. The smartest QA teams streamline the process by focusing on clarity, collaboration, and the right level of automation. Here are some of the best practices:

1. Involve Stakeholders Early

Don’t wait until the end of the project to bring in business users or product owners. Involve them while defining acceptance criteria so tests align with real business needs.

2. Write Clear, Reusable Tests

Invest time in writing acceptance tests that are modular and reusable across sprints and releases. This makes maintenance easier and avoids reinventing the wheel every time requirements shift.

3. Test on Real Devices

Simulated conditions only go so far. Run acceptance tests with real-world test data and real devices to uncover issues that wouldn’t appear in a lab setting.

4. Foster Cross-Team Collaboration

Acceptance testing shouldn’t live in a QA silo. Developers, testers, business analysts, and even end-users should collaborate to validate functionality against expectations.

5. Embrace AI, Cloud, and Automation Tools

Manual acceptance testing is valuable for usability and edge cases, but automation ensures consistency and speed for wider test coverage. Modern platforms like Testsigma let you scale tests and integrate them into CI/CD pipelines, so testers can test continuously.

Common Challenges of Acceptance Testing

Like any other testing process, acceptance testing has its challenges:

- Unclear or ambiguous requirements can lead to misunderstandings between stakeholders and testing teams.

- Achieving comprehensive test coverage for all possible scenarios and user interactions is challenging.

- Frequent changes in requirements during the development process can impact test planning and execution.

- Obtaining realistic, comprehensive test data for varied scenarios is difficult.

- Coordinating and scheduling involvement from actual end-users for testing can be logistically challenging.

- The subjective nature of user acceptance can introduce variability in interpretation and evaluation.

- Ensuring compatibility with testing tools and environments can be problematic, impacting the test execution.

Top 5 Acceptance Testing Tools & Frameworks

Some of the leading acceptance testing tools and frameworks are as follows:

1. Testsigma

A cloud-based, codeless, agentic AI-driven test automation platform that allows testers to automate web, mobile, API, desktop, SAP, Salesforce, and ERP application testing. Testsigma lets you write test cases in simple English without requiring any code or setup. It integrates into CI/CD pipelines and allows even non-technical team members to create automated tests.

With its Agentic AI capabilities, QA teams can plan, create, execute, analyze, and maintain tests using different AI agents. It is perfect for scaling acceptance testing without heavy coding. Using Testsigma, you can run your tests on 3000+ real environments for comprehensive device coverage, high test accuracy, and test coverage.

2. Cucumber

A widely used Behavior-Driven Development framework that allows testers to write tests in plain English using Gherkin syntax. Cucumber supports multiple languages, such as Java, JavaScript, Python, and Ruby. It is great for improving collaboration between QA, developers, and business analysts.

3. Selenium

One of the most established test automation frameworks for browser-based acceptance testing. Selenium provides powerful APIs to simulate user interactions and validate workflows across browsers. It supports multiple programming languages such as Java, Python, C#, etc., and is highly flexible. But it often requires significant coding and setup compared to low-code/no-code tools.

4. SpecFlow

A .NET-focused acceptance testing framework, similar to Cucumber but tailored for Microsoft ecosystems. It supports BDD and allows tests to be written in natural language, then executed as automated tests within Visual Studio. Ideal for QA teams in enterprises that are heavily invested in .NET technologies.

5. Robot Framework

A keyword-driven acceptance testing framework that’s versatile and extensible. Robot Framework supports Python, Java, and JavaScript libraries, making it suitable for a wide range of applications.

A detailed breakdown of each tool is as follows:

| Tool / Framework | Best For | Pros | Cons |
| --- | --- | --- | --- |
| Testsigma | Teams wanting a fast, scalable, codeless platform with powerful AI capabilities | Write tests in plain English; works across web, mobile, API, desktop, Salesforce, SAP, and ERPs; quick setup, CI/CD ready | Not open source; limited if you want deep custom scripting |
| Cucumber | BDD-driven teams needing strong collaboration between business & tech | Multi-language support; strong community | Requires coding skills for step definitions; setup can be complex |
| Selenium | Code-heavy teams needing full control for browser-based testing | Flexible; wide language support; open source with a strong community | Steep learning curve; requires frameworks/libraries for reporting & maintenance |
| SpecFlow | QA teams in .NET environments using BDD | Seamless integration with Visual Studio; strong fit for Microsoft stack | Limited outside .NET ecosystem; requires coding for automation |
| Robot Framework | Teams preferring keyword-driven acceptance testing with high reusability | Easy to read/write with keywords; extensible with Python/Java/JS libraries | Limited advanced UI testing support; setup can be time-consuming |

Acceptance Testing Vs Other Testing Types

Most QA teams know acceptance testing is about validating readiness for release, but confusion often creeps in. Is it the same as system testing? How is it different from functional testing? And where do regression or beta tests fit in? Below is a detailed breakdown of the most common mix-ups QAs face and the difference between acceptance testing and other types:

| Type | What QAs Confuse | Key Difference | Example |
| --- | --- | --- | --- |
| System Testing | Both test the full system. | System testing validates technical correctness. Acceptance testing validates business fit. | System testing checks login works across browsers. Acceptance testing confirms a user can successfully log in and access their dashboard to do actual work. |
| Functional Testing | Both validate features against requirements. | Functional testing is feature-level. Acceptance testing is workflow-level. | Functional: Does the ‘Reset Password’ button send an email? Acceptance: Can the user reset their password and log back in successfully? |
| Regression Testing | Both run before release. | Regression ensures changes don’t break old features. Acceptance ensures the product is ready for real users. | Regression: New search filter doesn’t break product listing. Acceptance: Customer can still complete checkout smoothly. |
| Beta Testing | Both involve real users. | Beta testing is exploratory testing by external users. Acceptance testing is structured testing by business stakeholders. | Beta: Real customers try the new app version before official launch. Acceptance: Business analyst tests the order approval workflow. |
| UAT | The two are often treated as the same thing. | User Acceptance Testing is a subtype of acceptance testing. | UAT: Usually performed by real end users. Acceptance: Usually performed by stakeholders before release. |

Metrics and KPIs for Acceptance Testing Success

QA teams can track the following metrics to gauge acceptance testing success:

- Test Coverage (%) = (Number of Covered Elements / Total Number of Elements) x 100

- Defect Leakage (%) = (Defects found after release / Total defects found) x 100

- Feedback Cycle Time = End Time – Start Time

- Customer Satisfaction Score (CSAT) = (Number of Satisfied Responses / Total Number of Responses) x 100
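The formulas above are simple enough to compute directly. The Python sketch below is a minimal illustration; the function names and the sample numbers are hypothetical, chosen only to show the arithmetic.

```python
from datetime import datetime, timedelta


def percentage(part: int, whole: int) -> float:
    """Return part/whole as a percentage, or 0.0 when whole is zero."""
    return 0.0 if whole == 0 else part * 100 / whole


def coverage_pct(covered_elements: int, total_elements: int) -> float:
    return percentage(covered_elements, total_elements)


def defect_leakage_pct(found_after_release: int, total_found: int) -> float:
    return percentage(found_after_release, total_found)


def feedback_cycle_time(start: datetime, end: datetime) -> timedelta:
    return end - start


def csat_pct(satisfied_responses: int, total_responses: int) -> float:
    return percentage(satisfied_responses, total_responses)


# Illustrative inputs, not real project data.
print(coverage_pct(45, 50))        # 90.0
print(defect_leakage_pct(3, 60))   # 5.0
print(csat_pct(170, 200))          # 85.0
print(feedback_cycle_time(datetime(2025, 1, 6, 9, 0),
                          datetime(2025, 1, 8, 15, 0)))  # 2 days, 6:00:00
```

Guarding against a zero denominator matters in practice: early in a cycle there may be no defects or survey responses yet, and the report should show 0.0 rather than crash.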

FAQs

Who defines acceptance criteria and test scope?

Typically, business stakeholders, product owners, and QA leads collaborate to define acceptance criteria and test scope.

What is an example of acceptance testing?

A retailer testing the e-commerce checkout process with live payment gateways before launch.

What is the difference between QA and acceptance testing?

The QA process covers the entire testing lifecycle, while acceptance testing is a specific stage focused on verifying readiness against agreed criteria.