Table of Contents

1. Key Takeaways
2. Generative AI in Software Testing: Implementation & Its Future
3. What Is Generative AI in Software Testing?
4. How Is Generative AI Transforming QA in 2025?
5. How Is GenAI-Based Testing Different From Traditional Test Automation?
6. What Are the Benefits of Generative AI in QA?
7. What Are the Leading Generative AI Testing Tools in 2025?
8. How to Implement Generative AI in Your Testing Workflow With Testsigma
9. What GenAI Capabilities Does Testsigma Offer?
10. What Are the Challenges of Generative AI in QA?
11. What Is the Future of Generative AI in Software Testing?
12. Conclusion
13. Frequently Asked Questions

Key Takeaways

- Generative AI in software testing uses LLMs and AI models to automatically create test cases, scripts, test data, and scenarios from requirements or prompts.

- Unlike traditional automation that runs tests you write, GenAI writes tests you never thought of and adapts when your application changes.

- Teams using GenAI report significant productivity gains on tasks like script generation, maintenance, and defect analysis.

- The biggest challenges include irrelevant test generation, compute overhead, and training data quality, all of which require planning upfront.

- Testsigma brings 7 GenAI capabilities into a single platform, from natural language test generation and autonomous agents to self-healing and CI/CD integration.

Generative AI in Software Testing: Implementation & Its Future

Generative AI in software testing uses AI models to automatically create test cases, scripts, test data, and scenarios from requirements or prompts, without manual scripting. The market for it is projected to grow from $48.9 million in 2024 to $351.4 million by 2034, a 21.8% CAGR (Market.us). With testing consuming 15-25% of project budgets, GenAI offers a way to cut a significant share of that overhead. Teams adopting it now are seeing results that earlier, experimental tools could not deliver.
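As a quick consistency check on the cited projection, the standard compound annual growth rate formula over the 10 years from 2024 to 2034 reproduces the quoted 21.8% from the two market values given above:

$$
\text{CAGR} = \left(\frac{V_{2034}}{V_{2024}}\right)^{1/10} - 1
= \left(\frac{351.4}{48.9}\right)^{1/10} - 1
\approx 7.186^{0.1} - 1
\approx 0.218
$$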

What Is Generative AI in Software Testing?

Generative AI in software testing is the use of large language models (LLMs), transformer architectures, and multimodal AI to automatically generate test cases, scripts, synthetic test data, and bug reports, by analyzing requirements, code, UI definitions, API schemas, and historical test logs.

Unlike traditional test automation, which executes a predefined script, generative AI creates new test artifacts on demand. It adapts to application changes, generates edge-case coverage that human testers often miss, and can use test execution history to refine its outputs over time.
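Generating test artifacts on demand usually starts with a prompt that pins down the output structure and forces coverage of failure paths. The sketch below is a minimal, hypothetical prompt template built with Python's standard `string.Template`; the wording, the JSON field names (`title`, `steps`, `expected_result`, `type`), and the `build_test_prompt` helper are illustrative assumptions, not the prompt used by Testsigma or any other vendor.

```python
from string import Template

# Hypothetical prompt template: the wording and JSON field names are illustrative
# assumptions, not the prompt used by Testsigma or any other vendor.
TEST_CASE_PROMPT = Template(
    "You are a QA engineer. Generate test cases for the requirement below.\n"
    "Requirement:\n$requirement\n\n"
    'Return a JSON array. Each item must have "title", "steps" (list of strings), '
    '"expected_result", and "type" (one of: positive, negative, edge).\n'
    "Include at least $min_negative negative and $min_edge edge-case tests."
)


def build_test_prompt(requirement: str, min_negative: int = 2, min_edge: int = 2) -> str:
    """Fill the template so the model is explicitly asked for failure paths and edge cases."""
    if not requirement.strip():
        raise ValueError("requirement must not be empty")
    return TEST_CASE_PROMPT.substitute(
        requirement=requirement.strip(),
        min_negative=min_negative,
        min_edge=min_edge,
    )


# Example: a user story pasted in as the requirement.
story = "As a user, I can reset my password via an emailed link that expires in 30 minutes."
print(build_test_prompt(story))
```

Asking for a fixed JSON shape makes the model's answer machine-checkable, which is what lets a review step reject malformed or incomplete cases before they reach a suite.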

How Is Generative AI Transforming QA in 2025?

QA has evolved through five distinct phases, each one compressing the manual effort of the last:

| Phase | What Changed | Remaining Limitation |
| --- | --- | --- |
| Manual Testing | Human testers ran and documented every test case | Slow, error-prone, not scalable |
| Script-Based Automation | Frameworks like Selenium automated repetitive flows | Required coding; high maintenance cost |
| Codeless Automation | Platforms like Testsigma enabled no-code test design | Still required human input to design tests |
| Generative AI for Testing | GenAI creates test scripts automatically from requirements, UIs, and user stories | Outputs require human validation |
| Agentic AI Testing | Autonomous agents plan, generate, execute, heal, analyze, and report | Early-stage; integration overhead with legacy stacks |

The current frontier, agentic AI testing, goes beyond test generation. Platforms like Testsigma deploy specialized agents that don’t just write tests but also optimize them, self-heal broken cases, analyze failures, and plan next steps autonomously across the full QA cycle.

How is GenAI-Based Testing Different From Traditional Test Automation?

Traditional automation runs the tests you write; GenAI writes the tests you never thought of.

| Capability | Traditional Automation | Generative AI Testing |
| --- | --- | --- |
| Test creation | Manual scripting by engineers | Auto-generated from natural language, Figma, JIRA, videos, and docs |
| Script maintenance | Manual fix every time the UI changes | Self-healing agents detect and repair broken scripts |
| Test coverage | Limited to paths the tester thought to script | AI surfaces edge cases and untested user flows automatically |
| Accessibility | Requires coding skills | Plain English: any team member can create tests |
| Defect analysis | Tester manually reviews failure logs | AI classifies severity, captures logs, and maps root cause |
| Adaptability | Breaks when the app changes | ML models retrain on new behavior and adapt |
| CI/CD fit | Needs manual triggering or scripted pipelines | Native integration; tests auto-trigger on code commit |

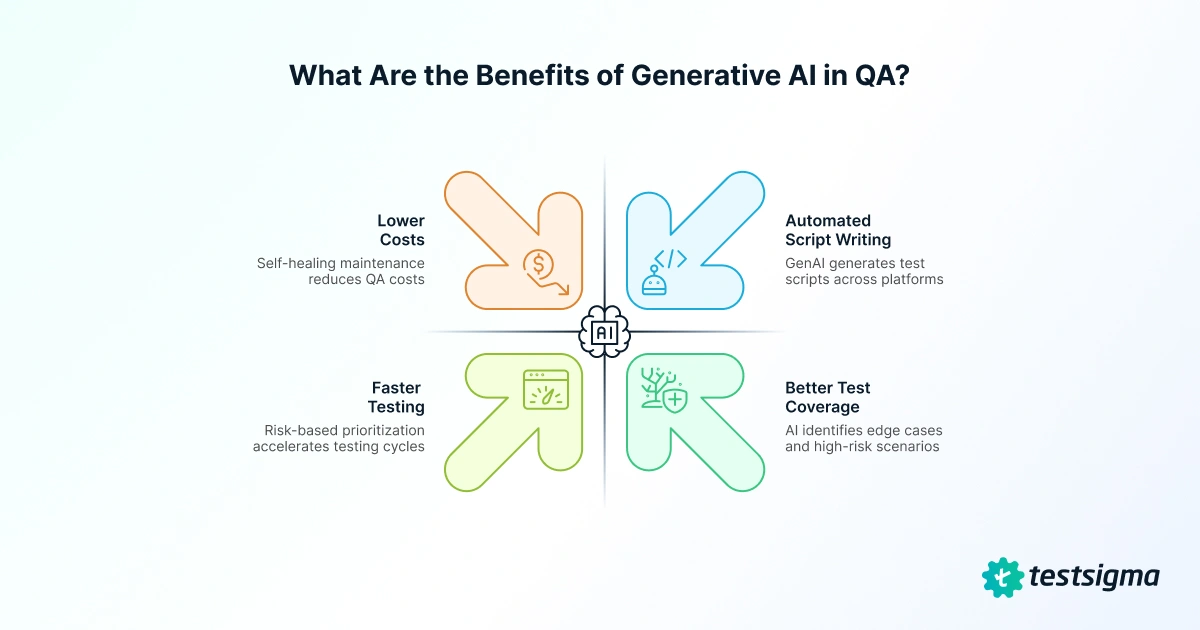

What Are the Benefits of Generative AI in QA?

GenAI doesn’t just automate testing; it rethinks how testing gets done across the entire QA workflow.

- Automated script writing: GenAI analyzes app structure and requirements to generate test scripts across web, mobile, API, and desktop, cutting test creation time by up to 80% (AWS).

- Better test coverage: It studies workflows, session logs, and bug history to surface edge cases humans miss. 63% of enterprises struggle with test automation scalability (World Quality Report 2023), and GenAI addresses that without adding headcount.

- Faster testing: IBM reports 30-40% productivity gains on testing tasks like script generation and maintenance. Risk-based prioritization and parallel execution compress cycles further.

- Lower costs: Fewer scripting hours, self-healing maintenance, and earlier defect detection (fixing bugs before release is commonly cited as 10-100x cheaper than fixing them afterward) compound into real savings. TCS reported that GenAI cut product development cycles by up to 20% in QA functions.
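GenAI tools differ in how well they surface edge cases, so a deterministic baseline is useful for judging their output. The sketch below is classic boundary-value analysis, a traditional technique rather than a GenAI method, applied to a hypothetical numeric input field with an inclusive valid range; the function names are illustrative.

```python
def boundary_values(minimum: int, maximum: int) -> list[int]:
    """Classic boundary-value analysis for an inclusive integer range [minimum, maximum].

    Returns the values just below, on, and just above each boundary,
    de-duplicated and sorted.
    """
    if minimum > maximum:
        raise ValueError("minimum must not exceed maximum")
    candidates = {minimum - 1, minimum, minimum + 1, maximum - 1, maximum, maximum + 1}
    return sorted(candidates)


def expected_outcome(value: int, minimum: int, maximum: int) -> str:
    """Label each value so an expected result can be attached to the generated test."""
    return "accept" if minimum <= value <= maximum else "reject"


# Hypothetical "quantity" field that accepts 1 to 10 items.
for v in boundary_values(1, 10):
    print(v, expected_outcome(v, 1, 10))
```

If a GenAI-generated suite for the same field does not at least cover these six values, that is a quick signal its edge-case coverage needs review.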

What Are the Leading Generative AI Testing Tools in 2025?

The market is crowded, but a few platforms stand out for what they actually deliver in production.

| Tool | Best For | GenAI Differentiator | Testing Types |
| --- | --- | --- | --- |
| Testsigma | Unified no-code agentic testing | 7 specialized AI agents (Atto crew); NL test creation from prompts, Figma, JIRA, videos | Web, Mobile, Desktop, API, SAP, Salesforce |
| Katalon | Mixed-skill teams | Self-healing scripts; AI analytics for predictive insights | Web, Mobile, Desktop, API |
| Tricentis TOSCA | Enterprise end-to-end testing | Risk-based test prioritization; smart test data generation | Web, Mobile, API, SAP, ERP |
| Perfecto Scriptless | Regression & web testing | GPT-driven test case suggestions | Web, Mobile |
| Appvance | Exploratory coverage expansion | AI-driven application path exploration; visual health charts | Web, Mobile, API |

How to Implement Generative AI in Your Testing Workflow With Testsigma

Follow this 9-step path to deploy GenAI testing with Testsigma:

Assess Your Readiness

Audit your current automation coverage, test data quality, and CI/CD maturity.

Choose Your Platform

Select Testsigma for unified web, mobile, API, desktop, SAP, and Salesforce coverage with minimal cloud setup.

Connect Your Sources

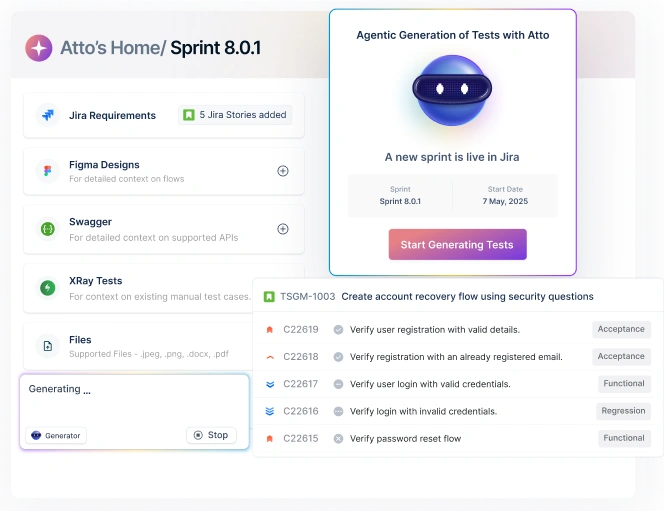

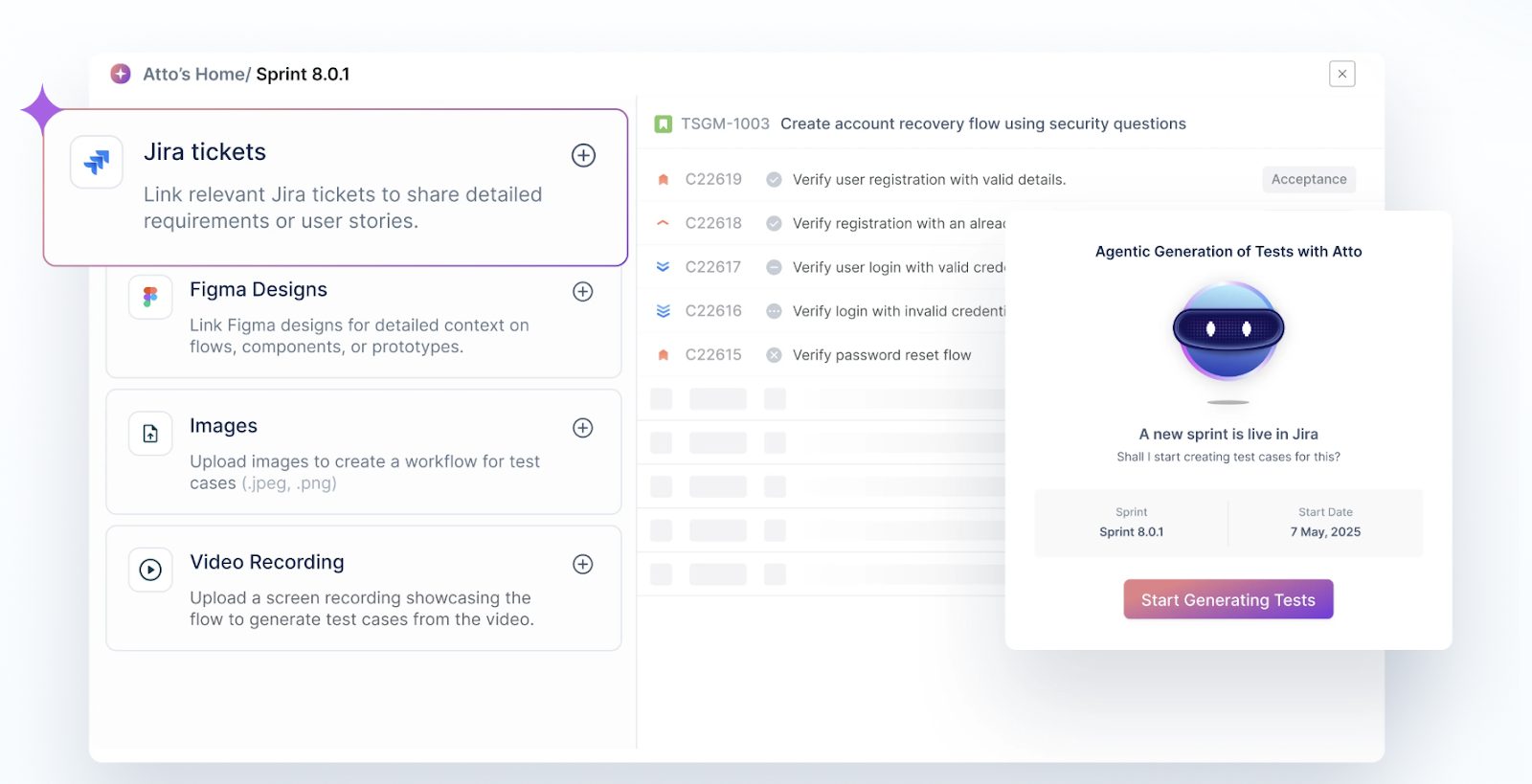

Link JIRA, GitHub, Figma, or upload requirements docs, screenshots, or videos directly into Testsigma.


Invoke the Generator Agent

Type a plain English prompt or paste a user story. The Generator Agent outputs structured test cases with steps, expected results, and edge cases; no coding required.
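Structured output is only useful if malformed cases are caught before execution. The sketch below shows one generic way to validate LLM-generated test cases that arrive as JSON; the field names, allowed `type` values, and the `GeneratedTestCase` / `parse_generated_cases` names are hypothetical and do not describe the Generator Agent's actual output format.

```python
import json
from dataclasses import dataclass

# Field names follow a hypothetical prompt contract; adjust to whatever your tool emits.
REQUIRED_FIELDS = ("title", "steps", "expected_result", "type")
ALLOWED_TYPES = ("positive", "negative", "edge")


@dataclass
class GeneratedTestCase:
    title: str
    steps: list[str]
    expected_result: str
    type: str


def parse_generated_cases(raw: str) -> tuple[list[GeneratedTestCase], list[str]]:
    """Parse model output into structured cases; return (accepted, rejection reasons)."""
    try:
        items = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [], [f"not valid JSON: {exc.msg}"]
    if not isinstance(items, list):
        return [], ["top-level value must be a JSON array"]

    accepted: list[GeneratedTestCase] = []
    rejected: list[str] = []
    for index, item in enumerate(items):
        if not isinstance(item, dict):
            rejected.append(f"item {index}: not an object")
            continue
        missing = [field for field in REQUIRED_FIELDS if field not in item]
        if missing:
            rejected.append(f"item {index}: missing {', '.join(missing)}")
            continue
        if item["type"] not in ALLOWED_TYPES:
            rejected.append(f"item {index}: unknown type {item['type']!r}")
            continue
        if not isinstance(item["steps"], list) or not item["steps"]:
            rejected.append(f"item {index}: steps must be a non-empty list")
            continue
        accepted.append(
            GeneratedTestCase(item["title"], [str(s) for s in item["steps"]],
                              item["expected_result"], item["type"])
        )
    return accepted, rejected
```

Returning rejection reasons alongside accepted cases gives the human reviewer in the "Validate and Refine" step a concrete list of what the model got wrong.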

Run the Optimizer Agent

The Optimizer Agent maps generated tests against your application’s feature tree, refines the suite by removing redundant cases, and prioritizes so that only high-impact tests run.
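The Optimizer Agent's internals are not documented here, but the general idea of "remove redundancy, keep high-impact tests" can be sketched generically. The example below assumes each candidate test carries a hypothetical team-assigned `impact` score and treats tests as duplicates when their steps match after case and whitespace normalization; all names are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    steps: tuple[str, ...]
    impact: int  # hypothetical 1-5 business-impact score assigned by the team


def normalize(steps: tuple[str, ...]) -> tuple[str, ...]:
    """Case- and whitespace-insensitive form of the steps, used to detect duplicates."""
    return tuple(" ".join(step.lower().split()) for step in steps)


def optimize_suite(candidates: list[Candidate], budget: int) -> list[Candidate]:
    """Rank by impact (ties broken by name), drop duplicates so the highest-impact
    copy survives, then keep at most `budget` tests."""
    ranked = sorted(candidates, key=lambda c: (-c.impact, c.name))
    seen: set[tuple[str, ...]] = set()
    unique: list[Candidate] = []
    for case in ranked:
        key = normalize(case.steps)
        if key not in seen:
            seen.add(key)
            unique.append(case)
    return unique[:budget]
```

Sorting before de-duplicating is a deliberate choice: when two generated tests are near-identical, the copy with the higher impact score is the one kept.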

Validate and Refine

Review initial test outputs. Adjust coverage rules, add data-driven parameters, and approve cases for execution.
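Adding data-driven parameters turns one approved test into many concrete runs. The sketch below illustrates the concept with Python's `itertools.product`; the `{name}` placeholder style is Python's `str.format` syntax chosen for illustration, not Testsigma's test-data syntax, and `expand_data_driven` is a hypothetical helper.

```python
from itertools import product


def expand_data_driven(template_steps: list[str],
                       parameters: dict[str, list[str]]) -> list[list[str]]:
    """Expand one parameterized test into one concrete case per combination of values.

    Placeholders use Python's str.format syntax, e.g. "Enter {email}"; this is an
    illustrative convention, not any specific tool's test-data syntax.
    """
    names = sorted(parameters)  # fixed order so the output is deterministic
    cases = []
    for values in product(*(parameters[name] for name in names)):
        binding = dict(zip(names, values))
        cases.append([step.format(**binding) for step in template_steps])
    return cases


steps = ["Enter {email} in the email field", "Enter {password}", "Click Login"]
data = {"email": ["user@example.com", "not-an-email"], "password": ["Secret123"]}
for case in expand_data_driven(steps, data):
    print(case)
```

The full cross product grows quickly; with many parameters, teams often switch to a pairwise selection instead, which this sketch does not attempt.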

Integrate with CI/CD

Connect Testsigma to GitHub Actions, Jenkins, Azure DevOps, or CircleCI. Tests trigger automatically on every code push.

Let Self-Healing Run

Atto monitors each execution. When a locator or UI element changes, it auto-repairs the script with zero manual intervention.

Monitor and Iterate

The Analyzer Agent surfaces flaky test flags, root cause clusters, and coverage trends. Feed new requirements back into the Generator Agent to close emerging gaps.
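Two of the signals mentioned above, flaky-test flags and root-cause clusters, have simple generic heuristics that help show what such an analyzer does. The sketch below is not the Analyzer Agent's implementation: it flags a test as flaky when it both passed and failed on the same commit, and it clusters failures by masking numbers and hex ids in error messages. The input shapes are assumptions.

```python
import re
from collections import defaultdict


def flaky_tests(runs: list[tuple[str, str, bool]]) -> list[str]:
    """runs: (test_name, commit_sha, passed). Flag tests that both passed and
    failed on the same commit, a common flakiness heuristic."""
    outcomes: dict[tuple[str, str], set[bool]] = defaultdict(set)
    for test, commit, passed in runs:
        outcomes[(test, commit)].add(passed)
    return sorted({test for (test, _), seen in outcomes.items() if seen == {True, False}})


def failure_signature(message: str) -> str:
    """Mask hex ids and numbers so failures that differ only in values group together."""
    masked = re.sub(r"0x[0-9a-fA-F]+", "<hex>", message)
    masked = re.sub(r"\d+", "<n>", masked)
    return masked.strip()


def cluster_failures(failures: list[tuple[str, str]]) -> dict[str, list[str]]:
    """failures: (test_name, error_message) -> {signature: [test names]}."""
    clusters: dict[str, list[str]] = defaultdict(list)
    for test, message in failures:
        clusters[failure_signature(message)].append(test)
    return dict(clusters)


print(cluster_failures([("a", "Timeout after 3000 ms"), ("b", "Timeout after 4500 ms")]))
```

Masking is intentionally crude; real tools usually also strip stack-frame line numbers and compare stack traces, but the grouping principle is the same.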

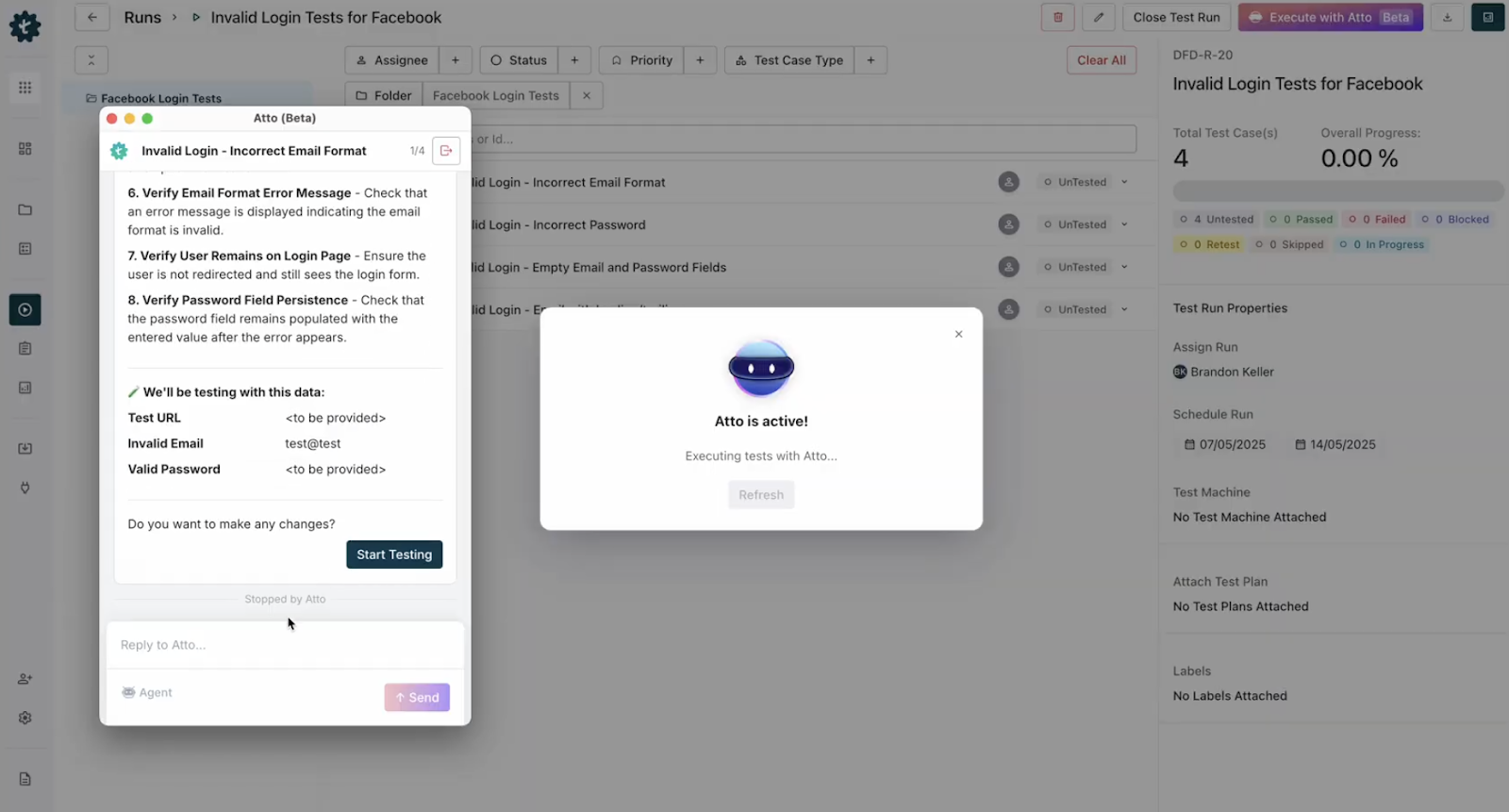

What GenAI Capabilities Does Testsigma Offer?

Testsigma brings seven GenAI capabilities into a single platform, covering every stage from test creation to CI/CD.

| Capability | How It Works | Business Impact |
| --- | --- | --- |
| Test Generation from Natural Language | Type prompts or upload Figma files, JIRA tickets, videos, or PDFs; the Generator Agent creates test cases instantly | Eliminates scripting; any team member can author tests |
| Autonomous Testing Agents | Atto (AI coworker) deploys 7 specialized agents across planning, generation, optimization, execution, analysis, maintenance, and reporting | Full QA lifecycle automation, not just test creation |
| Self-Healing & Maintenance | The Maintenance Agent detects UI/API changes and repairs broken test scripts automatically | Up to 90% reduction in script maintenance overhead |
| Accelerated Defect Analysis | The Analyzer Agent captures logs, screenshots, and root cause on failure, ready for immediate developer handoff | Cuts MTTR; faster sprint closure |
| Intelligent Test Optimization | The Optimizer Agent monitors code changes, user behavior, and deployment status and re-prioritizes test execution accordingly | Maximum coverage within a short CI/CD window |
| Real-Device Lab | Access 3,000+ real and virtual devices, browsers, and OS combinations for parallel cross-platform execution | Catches platform-specific bugs before release |
| Seamless CI/CD Integration | Native connectors to GitHub Actions, Jenkins, Azure DevOps, CircleCI, and 30+ additional platforms | Tests run on every commit; no manual trigger required |
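Re-prioritizing tests around code changes is commonly done with change-based (test impact) selection. The sketch below shows that generic technique, not the Optimizer Agent's method: it assumes a hypothetical `coverage_map` from each test to the source files it exercises (for example, collected in an earlier coverage run), and it falls back to the full suite when a changed file is not covered by any known test.

```python
def select_impacted_tests(coverage_map: dict[str, set[str]],
                          changed_files: list[str]) -> list[str]:
    """Return the tests that exercise any changed file, sorted by name.

    coverage_map: test name -> set of source files it exercises (assumed input).
    If a changed file is not covered by any test, run everything: the safe default.
    """
    changed = set(changed_files)
    covered = set().union(*coverage_map.values())
    if changed - covered:
        # Unknown impact: fall back to the full suite rather than risk a gap.
        return sorted(coverage_map)
    return sorted(test for test, files in coverage_map.items() if files & changed)


cmap = {
    "checkout": {"cart.py", "pay.py"},
    "login": {"auth.py"},
    "search": {"search.py"},
}
print(select_impacted_tests(cmap, ["pay.py"]))
```

The full-suite fallback trades speed for safety; a stricter pipeline might instead fail the build until coverage data for the new file exists.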

What Are the Challenges of Generative AI in QA?

GenAI in testing is powerful, but it comes with real pitfalls that teams need to plan for upfront.

- Irrelevant test generation: LLMs can produce tests that look valid but miss your application’s context. Use high-quality inputs and a human review gate before tests enter the regression suite.

- Compute overhead: Enterprise-grade models need heavy infrastructure. Cloud platforms like Testsigma abstract that cost so teams pay for outcomes, not GPU hours.

- Training data quality: Poor input equals poor output. Clean, diverse test data is a prerequisite, not an afterthought.

- Interpreting AI results: AI failure reports can surface unfamiliar patterns. Testsigma simplifies this with plain-language root cause summaries and one-click bug filing.
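One cheap automated check before the human review gate targets the "irrelevant test generation" problem directly: reject generated tests that reference UI elements the application does not have. The sketch below assumes a hypothetical convention in which generated steps name elements in `[square brackets]`, and a hypothetical inventory of known element names; both are illustrative, not features of any specific tool.

```python
import re

# Hypothetical convention: generated steps name UI elements in [square brackets].
ELEMENT_REF = re.compile(r"\[([^\]]+)\]")


def review_gate(steps: list[str], known_elements: set[str]) -> list[str]:
    """Return element names a generated test references that the app doesn't have,
    in first-seen order. An empty list only means the test passed this automated
    check; it still needs human review before entering the regression suite."""
    unknown: list[str] = []
    for step in steps:
        for name in ELEMENT_REF.findall(step):
            if name not in known_elements and name not in unknown:
                unknown.append(name)
    return unknown


inventory = {"Login button", "Email field", "Password field"}
generated = ["Type into [Email field]", "Click [Login button]", "Click [Forgot PIN link]"]
print(review_gate(generated, inventory))
```

A test that references an element like "Forgot PIN link" on a page that has none is a typical sign the model filled in plausible-looking but out-of-context behavior.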

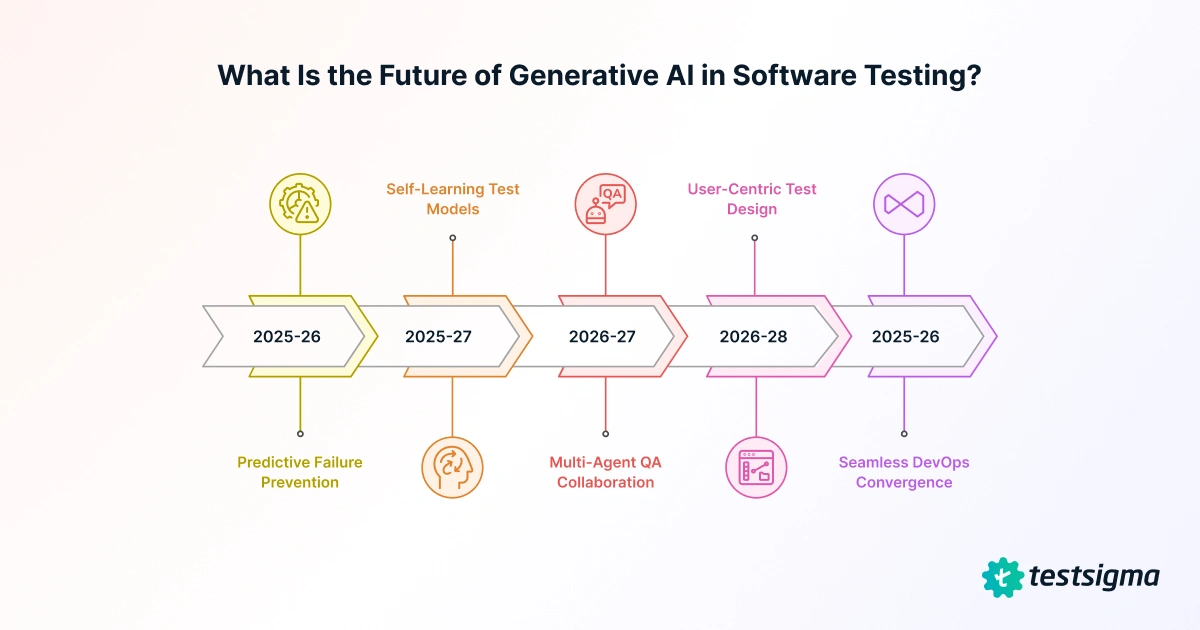

What Is the Future of Generative AI in Software Testing?

According to Capgemini, 85% of the software workforce will use GenAI tools by 2025, with testing, design, and automation as the primary adoption areas. Here’s where the technology is heading:

| Trend | What It Means for QA Teams | Timeline |
| --- | --- | --- |
| Predictive Failure Prevention | AI moves from self-healing broken tests to preventing failures before they occur, based on code change analysis and historical failure patterns | 2025–2026 |
| Self-Learning Test Models | Test suites that continuously retrain on new execution results, improving logic and adaptability without human intervention | 2025–2027 |
| Multi-Agent QA Collaboration | Specialized agents (UI, performance, security) share findings across a common memory layer and adjust strategies based on peer insights | 2026–2027 |
| User-Centric Test Design | Agents synthesize real session recordings and usage analytics to generate tests reflecting actual user journeys, not developer assumptions | 2026–2028 |
| Seamless DevOps Convergence | Always-on testing where AI continuously validates the application in production, not just pre-release | 2025–2026 |

Conclusion

Generative AI in software testing is no longer experimental; it is a production-grade capability delivering measurable ROI. Teams using GenAI testing tools report 30–40% productivity gains, up to 90% reduction in maintenance overhead, and test cycles compressed by 6–10x. As agentic AI platforms like Testsigma bring seven specialized agents to every phase of QA, from generation to reporting, the competitive gap between teams that adopt and teams that don’t will only widen.

The question is not whether GenAI will reshape your testing workflow; with Capgemini projecting that 85% of the software workforce will use GenAI tools by 2025, that shift is already under way. The question is how quickly you can make the transition work for your team.

Frequently Asked Questions

How is GenAI used across the SDLC?

GenAI is used to automate activities across the Software Development Life Cycle (SDLC), such as requirement gathering, design, development, software testing, and deployment. Beyond automating these tasks, it makes them more efficient, which ultimately accelerates the entire SDLC.

Which AI-driven test automation tool should you choose?

Many AI-driven test automation tools are available, each suited to different use cases. Based on recent trends among enterprise users, however, Testsigma appears to be an ideal option for teams trying to automate and streamline their testing processes. The no-code platform can be used even by testers with no programming experience.