Table of Contents

- 1 Overview

- 2 What is a defect in software testing?

- 3 What are the types of defects?

- 4 How to log a defect?

- 5 What Is a Defect in Software Testing?

- 6 Types of Defects in Software Testing

- 7 Characteristics of a Good Software Defect

- 8 Defect Lifecycle (Bug Life Cycle) With Real-World Example

- 9 How to Log a Defect: Best Practices

- 10 Download the Defect Report Template

- 11 How to Prevent Defects in Software Testing

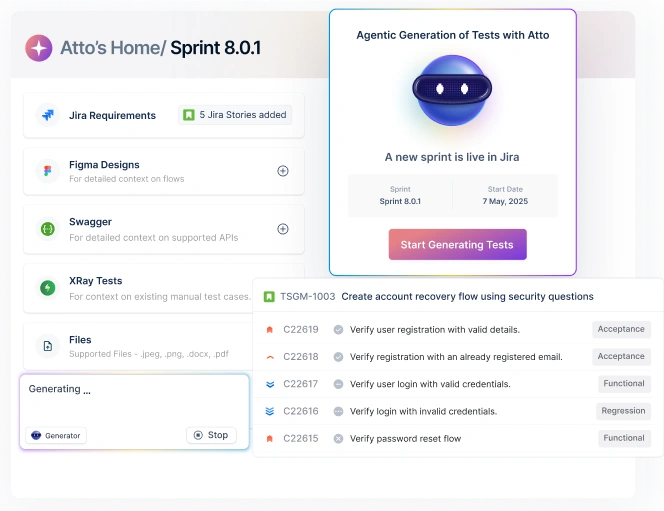

- 12 Managing Defects Using Test Automation Tools

- 13 Tracking and Reducing Defects in Software Testing

- 14 FAQs

Overview

What is a defect in software testing?

A defect in software testing is any flaw, gap, or deviation from the expected behavior of the software that can cause it to fail or perform incorrectly.

What are the types of defects?

- Functional defects: Features not working as expected.

- Performance defects: Slow response, crashes under load.

- Usability defects: Confusing or hard‑to‑use interfaces.

- Compatibility defects: Issues across devices, browsers, or OS.

- Security defects: Vulnerabilities that could be exploited.

- Logical and calculation defects: Wrong formulas, incorrect logic.

- UI/UX defects: Misaligned elements, broken layouts, or inconsistent design.

How to log a defect?

- Defect title: A short, descriptive name.

- Pre‑conditions: What needs to be in place before testing.

- Steps to reproduce: Detailed actions to trigger the defect.

- Expected vs actual result: What should happen vs what happened.

- Attachments and logs: Screenshots, videos, or logs for context.

- Environment details: Device, OS, browser, or software version.

No matter how experienced the team or how robust the process, software defects are unavoidable.

Industry studies estimate that modern software projects contain multiple defects per thousand lines of code, and that developers spend nearly half their time identifying and fixing bugs. That alone explains why defects remain one of the most expensive and persistent challenges in software development.

A defect in software testing isn’t just a “bug” in the casual sense; it’s any deviation from expected behavior that can impact functionality, reliability, or user experience.

Ahead, we’ll unpack the meaning of defects, examine common types of defects, and look at how they emerge across the software lifecycle.

What Is a Defect in Software Testing?

A defect in software testing is simply a moment where the software doesn’t behave the way it was expected to. You click a button, and nothing happens. A calculation looks right but is slightly off. A feature works in staging and quietly fails in production.

These mismatches usually creep in through requirement gaps, coding oversights, environment or configuration issues, or integration problems that arise when different systems are stitched together.

However, “defect” often gets mixed up with terms that sound interchangeable but aren’t. In software development, each of these words describes a different stage of the same problem. Here’s how they actually differ in practice:

| Term | What it really means | Example |
|---|---|---|
| Error | A human mistake during design or coding | A developer writes the wrong formula |
| Defect | The problem that ends up in the software | Tax is calculated incorrectly |
| Bug | An informal name used for a defect | “There’s a bug in checkout.” |
| Fault | The exact piece of faulty code | A `+` used instead of `*` |
| Failure | What the user experiences when it breaks | The checkout total is wrong at payment |
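To make the distinction concrete, here is a minimal Python sketch of the tax example from the table. The function names and the 8% rate are hypothetical, chosen only for illustration: the `+` typed where `*` was intended is the fault, the wrong tax amount it produces is the defect, and the wrong total a shopper sees at checkout is the failure.

```python
from typing import Callable

TAX_RATE = 0.08  # hypothetical rate, used only for this illustration

def tax_faulty(subtotal: float) -> float:
    # Fault: the developer's error left a `+` where `*` was intended.
    return subtotal + TAX_RATE

def tax_correct(subtotal: float) -> float:
    # Intended behavior: tax is a percentage of the subtotal.
    return subtotal * TAX_RATE

def checkout_total(subtotal: float, tax_fn: Callable[[float], float]) -> float:
    # This is the number the user sees; a wrong value here is the failure.
    return round(subtotal + tax_fn(subtotal), 2)

print("expected:", checkout_total(100.0, tax_correct))
print("actual:  ", checkout_total(100.0, tax_faulty))
```

With a 100.00 subtotal, the correct version returns 108.0 while the faulty one returns 200.08. Nothing crashes, which is why this kind of defect often surfaces only when someone compares the actual total with the expected one.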

Types of Defects in Software Testing

You might assume a software defect is just one kind of problem, something broken and obvious. In practice, defects show up in many forms, affecting everything from core functionality to performance, security, and user experience.

Below are the most common categories testers watch for:

Functional Defects

When a feature doesn’t behave the way the requirements describe, you’re likely dealing with a functional issue. This could mean missing steps, incorrect outputs, or workflows that break in the middle.

Example: A payment goes through, but the order status never updates.

How testers detect them: By comparing application behavior against requirements, user stories, and acceptance criteria through functional and regression testing.
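A functional check boils down to comparing observed behavior with an acceptance criterion. The sketch below uses a hypothetical `Order` model and assumes the criterion “a successful payment moves the order to PAID”; `BuggyOrder` reproduces the defect from the example above.

```python
from dataclasses import dataclass

@dataclass
class Order:
    """Hypothetical order model used only for this illustration."""
    order_id: str
    paid: bool = False
    status: str = "PENDING"

    def pay(self) -> None:
        self.paid = True
        self.status = "PAID"  # assumed acceptance criterion: payment updates status

class BuggyOrder(Order):
    def pay(self) -> None:
        # Functional defect: the payment is recorded, but the status never changes.
        self.paid = True

def meets_payment_criterion(order: Order) -> bool:
    """Acceptance check: after a successful payment, the order must read PAID."""
    order.pay()
    return order.paid and order.status == "PAID"

print(meets_payment_criterion(Order("A-1001")))       # True
print(meets_payment_criterion(BuggyOrder("A-1002")))  # False
```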

Performance Defects

Sometimes the system works – just not fast or reliably enough. Performance issues often surface only under real-world load or stress.

Example: Search results load instantly during testing but slow to a crawl when thousands of users search at once.

How testers detect them: Using load, stress, and endurance testing while monitoring response times and resource usage.
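Performance tests usually assert on response-time percentiles rather than single measurements. The toy harness below is a sketch, not a real load-testing tool: it fires concurrent calls at a hypothetical `search_stub` through a thread pool and compares the 95th-percentile latency with an assumed 200 ms budget. Real load and stress tests generate traffic against a deployed system with dedicated tooling.

```python
import statistics
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

def timed_call(fn: Callable[[], object]) -> float:
    """Run fn once and return its duration in milliseconds."""
    start = time.perf_counter()
    fn()
    return (time.perf_counter() - start) * 1000

def run_load(fn: Callable[[], object], users: int = 20, requests: int = 200) -> list[float]:
    """Issue `requests` calls from `users` concurrent workers; return all latencies."""
    with ThreadPoolExecutor(max_workers=users) as pool:
        return list(pool.map(lambda _: timed_call(fn), range(requests)))

def p95(latencies: list[float]) -> float:
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    return statistics.quantiles(latencies, n=20)[-1]

def search_stub() -> list[int]:
    # Hypothetical stand-in for a real search request.
    time.sleep(0.005)
    return list(range(20))

BUDGET_MS = 200.0  # assumed response-time budget; take yours from requirements
latencies = run_load(search_stub)
print(f"p95 = {p95(latencies):.1f} ms, within budget: {p95(latencies) <= BUDGET_MS}")
```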

Usability Defects

Even when everything functions correctly, poor usability can still make a product frustrating. These defects affect how intuitive and effortless the experience feels.

Example: Users struggle to find the logout option even though it’s available.

How testers detect them: Through exploratory testing, usability sessions, and feedback from real users.

Compatibility Defects

Differences in browsers, devices, or operating systems can introduce unexpected behavior. What works in one environment may fail in another.

Example: A layout looks fine on a desktop but breaks on smaller mobile screens.

How testers detect them: By testing across multiple browsers, devices, OS versions, and screen resolutions.
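Cross-environment testing starts with deciding which combinations to cover. This sketch builds a browser/platform matrix with `itertools.product` and filters it through an assumed support policy; `is_in_scope` is hypothetical, so substitute the combinations your users actually run.

```python
from itertools import product

BROWSERS = ["chrome", "firefox", "safari", "edge"]
PLATFORMS = ["windows", "macos", "android", "ios"]

def is_in_scope(browser: str, os_name: str) -> bool:
    # Assumed support policy for this sketch: Safari is tested only on Apple
    # platforms, and Edge only on desktop. Replace with your own policy.
    if browser == "safari":
        return os_name in {"macos", "ios"}
    if browser == "edge":
        return os_name in {"windows", "macos"}
    return True

def compatibility_matrix() -> list[tuple[str, str]]:
    """Every browser/platform pair the test run should cover."""
    return [(b, o) for b, o in product(BROWSERS, PLATFORMS) if is_in_scope(b, o)]

for browser, os_name in compatibility_matrix():
    print(f"run checkout suite on {browser} / {os_name}")
```

Each pair would then drive one run of the same suite, so a defect that appears on only one platform still gets exercised.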

Security Defects

Issues here don’t always announce themselves, but can have serious consequences if exploited. They weaken the system’s ability to protect data and users.

Example: An unauthenticated user can access restricted endpoints.

How testers detect them: Through security testing, vulnerability assessments, and penetration testing.
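One basic security check is to call restricted endpoints without credentials and confirm that each one refuses the request. The sketch below does this in-process against two hypothetical handlers that return HTTP status codes; the route prefixes are made up, and a real test would send requests to a running application with an HTTP client.

```python
from typing import Callable, Optional

Handler = Callable[[str, Optional[str]], int]
RESTRICTED = ("/admin", "/api/orders")  # hypothetical restricted route prefixes

def secure_handler(path: str, token: Optional[str]) -> int:
    """Hypothetical handler: returns an HTTP status code."""
    if path.startswith(RESTRICTED) and token is None:
        return 401
    return 200

def buggy_handler(path: str, token: Optional[str]) -> int:
    # Security defect: only /admin is protected; /api/orders is left open.
    if path.startswith("/admin") and token is None:
        return 401
    return 200

def unprotected_endpoints(handler: Handler, paths: list[str]) -> list[str]:
    """Call each restricted path with no credentials; report any not returning 401."""
    return [p for p in paths if handler(p, None) != 401]

probe = ["/admin/users", "/api/orders/42"]
print(unprotected_endpoints(secure_handler, probe))  # []
print(unprotected_endpoints(buggy_handler, probe))   # ['/api/orders/42']
```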

Logical and Calculation Defects

These defects stem from incorrect logic, formulas, or conditional handling in the code.

Example: Discounts apply correctly for one item but fail when multiple items are added.

How testers detect them: By validating edge cases, boundary values, and complex business rules.
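Boundary and edge-case testing for the discount example can be as simple as a table of inputs with expected totals. The functions below are hypothetical: `discounted_total_buggy` plants the defect described above by discounting only the first item, and `Decimal` is used so the money arithmetic is exact.

```python
from decimal import Decimal, ROUND_HALF_UP
from typing import Callable

CENT = Decimal("0.01")

def discounted_total(prices: list[Decimal], percent: Decimal) -> Decimal:
    """Apply a percentage discount to the whole cart."""
    subtotal = sum(prices, Decimal("0"))
    return (subtotal * (1 - percent / 100)).quantize(CENT, rounding=ROUND_HALF_UP)

def discounted_total_buggy(prices: list[Decimal], percent: Decimal) -> Decimal:
    # Logical defect: the discount is applied to the first item only.
    if not prices:
        return Decimal("0.00")
    first = prices[0] * (1 - percent / 100)
    return (first + sum(prices[1:], Decimal("0"))).quantize(CENT, rounding=ROUND_HALF_UP)

# Boundary and edge cases: empty cart, one item, several items.
CASES = {
    "empty cart": ([], Decimal("10"), Decimal("0.00")),
    "single item": ([Decimal("20.00")], Decimal("10"), Decimal("18.00")),
    "multiple items": ([Decimal("20.00"), Decimal("30.00")], Decimal("10"), Decimal("45.00")),
}

def failing_cases(fn: Callable[[list[Decimal], Decimal], Decimal]) -> list[str]:
    return [name for name, (prices, pct, want) in CASES.items() if fn(prices, pct) != want]

print(failing_cases(discounted_total))        # []
print(failing_cases(discounted_total_buggy))  # ['multiple items']
```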

UI/UX Defects

UI/UX defects include visual inconsistencies, layout issues, or interactions that don’t align with design expectations.

Example: Buttons overlap or text becomes unreadable on certain screens.

How testers detect them: Through visual testing, design comparisons, and cross-device UI reviews.

Characteristics of a Good Software Defect

A defect in software testing is never “good” by itself, but the way it’s reported can make a huge difference in how quickly and accurately it gets fixed.

A well-documented defect removes ambiguity, reduces back-and-forth, and helps teams move from detection to resolution with confidence. This is especially important when multiple defect types flow through the same tracking system.

A strong defect typically has the following characteristics:

- Clear and reproducible: The defect clearly states what happened, what was expected, and the exact steps to reproduce it.

- Proper severity and priority: Severity reflects the defect’s impact, while priority indicates how urgently it should be fixed. Correct classification helps teams focus on the defects that matter most.

- Linked to requirements or expected behavior: Tying a defect back to a requirement, user story, or acceptance criterion removes subjectivity and clearly explains why it is a defect.

- Supported with evidence: Logs, screenshots, videos, or test results provide concrete proof and technical context, making root-cause analysis faster and more accurate.

When defects are documented this way, they move faster through triage, fixing, and retesting, with far fewer handoffs and delays.
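If your tracker lets you script against its data, these characteristics translate naturally into a record type. The sketch below is a hypothetical model, not any particular tool’s schema: severity and priority are separate fields, `missing_fields` flags reports that are not yet reproducible, linked, or backed by evidence, and `triage_order` sorts the queue by priority, then severity.

```python
from dataclasses import dataclass, field
from enum import IntEnum
from typing import Optional

class Severity(IntEnum):  # impact on the product
    LOW = 1
    MEDIUM = 2
    HIGH = 3
    CRITICAL = 4

class Priority(IntEnum):  # urgency of the fix
    LOW = 1
    MEDIUM = 2
    HIGH = 3

@dataclass
class Defect:
    defect_id: str
    title: str
    steps: list[str]
    expected: str
    actual: str
    severity: Severity
    priority: Priority
    requirement: Optional[str] = None  # hypothetical user-story ID, e.g. "US-42"
    evidence: list[str] = field(default_factory=list)  # screenshot or log file names

    def missing_fields(self) -> list[str]:
        """Name the characteristics this report still lacks."""
        checks = {
            "steps": bool(self.steps),
            "expected": bool(self.expected),
            "actual": bool(self.actual),
            "requirement": self.requirement is not None,
            "evidence": bool(self.evidence),
        }
        return [name for name, ok in checks.items() if not ok]

def triage_order(defects: list[Defect]) -> list[Defect]:
    # Most urgent first; severity breaks ties between equal priorities.
    return sorted(defects, key=lambda d: (d.priority, d.severity), reverse=True)

typo = Defect("D-1", "Typo in footer", ["Open the home page"], "Copyright", "Copyrigth",
              Severity.LOW, Priority.LOW, "US-7", ["footer.png"])
checkout = Defect("D-2", "Checkout total wrong with discount code",
                  ["Add 2 items to the cart", "Apply a discount code"],
                  "Total reflects the discount", "Total is unchanged",
                  Severity.CRITICAL, Priority.HIGH)
print([d.defect_id for d in triage_order([typo, checkout])])  # ['D-2', 'D-1']
print(checkout.missing_fields())  # ['requirement', 'evidence']
```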

Defect Lifecycle (Bug Life Cycle) with Real-World Example

Once a defect in software testing is reported clearly, it moves through a defined workflow known as the defect or bug lifecycle. This lifecycle shows how a defect is tracked, from discovery to resolution, so everyone knows its current state and next action.

Let’s walk through the common stages using a simple example:

The checkout page incorrectly calculates the total price when a discount code is applied.

| Step | Status | What Happens | Example |
|---|---|---|---|
| 1 | New | The defect is logged by a tester | The incorrect discounted total is reported |
| 2 | Assigned | Ownership is given to a developer or team | Assigned to the payments developer |
| 3 | Open | The developer starts investigating | Pricing logic is reviewed |
| 4 | Fixed | Code changes are made | Discount calculation is corrected |
| 5 | Retested | Tester verifies the fix | Total price is now correct |
| 6 | Closed / Reopened | Defect is closed or sent back | Closed after pass, reopened if it fails |
| 7 | Deferred / Rejected | The fix is postponed or declined, usually during triage before a fix is made | Minor issue moved to future release |

A shared lifecycle gives teams a common language, so “what’s happening with this bug?” isn’t a recurring meeting question.
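The lifecycle above behaves like a small state machine. The sketch below encodes one plausible set of transitions as an assumption (real trackers let each team configure its own) and assumes Deferred and Rejected are reached during triage, before a fix lands. It then walks the discount-code defect from New to Closed.

```python
from enum import Enum

class Status(Enum):
    NEW = "New"
    ASSIGNED = "Assigned"
    OPEN = "Open"
    FIXED = "Fixed"
    RETESTED = "Retested"
    REOPENED = "Reopened"
    CLOSED = "Closed"
    DEFERRED = "Deferred"
    REJECTED = "Rejected"

# Assumed workflow; trackers let each team configure its own transitions.
ALLOWED = {
    Status.NEW: {Status.ASSIGNED, Status.DEFERRED, Status.REJECTED},
    Status.ASSIGNED: {Status.OPEN, Status.DEFERRED, Status.REJECTED},
    Status.OPEN: {Status.FIXED, Status.DEFERRED, Status.REJECTED},
    Status.FIXED: {Status.RETESTED},
    Status.RETESTED: {Status.CLOSED, Status.REOPENED},
    Status.REOPENED: {Status.ASSIGNED},
    Status.DEFERRED: {Status.ASSIGNED},
    Status.CLOSED: set(),
    Status.REJECTED: set(),
}

def can_move(current: Status, target: Status) -> bool:
    return target in ALLOWED[current]

def advance(history: list[Status], target: Status) -> list[Status]:
    """Return a new history with `target` appended, rejecting illegal jumps."""
    if not can_move(history[-1], target):
        raise ValueError(f"cannot move from {history[-1].value} to {target.value}")
    return history + [target]

# The discount-code defect from the table, taking the happy path to closure.
history = [Status.NEW]
for step in (Status.ASSIGNED, Status.OPEN, Status.FIXED, Status.RETESTED, Status.CLOSED):
    history = advance(history, step)
print(" -> ".join(s.value for s in history))
```

Running it prints `New -> Assigned -> Open -> Fixed -> Retested -> Closed`; an illegal jump such as New straight to Closed raises a `ValueError`.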

How to Log a Defect: Best Practices

We all know a defect goes through stages from discovery to closure, but what you put in the report makes all the difference. A vague report slows down the fix, while a clear one can get the defect resolved in record time.

Here’s what to add to make your defect reports effective.

Defect Title

Start with a concise, descriptive title that captures the essence of the problem. Instead of writing “Button not working,” write something like “Profile picture upload fails when file size exceeds 5 MB.” A title like this immediately tells the team which feature and scenario are affected.

Pre-Conditions

Specify any setup required before the defect occurs.

For example, if a user tries to save a draft blog post but the “Save” button is greyed out, you would note that the user is logged in, has entered text in the editor, and is using the desktop web version. These details make reproduction straightforward.

Steps to Reproduce

Provide step-by-step instructions to recreate the issue, assuming no prior knowledge. For instance:

- Open the blog editor

- Enter at least 200 words

- Try to click “Save Draft”

- Observe that the “Save Draft” button is disabled and the draft is not saved

Writing the steps this way ensures anyone can follow them without guesswork.

Expected vs Actual Result

Clearly explain what should happen versus what actually happens. Using the blog editor example: the expected result is that the draft saves successfully; the actual result is that the “Save Draft” button remains inactive, and the text isn’t stored.

Attachments and Logs

Include screenshots, screen recordings, or logs whenever possible. If the bug involves the mobile app crashing when opening a large image, a crash log from the device or a short video showing the app freeze provides invaluable context for the developer.

Environment Details

Specify the software and hardware context, including device, OS, app version, and network conditions. A bug that only happens on iOS 16 using the latest app version is very different from one that happens across multiple platforms.
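The fields above map directly onto a structured report. Here is a hypothetical Python sketch that renders the blog-editor defect as plain text suitable for pasting into a tracker; the environment values and the attachment name are placeholders to be replaced with the real browser, OS, version, and files.

```python
from dataclasses import dataclass, field

@dataclass
class DefectReport:
    title: str
    preconditions: list[str]
    steps: list[str]
    expected: str
    actual: str
    environment: dict[str, str]
    attachments: list[str] = field(default_factory=list)

    def to_text(self) -> str:
        """Render the report as plain text for a tracker's description field."""
        lines = [f"Title: {self.title}", "", "Pre-conditions:"]
        lines += [f"- {item}" for item in self.preconditions]
        lines += ["", "Steps to reproduce:"]
        lines += [f"{n}. {step}" for n, step in enumerate(self.steps, start=1)]
        lines += ["", f"Expected: {self.expected}", f"Actual: {self.actual}"]
        lines += ["", "Environment:"]
        lines += [f"- {key}: {value}" for key, value in self.environment.items()]
        if self.attachments:
            lines += ["", "Attachments:"] + [f"- {name}" for name in self.attachments]
        return "\n".join(lines)

report = DefectReport(
    title="Save Draft button stays disabled after entering text in the blog editor",
    preconditions=["User is logged in", "Desktop web version"],
    steps=["Open the blog editor", "Enter at least 200 words", "Try to click Save Draft"],
    expected="The draft saves successfully",
    actual="Save Draft remains inactive and the text is not stored",
    environment={"Browser": "<browser and version>", "OS": "<OS and version>"},
    attachments=["save-draft-disabled.mp4"],  # hypothetical file name
)
print(report.to_text())
```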

Download the Defect Report Template

If you’re just getting started, don’t worry! Here’s a defect report template you can download and start filling out today. Every important detail is already set up for you.

How to Prevent Defects in Software Testing

Finding defects is important, but preventing them in the first place saves time, cost, and frustration. A proactive approach combines strong requirements, early testing, automation, and robust environments.

Here’s how teams can strategically reduce defects:

Shift-Left Testing

Shift-left testing is about testing earlier, while code is being written, so small issues don’t turn into bigger problems later.

For instance, if you’re building a payment module, writing unit tests while coding helps catch logic defects or calculation errors immediately, so they don’t propagate into other modules.
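As a shift-left sketch, the unit tests below would be written alongside a hypothetical payment helper, `order_total_cents`. Amounts are kept in integer cents, an assumption made here to avoid floating-point rounding surprises, and one test pins down the rounding rule so a calculation defect fails immediately instead of surfacing at checkout.

```python
import unittest

def order_total_cents(unit_price_cents: int, quantity: int, tax_percent: int) -> int:
    """Hypothetical payment helper: integer cents, tax rounded half up."""
    if quantity < 0:
        raise ValueError("quantity must not be negative")
    subtotal = unit_price_cents * quantity
    tax = (subtotal * tax_percent + 50) // 100  # half-up rounding without floats
    return subtotal + tax

class OrderTotalTest(unittest.TestCase):
    def test_single_item(self):
        self.assertEqual(order_total_cents(1000, 1, 8), 1080)

    def test_tax_rounds_half_up(self):
        # 3 x 19.99 = 59.97; 8% tax is 4.7976, which rounds to 4.80
        self.assertEqual(order_total_cents(1999, 3, 8), 6477)

    def test_negative_quantity_rejected(self):
        with self.assertRaises(ValueError):
            order_total_cents(1000, -1, 8)
```

Saved to a file, the tests run with `python -m unittest <file>.py`, ideally on every local change and again in CI.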

Strong Requirements Review

Defects often start on paper. Ambiguous or incomplete requirements are a major source of bugs. Review requirements carefully with developers, testers, and product owners to clarify expectations.

For example, defining exactly how a “Save Draft” feature should behave under multiple tabs or browser sessions prevents functional defects like drafts failing to save.

Automated Testing and Regression Coverage

Manual testing is slow and error-prone for repetitive tasks. Use automated tests to cover critical workflows and catch regressions.

When a new product filter is added, automated checks confirm that sorting still works correctly and that search functionality is unaffected, catching defects before they reach production.
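A regression suite is the set of existing checks rerun after every change, plus new checks for the new feature. The catalog, `search`, and `sort_by_price` below are hypothetical stand-ins for the product filter example.

```python
from typing import Optional

PRODUCTS = [
    {"name": "Desk Lamp", "price": 35.0, "category": "home"},
    {"name": "LED Strip", "price": 20.0, "category": "home"},
    {"name": "USB Lamp", "price": 12.0, "category": "tech"},
]

def search(products: list[dict], query: str, category: Optional[str] = None) -> list[dict]:
    hits = [p for p in products if query.lower() in p["name"].lower()]
    if category is not None:  # the newly added filter
        hits = [p for p in hits if p["category"] == category]
    return hits

def sort_by_price(products: list[dict]) -> list[dict]:
    return sorted(products, key=lambda p: p["price"])

def names(products: list[dict]) -> list[str]:
    return [p["name"] for p in products]

def regression_failures() -> list[str]:
    checks = {
        # Existing behavior that must not change when the filter ships.
        "search still matches by name": names(search(PRODUCTS, "lamp")) == ["Desk Lamp", "USB Lamp"],
        "sorting is still ascending by price": [p["price"] for p in sort_by_price(PRODUCTS)] == [12.0, 20.0, 35.0],
        # New check added alongside the new feature.
        "category filter narrows results": names(search(PRODUCTS, "lamp", category="tech")) == ["USB Lamp"],
    }
    return [name for name, passed in checks.items() if not passed]

print(regression_failures() or "all regression checks passed")
```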

Continuous Integration/Continuous Delivery (CI/CD)

Integrate code frequently and run automated tests on every commit. A CI/CD pipeline can immediately flag defects, such as a form validation test failing after a UI tweak, preventing broken code from going live. This reduces the chance of defective code piling up and keeps releases stable.

Test Data and Environment Management

Many defects in software testing are environment-specific. Maintain clean, realistic test data and a stable QA environment that mirrors production.

For example, if API permissions differ between staging and production, a feature that works in QA might fail in production. Careful environment management prevents these defects.
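Environment drift like the permissions example can be caught with a simple comparison. The sketch below assumes each environment’s permissions can be exported as a mapping from role to permission names (the roles and permission strings are made up) and reports what exists in only one of them.

```python
Permissions = dict[str, set[str]]

def permission_drift(staging: Permissions, production: Permissions) -> dict[str, dict[str, set[str]]]:
    """Report, per role, the permissions that exist in only one environment."""
    drift = {}
    for role in sorted(staging.keys() | production.keys()):
        in_staging = staging.get(role, set())
        in_production = production.get(role, set())
        if in_staging != in_production:
            drift[role] = {
                "only_in_staging": in_staging - in_production,
                "only_in_production": in_production - in_staging,
            }
    return drift

staging = {"support": {"orders:read", "orders:refund"}, "admin": {"orders:read", "users:write"}}
production = {"support": {"orders:read"}, "admin": {"orders:read", "users:write"}}
print(permission_drift(staging, production))
```

Here it flags that the `support` role can issue refunds in staging but not in production, the kind of gap that lets a feature pass QA and then fail in production.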

Managing Defects Using Test Automation Tools

Teams using automated testing boost defect detection by up to 90%!

Yep, that’s how powerful automation can be.

It spots patterns humans might miss, such as a button failing only in a specific browser or a report miscalculating totals under unusual input conditions.

Automatically generating defect logs and capturing screenshots or other test evidence makes defects easier to reproduce and fix.
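The idea of capturing evidence automatically can be sketched in a few lines. This is a hypothetical helper, not how Testsigma or any specific tool works: `run_and_log` runs one check and, on an assertion failure, records the message, traceback, runtime environment, and a UTC timestamp in an in-memory `DEFECT_LOG`. The `discounted_price` function contains a planted fault so the check fails.

```python
import platform
import traceback
from datetime import datetime, timezone
from typing import Callable

DEFECT_LOG: list[dict] = []  # hypothetical sink; a real pipeline would file tickets

def run_and_log(check: Callable[[], None]) -> bool:
    """Run one automated check; on failure, record evidence for a defect report."""
    try:
        check()
        return True
    except AssertionError as exc:
        DEFECT_LOG.append({
            "check": check.__name__,
            "message": str(exc),
            "traceback": traceback.format_exc(),
            "python": platform.python_version(),
            "os": platform.system(),
            "timestamp": datetime.now(timezone.utc).isoformat(),
        })
        return False

def discounted_price(price: int, percent: int) -> int:
    return price - percent  # planted fault: subtracts the percentage as a flat amount

def check_discount() -> None:
    actual = discounted_price(200, 10)
    assert actual == 180, f"expected 180, got {actual}"

print(run_and_log(check_discount))  # False
print(DEFECT_LOG[-1]["message"])    # expected 180, got 190
```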

This also shortens the defect lifecycle. Instead of waiting for testers to manually verify each workflow, issues are flagged immediately, giving developers instant feedback. Tools like Testsigma integrate seamlessly with CI/CD pipelines, helping teams track defects consistently without extra manual work.

Tracking and Reducing Defects in Software Testing

Sure, defects can be annoying, but they’re also little signals showing where your software could trip up in the real world. Spotting defect types early helps you focus on tricky areas, avoid repeating mistakes, and catch sneaky edge cases nobody expects.

Knowing what a defect looks like in different scenarios also makes it way easier to reproduce and fix, instead of chasing phantom bugs all day.
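Spotting where defects cluster can start with a simple tally. The records below are hypothetical; in practice you would export them from your defect tracker. `collections.Counter` counts defects per type and per module to surface hotspots worth extra testing.

```python
from collections import Counter

# Hypothetical sample of logged defects; in practice, export these from your tracker.
defects = [
    {"id": "D-101", "type": "functional", "module": "checkout"},
    {"id": "D-102", "type": "calculation", "module": "checkout"},
    {"id": "D-103", "type": "ui", "module": "profile"},
    {"id": "D-104", "type": "calculation", "module": "checkout"},
    {"id": "D-105", "type": "compatibility", "module": "search"},
]

by_type = Counter(d["type"] for d in defects)
by_module = Counter(d["module"] for d in defects)

print("most common type:", by_type.most_common(1))  # [('calculation', 2)]
print("hotspot module:", by_module.most_common(1))  # [('checkout', 3)]
```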

Automation makes this even smoother. Testsigma can automatically log defects, capture test evidence, and track issues across environments. That way, your team can focus on shipping reliable software rather than hunting down why things failed.

FAQs

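What is the difference between a defect and a bug?

A defect is any deviation from the software’s expected behavior, while “bug” is the informal name most teams use for the same thing. The exact piece of faulty code behind it is called the fault.

What are the main types of defects?

Major categories include functional, performance, usability, compatibility, security, logical, and UI/UX defects.

How are defects prioritized?

Defects are prioritized based on severity, impact on users, and business requirements.

What is the defect life cycle?

The defect life cycle is the process a defect goes through from discovery to closure, including stages like new, assigned, fixed, retested, and closed/reopened.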